Cheats for the PAL version of Quake on the Sega Saturn

Friday, 25th November 2016

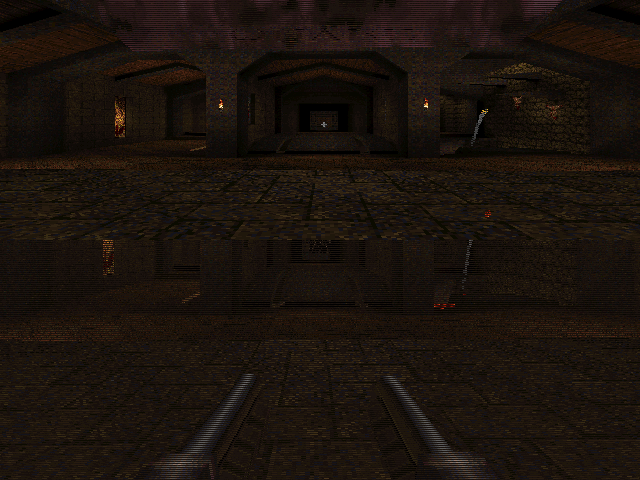

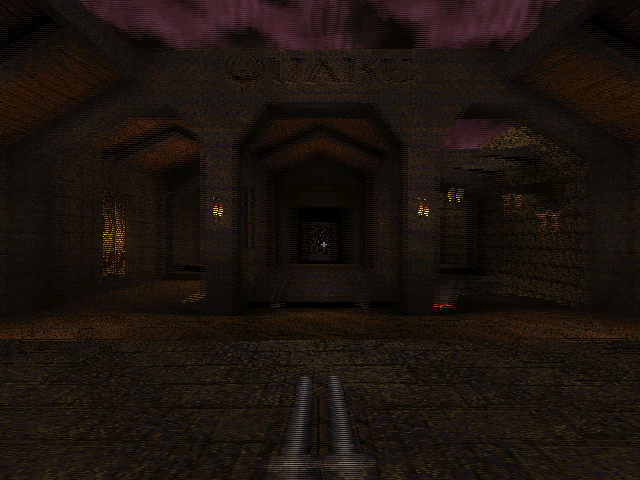

I recently picked up a Sega Saturn and a copy of the technical marvel that is Quake for it.

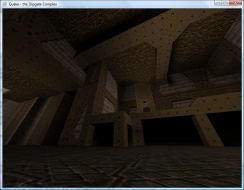

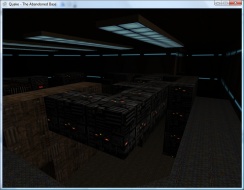

The Saturn is not renowned for being a particularly capable 3D machine, so the fact that Quake runs at all is quite remarkable, let alone that it runs as well as it does in Lobotomy Software's version. Rather than port the Quake engine to the Saturn, the game uses Lobotomy's own SlaveDriver engine and includes conversions of 28 of the original 32 levels, with some minor tweaks to improve performance. It certainly captures the atmosphere of Quake far more faithfully than most console ports of DOOM captured that game, leaving the sound and music intact and retaining the gritty aesthetic of Quake's software renderer.

Unfortunately, I'm not very good at it. Even though I could probably complete the PC version's first level in my sleep these days, it took me three shameful attempts on the easiest difficulty level to get through it on the Saturn. The controls are somewhat awkward (for example, to aim up you need to hold X and press down on the d-pad), so I thought that a cheat code or two might help me along until I'd got to grips with them.

I found a list of cheats posted to a newsgroup by the game's developer, but most of them did not work with my copy of the game, and the few codes that did do something ended up performing the function of a different cheat. For example, invincibility ("Paul Mode") is toggled by highlighting "Customize Controls" then entering RLXYZRLXYZ, but on my copy of the game that toggled "Jevons-Control Mode" instead. These codes matched the ones on various cheat database sites across the Internet, so I was a bit puzzled until I found a forum post with a couple of codes that did work. This is an incomplete set, but it's clear that the PAL version of the game has different cheats to the NTSC-U version. Other sites either said that the PAL version doesn't have cheats at all, or that it is missing most of them due to being based on an older version of the engine.

One thing stuck out to me, though: all NTSC-U cheat codes follow the same basic formula of highlighting a particular menu item under "Options", entering a ten-button sequence using only the X, Y, Z, R and L buttons, and then seeing a confirmation message on the screen. I assumed that the PAL version would do something similar, and that some table of cheat codes and messages could be found in the executable. I popped the game CD into my PC's CD drive and copied the executable file to the hard disk so I could examine it in a hex editor.

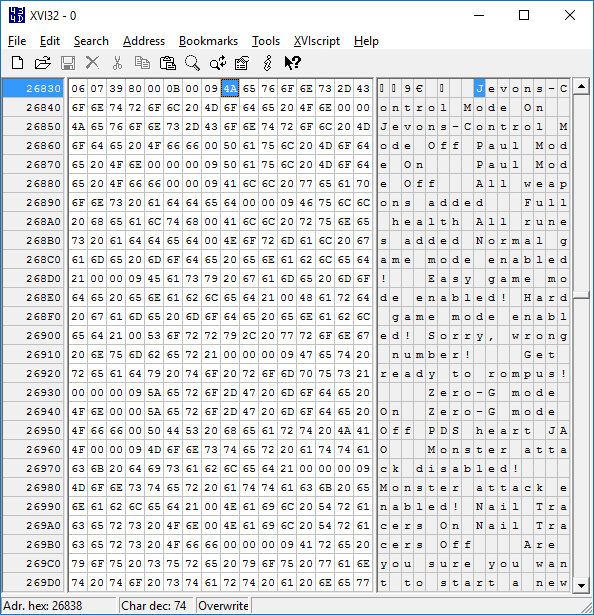

The first thing I did was search for "Jevons-Control", which turned up at the top of a list of other cheat-related messages such as "All weapons added" or "Nail Tracers On". That made me hopeful that the other cheat codes were present in the PAL version of the game. Some cheats in the NTSC-U version (such as those relating to rain or cluster bombs) didn't seem to have an equivalent message in this list, so these were presumably missing from the PAL version, but at least I knew I was on the right track.

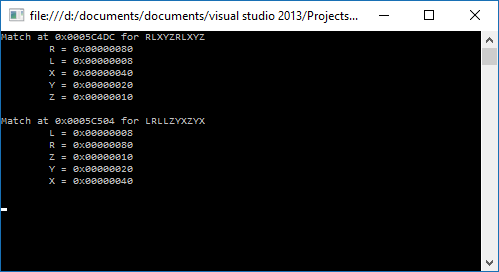

At this point, I had two button sequences that I knew worked: RLXYZRLXYZ and LRLLZYXZYX. I hoped that these codes might be found in the game's executable, but of course didn't know how they'd be represented. At first I assumed each code would be a ten-byte sequence, with one value for 'R', one value for 'L', another for 'X' and so on and so forth. As the two known cheats repeated buttons (for example, LRLLZYXZYX has L three times), it would be possible to see if a particular sequence of bytes followed the same pattern as the cheat code (for example, with LRLLZYXZYX the first, third and fourth bytes would all need to have the same value to represent 'L', and that value could not appear anywhere else in the ten-byte sequence). With that in mind I wrote a program that scanned through the entire binary from start to finish, checking to see if either of the two codes could be found. Neither could, so I changed the program to instead assume that each button's value would be stored as a sixteen-bit word. Still no luck, but as the Saturn is a 32-bit system, I again increased the size of each button in the sequence to try 32-bit integers and found a match for each of the two codes.
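The pattern test described above can be sketched in C roughly as follows. This is a hypothetical reconstruction rather than the original program: it assumes values are big-endian (the Saturn's SH-2 CPUs are big-endian), takes the element width (1, 2 or 4 bytes) as a parameter, and the names `find_code` and `matches_pattern` are made up for illustration. Only the *shape* of the code matters, so no button values need to be known in advance.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Read an unsigned big-endian value of `width` bytes (1, 2 or 4). */
static uint32_t read_be(const uint8_t *p, size_t width)
{
    uint32_t value = 0;
    for (size_t i = 0; i < width; i++)
        value = (value << 8) | p[i];
    return value;
}

/* Nonzero if the values at p follow the same pattern as `code`: positions
   sharing a letter hold equal values, and positions with different letters
   hold different values. The actual button values don't matter. */
static int matches_pattern(const uint8_t *p, size_t width, const char *code, size_t len)
{
    for (size_t i = 0; i < len; i++)
        for (size_t j = i + 1; j < len; j++) {
            int same_letter = code[i] == code[j];
            int same_value = read_be(p + i * width, width) == read_be(p + j * width, width);
            if (same_letter != same_value)
                return 0;
        }
    return 1;
}

/* Try every byte offset in the file; returns the first matching offset, or -1. */
static long find_code(const uint8_t *buf, size_t size, size_t width, const char *code)
{
    size_t len = strlen(code);
    size_t span = width * len;
    for (size_t off = 0; off + span <= size; off++)
        if (matches_pattern(buf + off, width, code, len))
            return (long)off;
    return -1;
}
```

Calling `find_code(exe, exe_size, 1, "LRLLZYXZYX")`, then again with a width of 2 and then 4, mirrors the three attempts described above.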

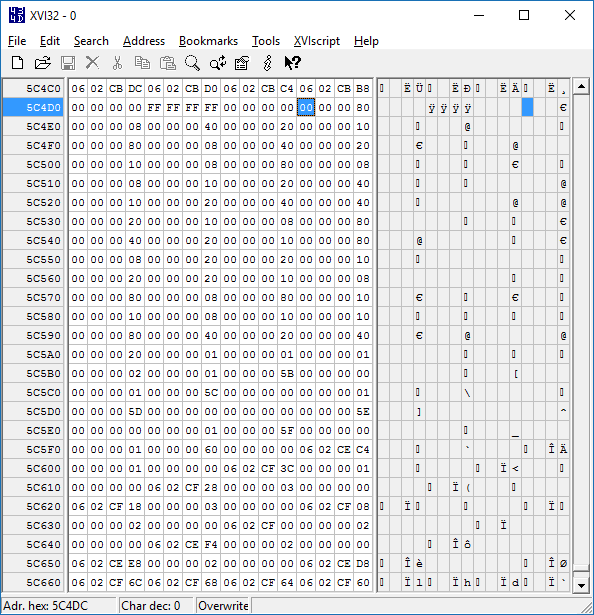

Not only were both sequences found, but both used the same values for the buttons (e.g. L is 0x00000008 in both sequences) and both were near each other in the binary. This seemed like the place to look, so I put the lower address value into the hex editor to see if there were other sequences nearby.

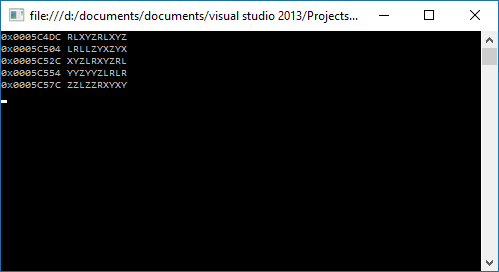

Now that I knew where the cheat codes were and how to map each value to a button name I could work through the binary, check to see if each sequence of ten 32-bit integers all matched known button values and if so output the sequence. This gave me three additional sequences for a total of five.
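Once the button values are known, this second pass is a plain table lookup. In the sketch below, only L = 0x00000008 comes from the executable. The values for R, X, Y and Z are hypothetical placeholders (one bit per button), to be replaced with the values read off the two known codes. The sketch also assumes that the codes sit on 4-byte boundaries, which the SH-2's alignment rules would normally lead to. After each hit it skips ahead, so that the same code isn't reported again at the next offset. The function names are illustrative.

```c
#include <stddef.h>
#include <stdint.h>

/* Only L = 0x00000008 is taken from the post; the other values are
   HYPOTHETICAL placeholders to be replaced with the observed ones. */
static const struct { uint32_t value; char name; } kButtons[] = {
    { 0x00000008u, 'L' },
    { 0x00000080u, 'R' },  /* hypothetical */
    { 0x00000040u, 'X' },  /* hypothetical */
    { 0x00000020u, 'Y' },  /* hypothetical */
    { 0x00000010u, 'Z' },  /* hypothetical */
};

/* Map a 32-bit value to its button letter, or 0 if it isn't a button. */
static char button_name(uint32_t value)
{
    for (size_t i = 0; i < sizeof kButtons / sizeof kButtons[0]; i++)
        if (kButtons[i].value == value)
            return kButtons[i].name;
    return 0;
}

static uint32_t read_be32(const uint8_t *p)
{
    return ((uint32_t)p[0] << 24) | ((uint32_t)p[1] << 16) |
           ((uint32_t)p[2] << 8) | (uint32_t)p[3];
}

/* Walk the binary in 4-byte steps. Wherever ten consecutive words are all
   button values, store them as a string such as "LRLLZYXZYX" and skip past
   them. Returns the number of codes found (at most max). */
static size_t scan_codes(const uint8_t *buf, size_t size, char codes[][11], size_t max)
{
    size_t found = 0;
    size_t off = 0;
    while (off + 40 <= size && found < max) {
        char code[11];
        size_t n = 0;
        while (n < 10 && (code[n] = button_name(read_be32(buf + off + 4 * n))) != 0)
            n++;
        if (n == 10) {
            for (size_t k = 0; k < 10; k++)
                codes[found][k] = code[k];
            codes[found][10] = '\0';
            found++;
            off += 40;   /* don't report the same code again at off + 4 */
        } else {
            off += 4;
        }
    }
    return found;
}
```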

Now I could take those five sequences, try them in the game and match them to the known NTSC-U sequences. Most of the cheat code sequences are used more than once, with their behaviour changing depending on which menu item was highlighted when they were entered. Comparing the effect of certain codes in the PAL version against what the NTSC-U version was known to do produces the following table:

| NTSC-U | PAL |
|---|---|
| RRLRXYZXYZ | RLXYZRLXYZ |
| RLXYZRLXYZ | LRLLZYXZYX |
| RXLZLRYLRY | XYZLRXYZRL |
| RYLYXYZXYZ | YYZYYZLRLR |
| RZLXYLRYLR | ZZLZZRXYXY |

I'm not sure why the PAL version uses different cheat code sequences, but it is an earlier version of the game and they are also quite a bit easier to enter on the console so maybe it was decided that players needed to work harder to take advantage of their cheat codes. There are a few other NTSC-U cheat code sequences that don't match up with the PAL version, but these are for cheats that seem to be missing equivalent strings (such as the previously mentioned rain or cluster bomb cheats) so I reckon they were not yet added to the PAL version.

For the sake of completeness, here is a list of cheats that work in the PAL version of Quake. All need to be entered by pausing the game, highlighting a particular item in the Options menu and then entering the cheat code as quickly as you can. Some cheats also require you to stand in a particular place in a map or to have collected certain items first; these are noted where appropriate.

| Name | Menu | Cheat | Requirements |
|---|---|---|---|
| Paul Mode (Invincibility) | Customize Controls | LRLLZYXZYX | |
| All Weapons | Customize Controls | XYZLRXYZRL | |
| Full Health | Customize Controls | YYZYYZLRLR | |
| All Runes | Customize Controls | ZZLZZRXYXY | Stand in the area where the first rune can be picked up in "The House of Chthon". |
| Jevons-Control Mode (3D Control Pad) | Customize Controls | RLXYZRLXYZ | |
| Level Select | Reset to Defaults | RLXYZRLXYZ | Either have all four runes or stand on the right-hand side of the flat part of the bridge over the lava in the "Entrance" level. |
| Restart Level | Reset to Defaults | LRLLZYXZYX | |
| Normal Difficulty | Music Volume | RLXYZRLXYZ | |
| Easy Difficulty | Music Volume | LRLLZYXZYX | |
| Hard Difficulty | Music Volume | XYZLRXYZRL | |
| Show Credits | Stereo | RLXYZRLXYZ | Stand on the right-hand side of the bridge under the round stained glass window in "Castle of the Damned". |
| Show Special Credits | Stereo | LRLLZYXZYX | Stand in the secret underwater cave containing the Megahealth and Nails in "Gloom Keep". |
| Quake Wrestling | Stereo | XYZLRXYZRL | Stand either at the Quad Damage in the secret area opened by jumping into the overhead light in "The Sewage System", or in the suspended cage halfway through "The Tower of Despair". |
| Zero-G Mode | Lookspring | RLXYZRLXYZ | |
| Monster Attack | Auto Targeting | RLXYZRLXYZ | |
| Nail Tracers | Auto Targeting | LRLLZYXZYX | |

If there are still people out there struggling through the PAL version of Quake on the Sega Saturn, maybe these cheat codes will come in handy!

Adding more stereoscopic modes to Quake II's OpenGL renderer

Thursday, 11th March 2010

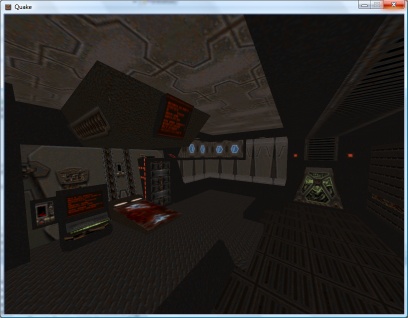

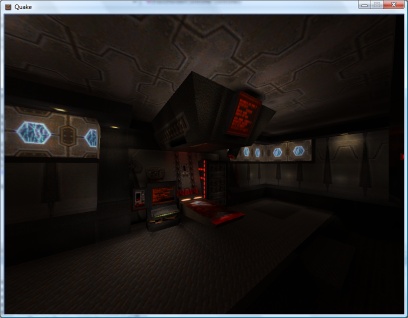

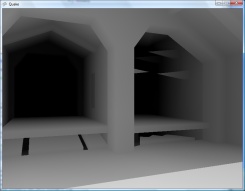

Quake II's OpenGL renderer supports stereoscopic rendering, provided you own a video card with the requisite hardware and driver support ("quad-buffered" OpenGL: rather than a single front and back buffer, you have two front buffers and two back buffers, one of each for each eye). Not owning such a video card, I decided to have a go at adding some other stereoscopic rendering modes that work with regular hardware.

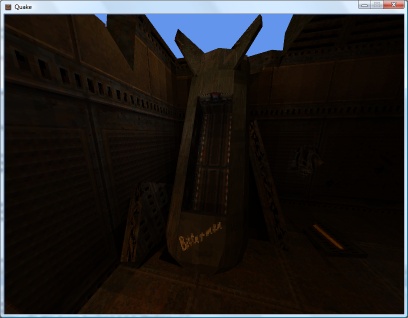

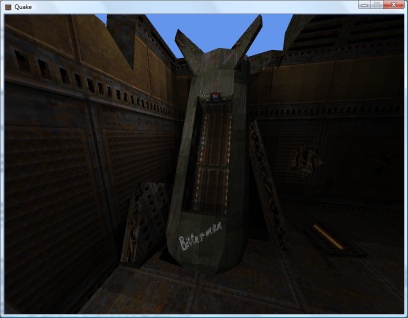

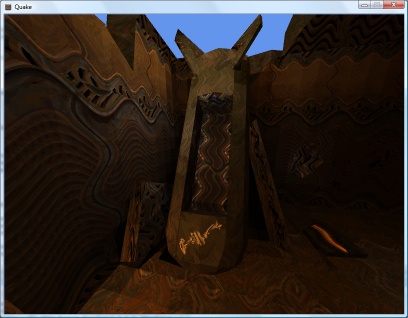

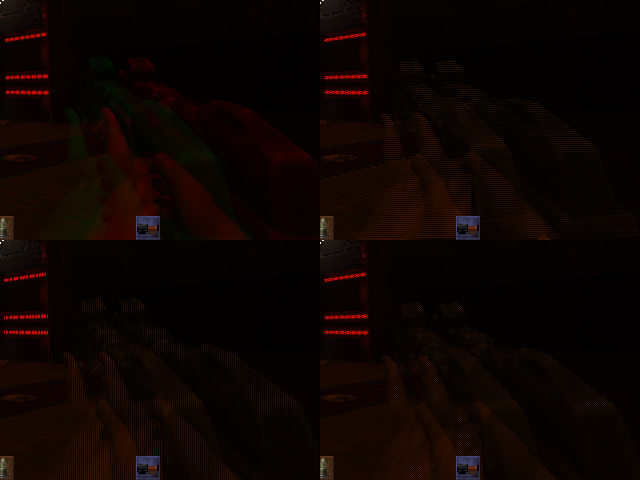

The four new stereoscopic rendering modes

The ability to enable or disable drawing to a particular colour component in OpenGL makes implementing an anaglyph mode very simple: temporarily switch off red when drawing the view from one eye, and temporarily switch off blue and green when drawing the view from the other, to produce a final image that can be used with red/cyan 3D glasses. A new string console variable, cl_stereo_anaglyph_colors, can be changed to match the colours of your particular glasses, e.g. mg for magenta/green.
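The anaglyph setting could be turned into per-eye channel masks along the lines shown below. The letter codes (r, g, b, c, m, y), the convention that the first letter is the left eye, and the function names are all assumptions made for illustration; only the `mg` example comes from the text. Each mask maps onto the first three arguments of OpenGL's `glColorMask()`. That call would be made before drawing each eye's view, with all channels re-enabled afterwards.

```c
#include <stdbool.h>

/* Which colour channels an eye's view is drawn with (red, green, blue
   arguments of glColorMask). */
typedef struct { bool red, green, blue; } ChannelMask;

/* Hypothetical letter codes: primaries plus cyan, magenta and yellow. */
static bool letter_to_mask(char c, ChannelMask *out)
{
    switch (c) {
    case 'r': *out = (ChannelMask){ true,  false, false }; return true;
    case 'g': *out = (ChannelMask){ false, true,  false }; return true;
    case 'b': *out = (ChannelMask){ false, false, true  }; return true;
    case 'c': *out = (ChannelMask){ false, true,  true  }; return true;
    case 'm': *out = (ChannelMask){ true,  false, true  }; return true;
    case 'y': *out = (ChannelMask){ true,  true,  false }; return true;
    default:  return false;
    }
}

/* Parse e.g. "rc" (red/cyan) or "mg" (magenta/green) into per-eye masks.
   Rejects anything that isn't exactly two known letters, and pairs whose
   filters share a channel (that channel would reach both eyes). */
static bool parse_anaglyph_colors(const char *s, ChannelMask *left, ChannelMask *right)
{
    if (!s || !s[0] || !s[1] || s[2])
        return false;
    if (!letter_to_mask(s[0], left) || !letter_to_mask(s[1], right))
        return false;
    return !((left->red && right->red) ||
             (left->green && right->green) ||
             (left->blue && right->blue));
}
```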

By drawing a mask to the stencil buffer before rendering one can easily add "interleaved" modes; there's the standard row interleaved format, but I've also added column and pixel interleaved formats.
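The interleave patterns themselves are simple parity tests on the pixel coordinates. For clarity, the sketch below builds the mask on the CPU (the names are hypothetical). In OpenGL, the same pattern would instead be drawn into the stencil buffer once. After that, one eye can be drawn with `glStencilFunc(GL_EQUAL, 1, 1)` and the other with `glStencilFunc(GL_NOTEQUAL, 1, 1)`.

```c
#include <stdbool.h>

typedef enum { INTERLEAVE_ROW, INTERLEAVE_COLUMN, INTERLEAVE_PIXEL } InterleaveMode;

/* True if pixel (x, y) belongs to the first eye's view. */
static bool first_eye_pixel(InterleaveMode mode, int x, int y)
{
    switch (mode) {
    case INTERLEAVE_ROW:    return (y & 1) == 0;
    case INTERLEAVE_COLUMN: return (x & 1) == 0;
    case INTERLEAVE_PIXEL:  return ((x ^ y) & 1) == 0;  /* checkerboard */
    }
    return false;
}

/* Fill a width * height mask: 1 = first eye, 0 = second eye. */
static void build_stencil_mask(InterleaveMode mode, int width, int height, unsigned char *mask)
{
    for (int y = 0; y < height; y++)
        for (int x = 0; x < width; x++)
            mask[y * width + x] = first_eye_pixel(mode, x, y) ? 1 : 0;
}
```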

It looks like the stereoscopic OpenGL code was started but not finished in Quake II; there were a number of odd bugs, such as the viewport position being changed instead of the camera position when drawing the left and right eye views (producing two views that were offset in 2D, not 3D). A snippet of code hints towards why this may be:

```c
#if 0 // commented out until H3D pays us the money they owe us
	GL_DrawStereoPattern();
#endif
```

H3D manufactured a VGA adaptor for 3D glasses which relied on a special pattern being displayed on the screen to enable it and switch it to the correct mode, rather than letting the user do so. H3D went bust toward the end of 1998, so I guess id Software never got their money and that function has been commented out ever since.

The new binaries can be downloaded from the Stereo Quake page, and the source can be found on Google Code.

Adding a stereoscopic renderer to Quake II

Sunday, 7th February 2010

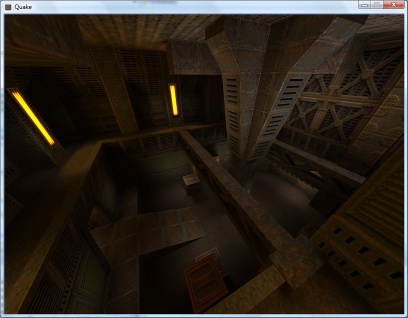

Having tweaked the stereoscopic rendering code in Quake, I decided to have a go at Quake II. This doesn't natively support row-interleaved stereoscopic rendering, but I thought that the shared code base of Quake and Quake II should make extending Quake II relatively simple.

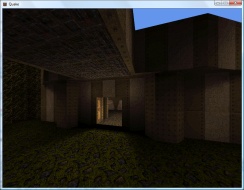

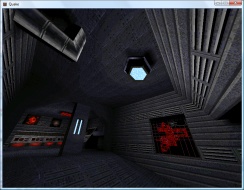

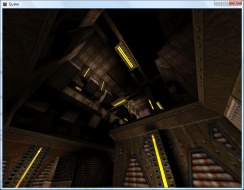

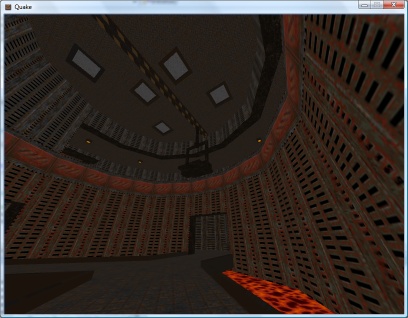

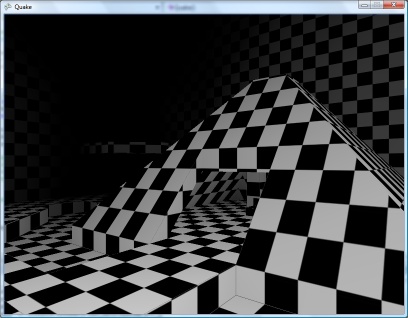

Quake II does have two console variables dedicated to stereoscopic rendering already, cl_stereo (enable/disable stereoscopic rendering) and cl_stereo_separation (controls the displacement of the camera between eyes; the same as LCD_X in Quake). These variables only seem to be used in the OpenGL renderer, though I haven't been able to get them to do anything meaningful – I have a hunch that you need a video card that supports stereoscopic rendering; these do exist, and have a socket on them for 3D glasses, but I'm having to make do with my DIY hardware. Furthermore, I've always found the OpenGL rendering in Quake and Quake II incredibly ugly, with blurry low-resolution textures (this is the reason I opted to emulate the software renderer when writing my own implementation of the Quake engine).

It turns out that Quake II does indeed render each frame twice with the camera offset when cl_stereo is switched on, but the software renderer doesn't do anything to blend the two views together. Using the same tricks as Quake – halving the height of the viewport, doubling the apparent stride of the render surface, shunting the address of the buffer down one scanline for one eye – seems to have worked, though finding out when exactly to carry out these steps hasn't been all that smooth. The particle rendering code still crashes with an access violation if called twice during a frame, but only in release mode. Fortunately, the entire software renderer has been written in both C and assembly, so I've switched to the C particle renderer instead of the assembly one for the time being, as that doesn't appear to be affected by the same bug.

A slightly more bothersome problem is the use of 8-bit DirectDraw modes for full-screen rendering. Unfortunately, Windows seems to like interfering with the palette, resulting in rather hideous colours. Typing vid_restart into the console a few times may eventually fix the issue, but it's far from an ideal solution. An alternative may be to rewrite the code to output 32-bit colour; this would also allow for coloured lighting. However, I don't think I'd be especially good at rewriting the reams of x86 assembly required to implement such a fix, and the C software renderer I previously mentioned results in a slightly choppy framerate at high resolutions.

An alternative would be to learn how to use Direct3D from C and rewrite the renderer entirely, taking advantage of hardware acceleration but this would seem like an equally daunting task. If anyone has any suggestions or recommendations I'd be interested to hear them!

Replacement binaries for Quake and Quake II can be downloaded from the project page; source code is available on Google Code.

3D glasses, a VGA line-blanker and fixing Quake

Wednesday, 3rd February 2010

Some time ago, I posted about using interlaced video to display 3D images. Whilst the idea works very nicely in theory, it's quite tricky to get modern video cards to generate interlaced video at a variety of resolutions and refresh rates. My card limits me to 1920×1080 at i30 or 1920×1080 at i25, and only lets me use this mode on my LCD when I really need it on a CRT. Even if you can coax the video card to switch to a particular mode, this is quite a fragile state of affairs as full-screen games will switch to a different (and likely progressively scanned) mode.

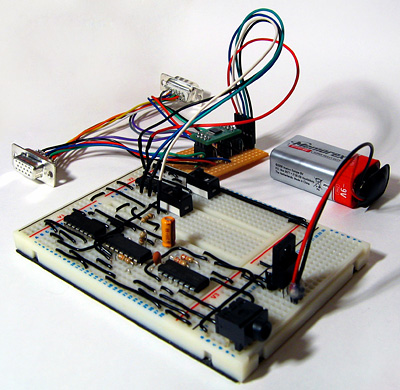

3D glasses adaptor with line blanker prototype

An alternative is to build an external bit of hardware that simulates an interlaced video mode from a progressive one. The easiest way of doing that is to switch off the RGB signals on alternate scanlines, blanking odd scanlines in one frame and even scanlines in the next. This type of circuit is appropriately named a line blanker, and my current implementation is shown above. It sits between the PC and the monitor, and uses a pair of flip-flops which toggle state on the vsync and hsync signals from the PC respectively. The output from the vsync flip-flop is used to control which eye is open and which is shut on the LCD glasses, and is also combined with the output of the hsync flip-flop to switch the RGB signal lines on or off on alternate lines using a THS7375 video amplifier. Unfortunately, this amplifier is only available in a TSSOP package, which isn't much fun to solder if you don't have the proper equipment; I made a stab at it with a regular iron, the smallest tip I could find, lots of no-clean flux and some solder braid. I have been informed that solder paste makes things considerably easier, so I will have to try that next time.
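The blanking logic can be modelled in a few lines of C. This is a behavioural sketch, not a transcription of the schematic, and it rests on two assumptions. First, the line flip-flop is reset at each vsync, so the pattern doesn't depend on whether a frame has an odd or even number of lines. Second, RGB passes through when the two flip-flops agree. The real circuit may combine the two signals differently. The names are hypothetical.

```c
#include <stdbool.h>

typedef struct {
    bool field;  /* vsync flip-flop: also selects which LCD shutter is open */
    bool line;   /* hsync flip-flop */
} LineBlanker;

/* New frame: flip the field and (assumption) reset the line flip-flop. */
static void on_vsync(LineBlanker *b) { b->field = !b->field; b->line = false; }

/* New scanline: flip the line flip-flop. */
static void on_hsync(LineBlanker *b) { b->line = !b->line; }

/* RGB is passed through only when the two flip-flops agree, so alternate
   lines are blanked, and the blanked set swaps every frame. */
static bool rgb_enabled(const LineBlanker *b) { return b->line == b->field; }
```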

My cheap LCD glasses lack any form of internal circuitry, merely offering two LCD panels wired directly to a 3.5mm stereo jack, so I'm driving them with an oscillator circuit built around a 4030 exclusive-OR gate.

The adaptor provides one switch to swap the left and right eyes in case they are reversed, and another is provided to disable the line blanking circuit (useful for genuine interlaced video modes or alternate frame 3D). You can download a schematic of the circuit here as a PDF.

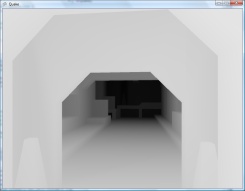

I've been using these glasses to play Quake in 3D, which is good fun but an experience that was sadly marred by a number of bugs and quirks in Quake's 3D mode.

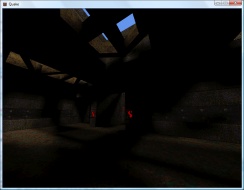

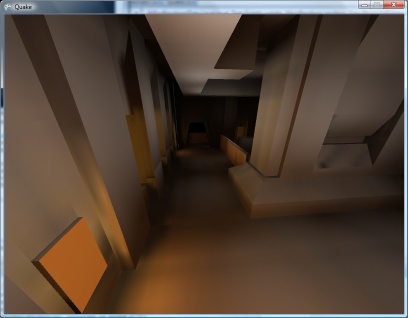

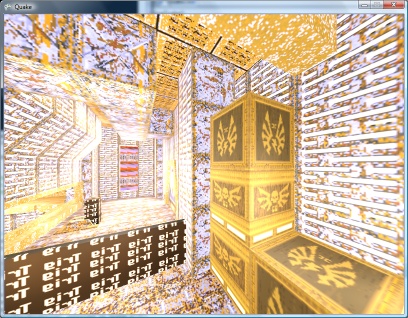

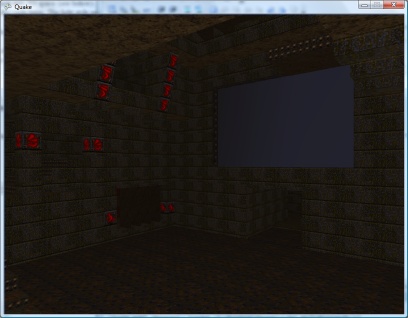

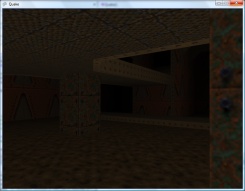

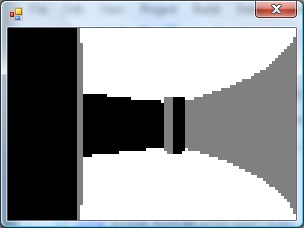

WinQuake, demonstrating the crosshair bug and excessive stereo separation of the weapon

The most obvious problems in the above screenshot are the migratory crosshair (appearing 25% of the way down the screen instead of vertically centred) and the excessive stereo separation of the player's weapon.

If the console variable LCD_X is non-zero, Quake halves the viewport height then doubles what it thinks is the stride of the graphics buffer. This causes it to skip every other scanline when rendering. Instead of rendering once, as normal, it translates the camera in one direction, renders, then offsets the start of the graphics buffer by one scanline, translates the camera in the other direction then renders again. This results in the two views (one for each eye) being interleaved into a single image.
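The interleaving trick can be shown with a toy frame buffer. The struct below is a simplified, hypothetical stand-in for Quake's `vid` structure. `render_view()` just fills the view with a marker value instead of drawing the scene, and the per-eye camera translation is omitted.

```c
#include <stddef.h>

/* Simplified stand-in for the renderer's idea of the frame buffer. */
typedef struct {
    unsigned char *buffer;  /* address of the first scanline to draw */
    int rowbytes;           /* stride: bytes from one scanline to the next */
    int width, height;
} ViewBuffer;

/* Stand-in for rendering the 3D view: marks every pixel with `eye`. */
static void render_view(const ViewBuffer *vb, unsigned char eye)
{
    for (int y = 0; y < vb->height; y++)
        for (int x = 0; x < vb->width; x++)
            vb->buffer[y * vb->rowbytes + x] = eye;
}

/* Halve the height, double the stride, and start the second eye one
   scanline further down, so the two views land on alternate lines. */
static void render_interleaved(unsigned char *frame, int width, int height, int rowbytes)
{
    ViewBuffer vb = { frame, rowbytes * 2, width, height / 2 };
    render_view(&vb, 'L');         /* camera shifted one way: even scanlines */
    vb.buffer = frame + rowbytes;  /* shunt down one scanline */
    render_view(&vb, 'R');         /* camera shifted the other way: odd scanlines */
}
```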

The crosshair is added after the 3D view is rendered (in fact, Quake just prints a '+' sign in the middle of the screen using its text routines), which explains its incorrect position – Quake doesn't take the previously halved height of the display into consideration, causing the crosshair to be drawn with a vertical position of half of half the height of the screen. That's pretty easy to fix – if LCD_X is non-zero, multiply all previously halved heights and Y offsets by two before rendering the crosshair to compensate.
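The crosshair fix amounts to undoing the halving before taking the midpoint. Here is a hypothetical helper, which assumes the stereo path has halved both the view height and its Y offset:

```c
/* Vertical position of the centred '+' crosshair. If stereo rendering has
   halved the view's height and Y offset, double them back first. */
static int crosshair_y(int view_y, int view_height, int stereo_active)
{
    if (stereo_active) {
        view_y *= 2;
        view_height *= 2;
    }
    return view_y + view_height / 2;
}
```

With a 480-line view halved to 240, the uncorrected calculation gives 120 (a quarter of the way down), while the corrected one gives 240.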

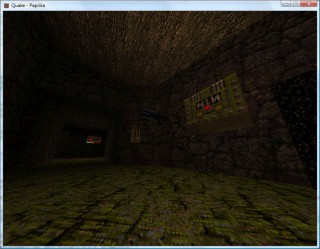

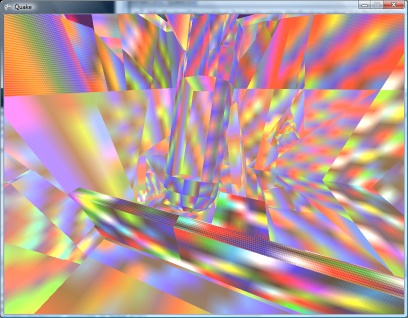

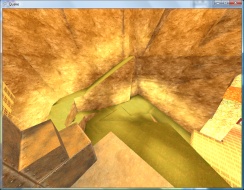

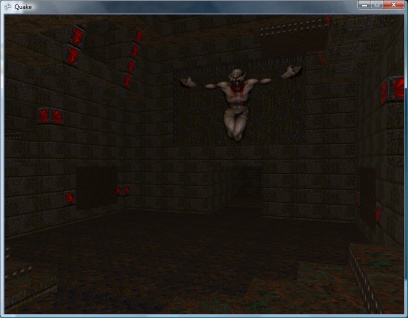

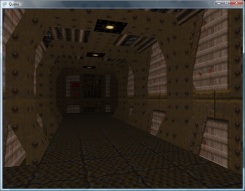

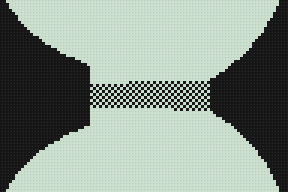

WinQuake, demonstrating the DirectDraw corruption bug

A slightly more serious bug is illustrated above: when using the DirectDraw renderer (the default in full-screen mode), the display is corrupted. This can be worked around by passing -dibonly to the engine, but it would be nicer to fix it properly.

After a bit of digging, it appeared that the vid structure, which stores fields such as the address of the graphics buffer and its stride, was being modified between calls to the renderer. It seemed to be reverting to the actual properties of the graphics buffer (i.e. it pointed to the top of the buffer and stored the correct stride of the image, not the doubled one). Further digging identified VID_LockBuffer() as the culprit; this does nothing if you're using the dib rendering mode, but locks the buffer and updates the vid structure in other access modes. Fortunately, you can call this function as many times as you like (as long as you call VID_UnlockBuffer() a corresponding number of times) – it only locks the surface and updates vid the first time you call it. By surrounding the entire 3D rendering routine in a VID_LockBuffer()…VID_UnlockBuffer() pair, vid is left well alone, and Quake renders correctly in full-screen once again.
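The extra `VID_LockBuffer()`…`VID_UnlockBuffer()` pair works because the lock is counted. Below is a hypothetical model of that behaviour. The names are not Quake's, and the comments mark where the surface lock and the `vid` update would happen.

```c
/* Only the outermost lock touches the surface and rewrites vid, and only
   the matching outermost unlock releases it. */
typedef struct {
    int lock_count;       /* current nesting depth */
    int vid_updates;      /* times the surface was locked and vid rewritten */
    int surface_unlocks;  /* times the surface was actually released */
} BufferLock;

static void lock_buffer(BufferLock *b)
{
    if (b->lock_count++ == 0)
        b->vid_updates++;      /* lock surface; copy its address and pitch into vid */
}

static void unlock_buffer(BufferLock *b)
{
    if (b->lock_count > 0 && --b->lock_count == 0)
        b->surface_unlocks++;  /* release the surface */
}
```

With an outer pair wrapped around both eyes' rendering, the inner lock/unlock calls made while drawing each eye only adjust the count, so the doubled stride and shifted buffer address stored in `vid` survive.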

The final issue was the extreme stereo separation of the player's weapon, caused by its proximity to the camera; it makes the game quite uncomfortable to play. The game moves the camera and weapon to the player's position, then applies some simple transformations to implement view/weapon bobbing, before rendering anything. Applying the same offset and rotation to the player's weapon as to the camera when generating the two 3D views put the weapon slap bang in the middle of the screen, as it would appear in regular "2D" Quake. This makes it look like a cardboard cutout, and can put it behind/"inside" walls and floors when you walk up to them. I've added a console variable, LCD_VIEWMODEL_SCALE, that can be used to interpolate between the default 3D WinQuake view (value: 1) and the cardboard cutout view (value: 0).
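One way to read the LCD_VIEWMODEL_SCALE interpolation is as a control over how far the weapon follows the camera's per-eye offset. This sketch assumes a linear blend of the translation only (rotation is left out), and its names are hypothetical.

```c
typedef struct { float x, y, z; } Vec3;

/* Weapon position for one eye. eye_offset is the displacement applied to
   the camera for this eye. scale = 1: the weapon stays at the player's
   position (stock 3D WinQuake, large separation). scale = 0: it moves with
   the camera (the "cardboard cutout" view). */
static Vec3 viewmodel_origin(Vec3 player_origin, Vec3 eye_offset, float scale)
{
    float t = 1.0f - scale;
    Vec3 r = { player_origin.x + eye_offset.x * t,
               player_origin.y + eye_offset.y * t,
               player_origin.z + eye_offset.z * t };
    return r;
}
```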

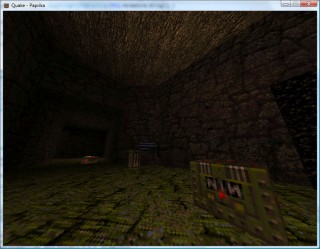

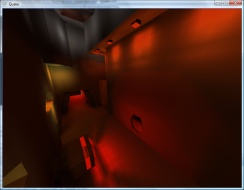

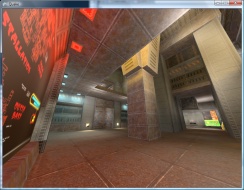

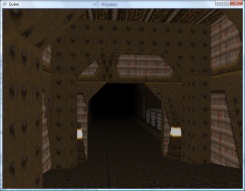

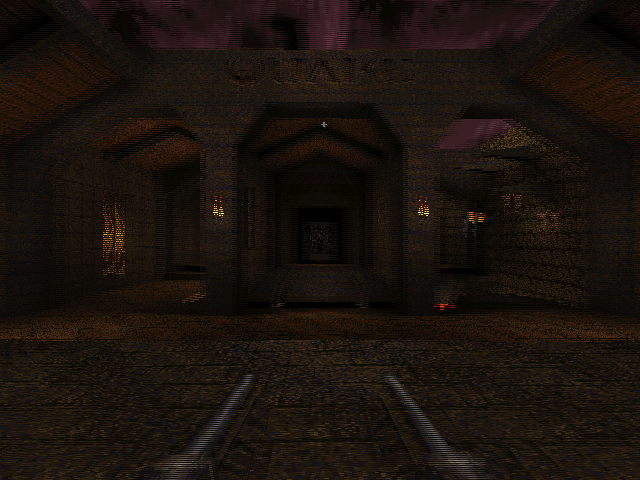

WinQuake with the 3D fixes applied

You can download the replacement WinQuake from here – you can just overwrite any existing executable. (You will also need the VC++ 2008 SP1 runtimes if you do not already have them.) Source code is included, and should build in VC++ 2008 SP1 (MASM only appears to be included in SP1, and it is required to compile Quake's extensive collection of assembly source files).

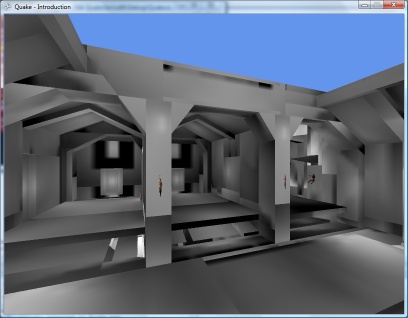

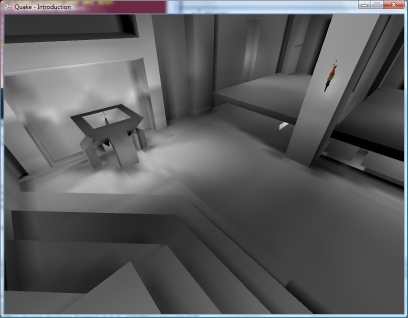

If you don't have a copy of Quake, I recorded its looping demos in 3D and uploaded them to YouTube. This was before I made the above fixes, so there's no crosshair or player weapon model in the videos – if you have access to YouTube-compatible 3D glasses or crossable eyes, click here.

Dynamic Lighting

Tuesday, 18th September 2007

Quake has a few dynamic lights - some projectiles, explosions and the fireballs light up their surroundings.

Fortunately, the method used is very simple: take the brightness of the projectile, divide it by the distance between the point on the wall and the light and add it to the light level for that point. This is made evident by pausing the early versions of Quake; when paused the areas around dynamic lights get brighter and brighter as the surface cache gets overwritten multiple times.

The next problem is that a light on the far side of a thin wall will light up both sides! Happily, this bug wasn't fixed in Quake, and is very evident in the start level where you can see the light spots from the fireballs in the Hard hall on the walls of the Medium hall.

I'd noticed that fireball-spawning entities would occasionally spawn a still fireball then remove it some seconds later. Looking over the Quake source code, it would appear that in each update the game iterates over all entities, checks their movetype property, then applies physics as appropriate. Fireballs don't seem to have any real physics to speak of (they can pass through walls) beyond having gravity added to their velocity each update.

This required some further changes to get the VM working, including console variable support (gravity is defined in the sv_gravity console variable, which allows for the special low-gravity level, Ziggurat Vertigo).

For some reason the pickups seem to have their movetype set to TOSS, resulting in all of the pickups flying away when the level started (not to mention abysmal performance). I added a hack to the droptofloor() QuakeC function that sets their on-floor flag (and hence disables their physics), but I'm not sure what the best course of action is going to be. I'm having to dig deeper and deeper into Quake's source now...
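The per-entity physics pass described in the last few paragraphs might look something like the C below. It is a cut-down, hypothetical sketch. Only two movetypes are handled, the enum values are not Quake's own numbers, and collisions are ignored entirely. The `on_ground` field stands in for Quake's FL_ONGROUND flag, as set by the droptofloor() hack.

```c
typedef enum { MOVETYPE_NONE, MOVETYPE_TOSS } MoveType;

typedef struct {
    MoveType movetype;
    int on_ground;        /* stands in for FL_ONGROUND */
    float origin[3];
    float velocity[3];
} Entity;

/* One physics update for every entity. Tossed entities that aren't resting
   on the floor gain downward velocity from sv_gravity, then move. There is
   no collision test here, much like the fireballs passing through walls. */
static void run_physics(Entity *ents, int count, float sv_gravity, float frametime)
{
    for (int i = 0; i < count; i++) {
        Entity *e = &ents[i];
        switch (e->movetype) {
        case MOVETYPE_TOSS:
            if (e->on_ground)
                break;   /* resting items (e.g. after droptofloor) don't move */
            e->velocity[2] -= sv_gravity * frametime;
            for (int k = 0; k < 3; k++)
                e->origin[k] += e->velocity[k] * frametime;
            break;
        case MOVETYPE_NONE:
            break;
        }
    }
}
```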

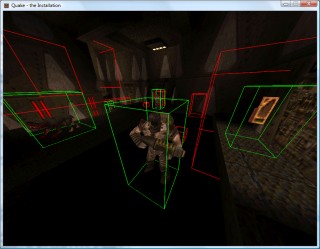

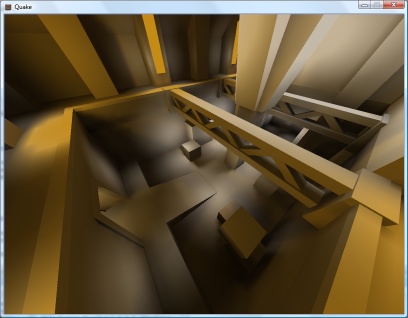

Bounding Box Bouncing

Friday, 14th September 2007

I've updated the collision detection between points and the world with Scet's idea of only testing with the faces in the leaf containing the start position of the point.

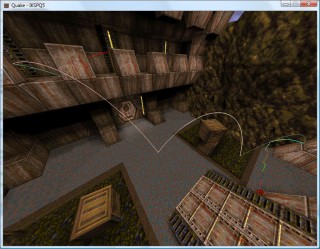

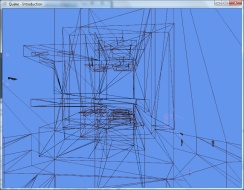

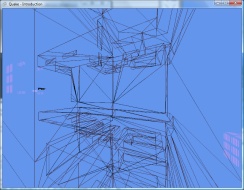

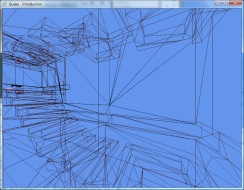

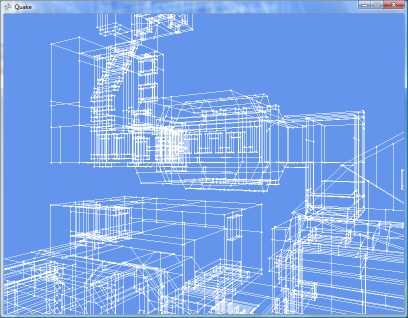

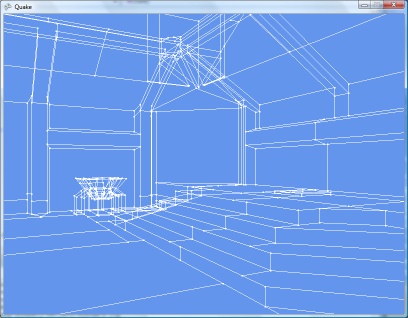

ZiggyWare's Simple Vector Rendering GameComponent has been very useful for testing the collision routines.

I have also extended the collision routines to bounding boxes. This fixes a few issues where a point could drop through a crack in the floor between two faces.

My technique is to test each of the eight corners of the box for a collision, then pick the shortest collision and use that as the resultant position. This results in a bounding box that is not solid; you can impale it on a spike, for example.
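The corner-based box test can be sketched as follows. For brevity, the world here is a list of infinite planes rather than faces, so this version can't reproduce the crack problem. A real face test also checks that the hit point lies inside the polygon, and that is where the gaps between faces come from. The names are hypothetical.

```c
typedef struct { float x, y, z; } Vec3;

/* Points p with dot(normal, p) == dist lie on the plane. */
typedef struct { Vec3 normal; float dist; } Plane;

static float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

/* Fraction (0..1) along start->end where the point passes from in front of
   the plane to behind it, or -1 if it doesn't. */
static float segment_hits_plane(const Plane *pl, Vec3 start, Vec3 end)
{
    float d0 = dot(pl->normal, start) - pl->dist;
    float d1 = dot(pl->normal, end) - pl->dist;
    if (d0 < 0.0f || d1 >= 0.0f)
        return -1.0f;
    return d0 / (d0 - d1);
}

/* Trace each of the box's eight corners along `move` and keep the shortest
   hit. Returns the fraction of the move that can be made (1 = no hit). */
static float trace_box(const Plane *planes, int nplanes, Vec3 origin,
                       Vec3 mins, Vec3 maxs, Vec3 move)
{
    float best = 1.0f;
    for (int c = 0; c < 8; c++) {
        Vec3 corner = { origin.x + ((c & 1) ? maxs.x : mins.x),
                        origin.y + ((c & 2) ? maxs.y : mins.y),
                        origin.z + ((c & 4) ? maxs.z : mins.z) };
        Vec3 end = { corner.x + move.x, corner.y + move.y, corner.z + move.z };
        for (int i = 0; i < nplanes; i++) {
            float f = segment_hits_plane(&planes[i], corner, end);
            if (f >= 0.0f && f < best)
                best = f;
        }
    }
    return best;
}
```

Dropping a 4-unit-tall box by 16 units, starting with its centre 10 units above a floor at z = 0, gives a hit at half the move, when the bottom corners reach the floor.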

In the above screenshot, the cube comes to rest with the corners all lined up on cracks, which means that it slips through as its base isn't solid.

Happily, object collisions are simple bounding-box affairs, the bounding boxes set with QuakeC code, which should simplify things somewhat.

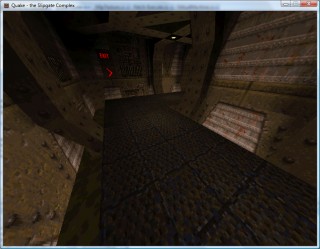

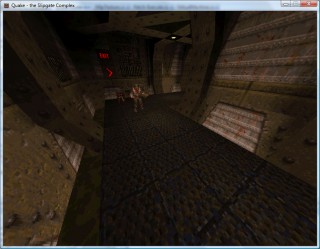

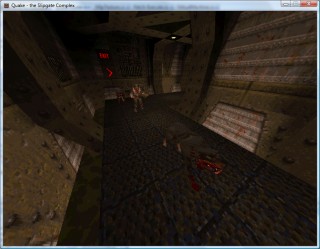

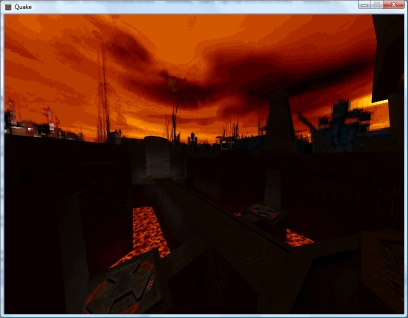

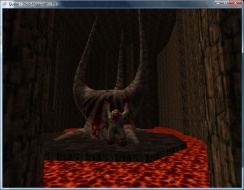

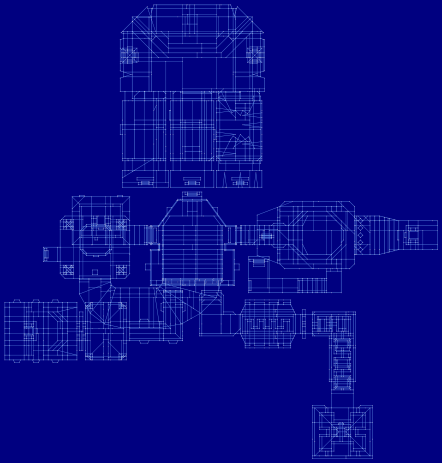

Goodness Gracious, Great Balls of Lava

Wednesday, 12th September 2007

I've reworked the VM completely to use an array of a union of a float and an int, rather than the MemoryStream kludge I was using before. This also removes a lot of the multiply-or-divide-by-four mess I had with converting indices to positions within the memory stream.
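Here is a C sketch of the idea, with hypothetical names. Memory is an array of 32-bit slots that can be read as either a float or an int, and operands are slot indices, so no byte offsets are involved. Quake's own interpreter likewise indexes its globals in 32-bit units. `op_add_f` mirrors QuakeC's floating-point add opcode.

```c
#include <stdint.h>

/* One 32-bit slot of QuakeC memory, readable as a float or an int. */
typedef union {
    float f;
    int32_t i;
} Slot;

/* A vector occupies three consecutive slots starting at `index`. */
static void store_vector(Slot *mem, int index, float x, float y, float z)
{
    mem[index].f = x;
    mem[index + 1].f = y;
    mem[index + 2].f = z;
}

/* c = a + b, with a, b and c given as slot indices (no multiply by four). */
static void op_add_f(Slot *mem, int a, int b, int c)
{
    mem[c].f = mem[a].f + mem[b].f;
}
```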

There are (as far as I know) three ways to invoke QuakeC methods. The first is when an entity is spawned, and this is only valid when the level is being loaded (the function that matches the entity's classname is called). The second is when an entity is touched (its touch function is called) and the third is when its internal timer, nextthink, fires (its think function is called).

The third is the easiest one to start with. On a monster-free level, there are still "thinking" items - every powerup item has droptofloor() scheduled to run.
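Running the think functions can be sketched as shown below. This is a simplified, hypothetical version in which the think function is a C function pointer rather than an index into progs.dat. As in Quake's server, `nextthink` is cleared before the call, so a think function can schedule itself again.

```c
typedef struct Entity Entity;
typedef void (*ThinkFunc)(Entity *self, float time);

struct Entity {
    float nextthink;  /* 0 = nothing scheduled */
    ThinkFunc think;
    int thinks_run;   /* bookkeeping for the example */
};

/* Hypothetical stand-in for a QuakeC think such as droptofloor(). */
static void example_think(Entity *self, float time)
{
    (void)time;
    self->thinks_run++;
}

/* Run every think function that is due at `time`. */
static void run_thinks(Entity *ents, int count, float time)
{
    for (int i = 0; i < count; i++) {
        Entity *e = &ents[i];
        if (e->nextthink <= 0.0f || e->nextthink > time)
            continue;
        e->nextthink = 0.0f;  /* cleared first so think can reschedule */
        if (e->think)
            e->think(e, time);
    }
}
```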

A strange feature of custom levels has been that all of the power-up items have been floating in mid-air.

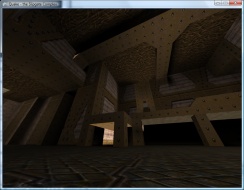

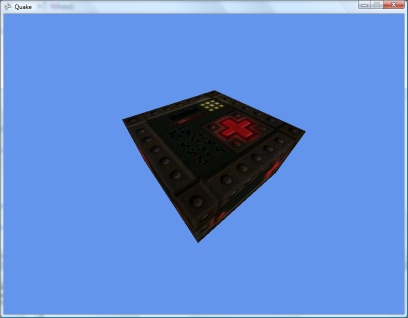

By hacking together some crude collision detection (face bounding boxes and axial planes only) I could make those objects sit in the right place:

With many thanks to Zipster for pointing me in the right direction, I have extended this to perform collision detection of a moving vertex against all world faces.

Here I fire a vertex (with a lava ball drawn around it to show where it is) through the world. When I hit a face I reflect the velocity by the face normal.

It looks much more realistic if I decrease the velocity z component over time (to simulate gravity) and reduce the magnitude of the velocity each time it hits a surface, so I can now bounce objects around the world:

Performing the collision detection against every single face in the world is not very efficient (though on modern hardware I'm still in the high nineties in frames per second). There are other problems to look into, such as collisions with invisible brushes used for triggers and collision rules when it comes to water and the sky, not to mention that I should really be performing bounding-box collision detection, not just testing single moving points. Points also need to slide off surfaces, not just stop dead in their tracks.

Once a vertex hits a plane and has been reflected I push it out of the surface very slightly to stop it from getting trapped inside the plane. This has the side effect of lifting the vertex a little above the floor, which it then drops back against, making it slide down sloping floors.
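The bounce behaviour from the last few paragraphs comes down to three small vector operations. The first reflects the velocity about the face normal using v' = v - 2(v·n)n. The second applies a loss of speed on each hit, and the third nudges the point out of the plane. The sketch assumes unit-length normals and uses a hypothetical `restitution` factor for the speed loss.

```c
typedef struct { float x, y, z; } Vec3;

static float dot3(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

/* Reflect velocity v about unit normal n, then scale by restitution (< 1)
   so that each bounce loses some speed. */
static Vec3 bounce_velocity(Vec3 v, Vec3 n, float restitution)
{
    float d = 2.0f * dot3(v, n);
    Vec3 r = { (v.x - d * n.x) * restitution,
               (v.y - d * n.y) * restitution,
               (v.z - d * n.z) * restitution };
    return r;
}

/* Nudge a point a small distance out along the normal after a hit, so it
   isn't left lying in the plane. */
static Vec3 push_out(Vec3 p, Vec3 n, float epsilon)
{
    Vec3 r = { p.x + n.x * epsilon, p.y + n.y * epsilon, p.z + n.z * epsilon };
    return r;
}

/* Simple gravity: reduce the z component of the velocity each step. */
static Vec3 apply_gravity(Vec3 v, float gravity, float dt)
{
    v.z -= gravity * dt;
    return v;
}
```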

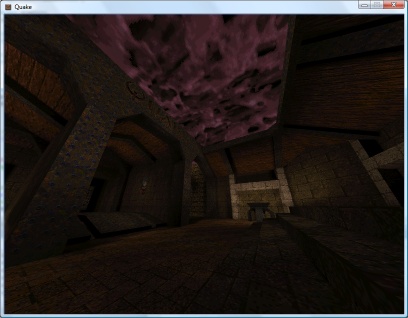

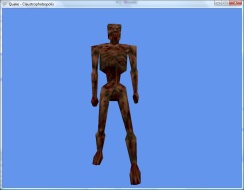

Entities Spawned by QuakeC Code

Thursday, 6th September 2007

After all that time spent trying to work out how the QuakeC VM works, I finally have some real-world results.

Apart from the obvious boring stuff going on in the background as far as parsing and loading entities go, two functions in particular are of note. The first is a native function, precache_model(string), which loads and caches a model of some description (sprites, Alias models or BSP models). The QuakeC VM I've written raises an event (passing event arguments containing the name of the model to load), which the XNA project can interpret and use to load a model into a format it's happy with.

With a suitable event handler and quick-and-dirty model renderer in place, the above models are precached (though they probably shouldn't be drawn at {0, 0, 0}).

The second function of interest is another native one, makestatic(entity). Quake supports three basic types of entity - dynamic entities (referenced by an index, move around and can be interacted with - ammo boxes, monsters and so on), temporary entities (which remove themselves from the game world automatically - point sprites) and static entities. Static entities are the easiest to handle - once spawned they stay fixed in one position, and can't be accessed (and hence can't be removed). Level decorations such as flaming torches are static. Here's the QuakeC code used to spawn a static small yellow flame:

```
void() light_flame_small_yellow =
{
	precache_model ("progs/flame2.mdl");
	setmodel (self, "progs/flame2.mdl");
	FireAmbient ();
	makestatic (self);
};
```

```csharp
// Handle precache models:
void Progs_PrecacheModelRequested(object sender, Quake.Files.QuakeC.PrecacheFileRequestedEventArgs e)
{
    switch (Path.GetExtension(e.Filename))
    {
        case ".mdl":
            if (CachedModels.ContainsKey(e.Filename)) return; // Already cached.
            CachedModels.Add(e.Filename, new Renderer.ModelRenderer(this.Resources.LoadObject<Quake.Files.Model>(e.Filename)));
            break;
        case ".bsp":
            if (CachedLevels.ContainsKey(e.Filename)) return; // Already cached.
            CachedLevels.Add(e.Filename, new Renderer.BspRenderer(this.Resources.LoadObject<Quake.Files.Level>(e.Filename)));
            break;
    }
}

// Spawn static entities:
void Progs_SpawnStaticEntity(object sender, Quake.Files.QuakeC.SpawnStaticEntityEventArgs e)
{
    // Grab the model name from the entity.
    string Model = e.Entity.Properties["model"].String;

    // Get the requisite renderer:
    Renderer.ModelRenderer Renderer;
    if (!CachedModels.TryGetValue(Model, out Renderer)) throw new InvalidOperationException("Model " + Model + " not cached.");

    // Add the entity's position to the renderer:
    Renderer.EntityPositions.Add(new Renderer.EntityPosition(e.Entity.Properties["origin"].Vector, e.Entity.Properties["angles"].Vector));
}
```

The result is a light sprinkling of static entities throughout the level.

As a temporary hack I just iterate over the entities, checking if each one is still active, and if so lumping them with the static entities.

If you look back a few weeks you'd notice that I already had a lot of this done. In the past, however, I was simply using a hard-coded entity name to model table and dumping entities any old how through the level. By parsing and executing progs.dat I don't have to hard-code anything, can animate models correctly, and even have the possibility of running the original game logic.

An example of how useful this is relates to level keys. In some levels you need one or two keys to get to the exit. Rather than use the same keys for each level, or use many different entity classes for keys, the worldspawn entity is assigned a type (Mediaeval, Metal or Base) and the matching key model is set automatically by the key spawning QuakeC function:

```
/*QUAKED item_key2 (0 .5 .8) (-16 -16 -24) (16 16 32)
GOLD key
In order for keys to work you MUST set your maps worldtype to one of the following:
0: medieval
1: metal
2: base
*/
void() item_key2 =
{
	if (world.worldtype == 0)
	{
		precache_model ("progs/w_g_key.mdl");
		setmodel (self, "progs/w_g_key.mdl");
		self.netname = "gold key";
	}
	if (world.worldtype == 1)
	{
		precache_model ("progs/m_g_key.mdl");
		setmodel (self, "progs/m_g_key.mdl");
		self.netname = "gold runekey";
	}
	if (world.worldtype == 2)
	{
		precache_model2 ("progs/b_g_key.mdl");
		setmodel (self, "progs/b_g_key.mdl");
		self.netname = "gold keycard";
	}
	key_setsounds();
	self.touch = key_touch;
	self.items = IT_KEY2;
	setsize (self, '-16 -16 -24', '16 16 32');
	StartItem ();
};
```

One problem is entities that appear at different skill levels. Generally higher skill levels have more monsters, but there are other level design concerns such as swapping a strong enemy for a weaker one in the easy skill mode. In deathmatch mode entities are also changed - keys are swapped for weapons, for example. At least monsters are kind - their spawn function checks the deathmatch global and they remove themselves automatically, so adding the (C#) line Progs.Globals["deathmatch"].Value.Boolean = true; flushes them out nicely.

Each entity, however, has a simple field attached - spawnflags - that can have bits set to inhibit the entity from spawning at the three different skill levels.
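The spawnflags check might be written as shown below. As far as I can tell, the bit values are the ones defined in the released Quake source (server.h), which also gives deathmatch a bit of its own; double-check them against your copy. The lower bits are left free for entity-specific options such as the lights' START_OFF. The function name is hypothetical.

```c
#include <stdbool.h>

#define SPAWNFLAG_NOT_EASY        256
#define SPAWNFLAG_NOT_MEDIUM      512
#define SPAWNFLAG_NOT_HARD       1024
#define SPAWNFLAG_NOT_DEATHMATCH 2048

/* True if an entity with these spawnflags should be removed instead of
   having its spawn function run. skill: 0 = easy, 1 = normal, 2+ = hard. */
static bool inhibit_spawn(int spawnflags, int skill, bool deathmatch)
{
    if (deathmatch)
        return (spawnflags & SPAWNFLAG_NOT_DEATHMATCH) != 0;
    if (skill == 0)
        return (spawnflags & SPAWNFLAG_NOT_EASY) != 0;
    if (skill == 1)
        return (spawnflags & SPAWNFLAG_NOT_MEDIUM) != 0;
    return (spawnflags & SPAWNFLAG_NOT_HARD) != 0;
}
```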

Regrettably, whilst the Quake 1 QuakeC interpreter source code is peppered with references to Quake 2 it would appear that Quake 2 used native code rather than QuakeC to provide gameplay logic, so I've dropped development on the Quake 2 side at the moment.

QuakeC

Monday, 3rd September 2007

This journal hasn't been updated for a while, I know. That doesn't mean that work on the Quake project has dried up - on the contrary, a fair amount of headway has been made!

The problem is that screenshots like the above are really not very interesting at all.

As far as I can tell, Quake's entity data (at runtime) is stored in a different chunk of memory from the memory used for global variables. I've had numerous problems getting the code to work - most of them caused by pointer confusion. Four bytes are generally used for a field (vectors use three singles, so they take 12 bytes), so I've tried multiplying and dividing offsets by four to get it all to work.
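The word/byte confusion can be illustrated with a small Python sketch. It assumes an entity's fields form a flat block of little-endian 32-bit words and that field offsets are counted in words, so they must be multiplied by four before being used as byte offsets; `read_float_field` and `read_vector_field` are illustrative names only.

```python
import struct

WORD_SIZE = 4  # every field slot is one 32-bit word


def read_float_field(entity_block, word_offset):
    """Read a float field; word_offset is in 4-byte units, not bytes."""
    (value,) = struct.unpack_from("<f", entity_block, word_offset * WORD_SIZE)
    return value


def read_vector_field(entity_block, word_offset):
    """A vector field spans three consecutive words (12 bytes)."""
    return struct.unpack_from("<3f", entity_block, word_offset * WORD_SIZE)
```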

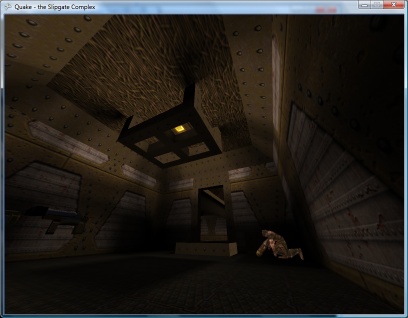

The basic entity data is stored in the .bsp file. It takes the following form:

```
{
"worldtype" "2"
"sounds" "6"
"classname" "worldspawn"
"wad" "gfx/base.wad"
"message" "the Slipgate Complex"
}
{
"classname" "info_player_start"
"origin" "480 -352 88"
"angle" "90"
}
{
"classname" "light"
"origin" "480 96 168"
"light" "250"
}
```

classname describes the type of the entity, and the other key-value pairs are used to adjust the entity's properties. All entities share the same set of fields, which are declared in a special section of the progs.dat file.

For each classname there is a matching QuakeC function. As far as I can tell the trick is to decode an entity then invoke its QuakeC method.

```
void() light =
{
    if (!self.targetname)
    {   // inert light
        remove(self);
        return;
    }
    if (self.style >= 32)
    {
        self.use = light_use;
        if (self.spawnflags & START_OFF)
            lightstyle(self.style, "a");
        else
            lightstyle(self.style, "m");
    }
};
```

As you can probably guess, if a light entity has no targetname (so nothing can switch it on or off) it is automatically removed from the world, as it serves no useful function (the lightmaps are prerendered, after all). A similar example comes from the monsters, which remove themselves if deathmatch is set. The QuakeC code also contains instructions for setting which model file to use for each entity and declares the animation frame sequences, so it is pretty important to get working.
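The decode-then-spawn step can be sketched in Python as follows. This is a simplified parser that assumes every token is either a brace or a double-quoted string (no comments or escape sequences), and `spawn_functions` is a hypothetical stand-in for looking up the QuakeC function named by each entity's classname.

```python
import re

# A token is either a brace or a double-quoted string (group 1 holds its contents).
TOKEN_RE = re.compile(r'[{}]|"([^"]*)"')


def parse_entities(text):
    """Parse an entity lump into a list of key/value dictionaries."""
    entities = []
    current = None
    key = None
    for match in TOKEN_RE.finditer(text):
        token = match.group(0)
        if token == "{":
            current, key = {}, None
        elif token == "}":
            entities.append(current)
            current = None
        elif key is None:
            key = match.group(1)  # first string of a pair is the key
        else:
            current[key] = match.group(1)
            key = None
    return entities


def spawn_all(entities, spawn_functions):
    """Call the spawn function matching each entity's classname.

    spawn_functions is a hypothetical dict standing in for the QuakeC
    function table. Entities with no matching function are returned.
    """
    unhandled = []
    for entity in entities:
        spawn = spawn_functions.get(entity.get("classname"))
        if spawn is None:
            unhandled.append(entity)
        else:
            spawn(entity)
    return unhandled
```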

I have a variety of directories stuffed with images on my website, and (thankfully) I have used a faintly sane naming convention for some of these. I knocked together a PHP script which reads the files from these directories and creates a nifty sort of gallery. It automatically generates thumbnails and is quite fast. As I don't have an internet connection at home it's more practical to be able to just drop files into a directory and have the thing automatically update rather than spend time updating a database.

PHP source code (requires GD).

It's INI file driven. The two files are config.ini:

```ini
[Site]
base_dir=../../ ; Base directory of the site
; I put this in bin/gallery, so need to go up two levels :)

[Files]
valid_extensions=jpg,gif,png ; Only three that are supported

[Thumbnails]
max_width=320
max_height=256
quality=90 ; Thumbnail JPEG quality
```

...and galleries.ini:

```ini
[VB6 Terrain]
key=te ; Used in gallery=xyz parameter
path=projects/te ; Image location, relative to base_dir
date_format=ddmmyyyyi ; Filename format, where i = index.

[MDX DOOM]
key=doom
path=images/doom
ignore_extensions=jpg ; Can be used to ignore extensions.
date_format=yyyy.mm.dd.ii
```

The index in the date format is for files that fall on the same date. Historically I used a single letter (01012003a, 01012003b); currently I use a two-digit integer (2003.01.01.01).
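One way to match file names against these `date_format` strings, sketched in Python. The PHP script isn't reproduced here, so the rules below are an assumption: `yyyy`, `mm` and `dd` are date fields, each `i` is one index character (letter or digit), and anything else is matched literally.

```python
import re
from datetime import date


def format_to_regex(date_format):
    """Turn a date_format such as 'yyyy.mm.dd.ii' into a compiled regex."""
    pattern = ""
    pos = 0
    while pos < len(date_format):
        if date_format.startswith("yyyy", pos):
            pattern += r"(?P<year>\d{4})"
            pos += 4
        elif date_format.startswith("mm", pos):
            pattern += r"(?P<month>\d{2})"
            pos += 2
        elif date_format.startswith("dd", pos):
            pattern += r"(?P<day>\d{2})"
            pos += 2
        elif date_format[pos] == "i":
            # A run of i's is the index; one character per i.
            end = pos
            while end < len(date_format) and date_format[end] == "i":
                end += 1
            pattern += r"(?P<index>[0-9a-z]{%d})" % (end - pos)
            pos = end
        else:
            pattern += re.escape(date_format[pos])
            pos += 1
    return re.compile(pattern + "$")


def parse_image_name(stem, date_format):
    """Return (date, index) for a file name stem, or None if it doesn't match."""
    match = format_to_regex(date_format).match(stem)
    if match is None:
        return None
    day = date(int(match["year"]), int(match["month"]), int(match["day"]))
    return day, match["index"]
```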

If a text file with the name of the image + ".txt" is found, it is used as a caption (e.g. /images/quake/2003.01.01.01.png and /images/quake/2003.01.01.01.png.txt).

It's not designed to be bullet-proof (and was written very, very quickly) but someone might find it a useful base to work on.

8-bit Raycasting Quake Skies and Animated Textures

Monday, 20th August 2007

All of this Quake and XNA 3D stuff has given me a few ideas for calculator (TI-83) 3D.

One of my problems with calculator 3D apps is that I have never managed to even get a raycaster working. Raycasters aren't exactly very tricky things to write.

So, to help me, I wrote a raycaster in C#, limiting myself to the constraints of the calculator engine - 96×64 display, 256 whole angles in a full revolution, 16×16 map, that sort of thing. This was easy as I had floating-point maths to fall back on.

With that done, I went and ripped out all of the floating-point code and replaced it with fixed-point integer arithmetic; I'm using 16-bit values, 8 bits for the whole part and 8 bits for the fractional part.
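The 8.8 arithmetic can be modelled in Python before committing it to Z80 assembly. This sketch wraps results to 16 bits the way a register pair would. Note that Python's `>>` and `//` round towards negative infinity, whereas a hand-written Z80 division routine may truncate towards zero, so negative results could differ by one unit. The function names are illustrative.

```python
FRAC_BITS = 8
ONE = 1 << FRAC_BITS  # 1.0 in 8.8 fixed point (256)


def wrap16(raw):
    """Wrap an integer into the signed 16-bit range, as a register pair would."""
    raw &= 0xFFFF
    return raw - 0x10000 if raw & 0x8000 else raw


def to_fixed(value):
    """Convert a float to 8.8 fixed point (8 whole bits, 8 fractional bits)."""
    return wrap16(int(round(value * ONE)))


def to_float(value):
    return value / ONE


def fixed_mul(a, b):
    """The raw product has 16 fractional bits, so shift 8 of them back out."""
    return wrap16((a * b) >> FRAC_BITS)


def fixed_div(a, b):
    """Pre-shift the dividend so the quotient keeps 8 fractional bits."""
    return wrap16((a << FRAC_BITS) // b)
```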

From here, I just rewrote all of my C# code in Z80 assembly, chucking in debugging code all the way through so that I could watch the state of values and compare them with the results from my C# code.

The result is rather slow, but on the plus side the code is clean and simple.

The screen is cropped for three reasons: it's faster to only render 64 columns (naturally), you get some space to put a HUD and - most importantly - it limits the FOV to 90°, as the classic fisheye distortion becomes a more obvious problem above this.
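A floating-point sketch of the kind of raycaster described above (a grid DDA), using 256 angles per turn and 64 columns; with a 90° field of view that works out to exactly one whole angle per column. The map layout, the `#` wall character and the function names are assumptions for illustration, and the map must be enclosed by walls so that every ray terminates.

```python
import math

ANGLES = 256       # whole angles in one full revolution
COLUMNS = 64       # rendered screen columns
FOV = ANGLES // 4  # 90 degrees, expressed in 256ths of a turn


def cast_ray(grid, x, y, angle):
    """Walk grid cells (DDA) until a '#' wall is hit; return the distance along the ray."""
    radians = angle * 2 * math.pi / ANGLES
    dx, dy = math.cos(radians), math.sin(radians)
    cell_x, cell_y = int(x), int(y)
    step_x = 1 if dx > 0 else -1
    step_y = 1 if dy > 0 else -1
    # Ray length needed to cross one whole cell horizontally / vertically.
    delta_x = abs(1 / dx) if dx != 0 else math.inf
    delta_y = abs(1 / dy) if dy != 0 else math.inf
    # Ray length to the first vertical / horizontal grid line.
    if dx > 0:
        side_x = (cell_x + 1 - x) * delta_x
    elif dx < 0:
        side_x = (x - cell_x) * delta_x
    else:
        side_x = math.inf
    if dy > 0:
        side_y = (cell_y + 1 - y) * delta_y
    elif dy < 0:
        side_y = (y - cell_y) * delta_y
    else:
        side_y = math.inf
    while True:
        # Advance to whichever grid line is nearer along the ray.
        if side_x < side_y:
            distance = side_x
            side_x += delta_x
            cell_x += step_x
        else:
            distance = side_y
            side_y += delta_y
            cell_y += step_y
        if grid[cell_y][cell_x] == "#":
            return distance


def render_columns(grid, x, y, facing):
    """Perpendicular wall distance for each column (fisheye-corrected)."""
    distances = []
    for column in range(COLUMNS):
        offset = column * FOV // COLUMNS - FOV // 2  # angle relative to the view direction
        raw = cast_ray(grid, x, y, (facing + offset) % ANGLES)
        # Scaling by cos(offset) removes the fisheye distortion.
        distances.append(raw * math.cos(offset * 2 * math.pi / ANGLES))
    return distances
```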

I sneaked a look at the source code of Gemini, an advanced raycaster featuring textured walls, objects and doors. It is much, much faster than my engine, even though it does a lot more!

It appears that the basic raycasting algorithm is pretty much identical to the one I use, but gets away with 8-bit fixed point values. 8-bit operations can be done significantly faster than 16-bit ones on the Z80, especially multiplications and divisions (which need to be implemented in software). You can also keep track of more variables in registers, and restricting the number of memory reads and writes can shave off some precious cycles.

Some ideas I've had for the raycaster that I'd like to try to implement:

- Variable height floors and ceilings. Each block in the world is given a floor and ceiling height. When the ray intersects the boundary, the camera height is subtracted from these values, they are divided by the length of the ray (for projection) and the visible section of the wall is drawn. Two counters would keep track of the upper and lower rows drawn so far, recording the last block's extent (for occlusion), and floor/ceiling colours could be filled between blocks.

- No texturing: wall faces and floors/ceilings would be assigned dithered shades of grey. I think this, combined with lighting effects (flickering, shading), would look better than monochrome texture mapping - and would be faster!

- Ray-transforming blocks. For example, you could have two 16×16 maps with a tunnel: the tunnel would contain a special block that would, when hit, tell the raycaster to start scanning through a different level. This could be used to stitch together large worlds from small maps (16×16 is a good value as it lets you reduce level pointers to 8-bit values).

- Adjusting floors and ceilings for lifts or crushing ceilings.
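The variable-height idea in the first bullet can be sketched for a single screen column. This is a hypothetical Python illustration, not calculator code: `hits` lists the block boundaries a ray crosses (nearest first) as `(distance, floor, ceiling)`, a solid wall is a block whose floor equals its ceiling, and the `top`/`bottom` counters shrink the still-open window so that farther walls are clipped. Floor and ceiling fills are left out.

```python
def column_spans(hits, camera_height, scale, screen_height):
    """Return (first_row, end_row) spans of wall to draw in one column.

    Rows increase downwards; heights above the camera project above the horizon.
    """
    horizon = screen_height // 2
    top, bottom = 0, screen_height  # rows top..bottom-1 are still open
    spans = []
    for distance, floor, ceiling in hits:
        if top >= bottom:
            break  # the column is fully covered
        # Project the block's ceiling and floor (relative to the camera) onto the screen.
        ceiling_row = horizon - int((ceiling - camera_height) * scale / distance)
        floor_row = horizon - int((floor - camera_height) * scale / distance)
        # Upper wall: from the top of the open window down to this block's ceiling.
        if ceiling_row > top:
            end = min(ceiling_row, bottom)
            spans.append((top, end))
            top = end
        # Lower wall: from this block's floor down to the bottom of the open window.
        if floor_row < bottom and top < bottom:
            start = max(floor_row, top)
            spans.append((start, bottom))
            bottom = start
    return spans
```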

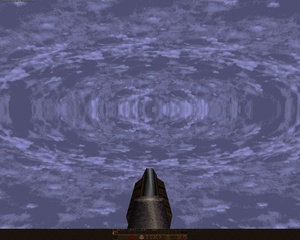

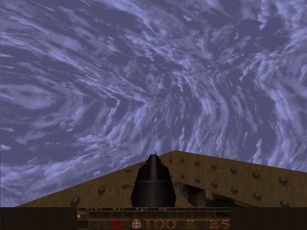

As far as the Quake project goes, I've made a little progress. I've added skybox support for Quake 2.

Quake 2's skyboxes are simply made up of six textures (top, bottom, front, back, left, right). Quake 1 doesn't use a skybox. Instead, its sky texture is split into two halves - one half is the sky background, and the other half is a cloud overlay (the two layers scroll at different speeds). It is also warped in a rather interesting fashion - rather like a squashed sphere, reflected in the horizon:

For the moment, I'm just using the Quake 2 box plus a simple pixel shader to mix the two halves of the sky texture.

I daresay something could be worked out to simulate the warping.

The above is from GLQuake, which doesn't really look very convincing at all.
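For comparison, a CPU-side Python sketch of compositing the two sky layers. It assumes the layout GLQuake's sky setup appears to use (cloud layer in the left half, background in the right half, palette index 0 transparent in the cloud layer) and, for simplicity, scrolls horizontally only, in whole texels. `compose_sky` is an illustrative name.

```python
def compose_sky(sky, back_scroll, front_scroll):
    """Combine the two halves of a sky texture (rows of palette indices) into one frame."""
    half = len(sky[0]) // 2
    frame = []
    for row in sky:
        out = []
        for x in range(half):
            front = row[(x + front_scroll) % half]        # cloud layer (left half)
            back = row[half + (x + back_scroll) % half]   # background (right half)
            # Index 0 in the cloud layer lets the background show through.
            out.append(front if front != 0 else back)
        frame.append(out)
    return frame
```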

I've reimplemented the texture animation system in the new BSP renderer, including support for Quake 2's animation system (which is much simpler than Quake 1's - rather than have magic texture names, all textures contain the name of the next frame in their animation cycle).
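A Python sketch contrasting the two schemes. For Quake 1 it assumes the usual naming convention, where `+0name` to `+9name` form the main cycle (`+aname` onwards form an alternate cycle, ignored here); for Quake 2 it follows each texture's next-frame name until the chain loops back. Both function names are illustrative.

```python
def quake1_sequence(names, base):
    """Frames of a Quake 1 animated texture, ordered by the digit after '+'."""
    frames = [n for n in names
              if len(n) > 2 and n[0] == "+" and n[1].isdigit() and n[2:] == base]
    return sorted(frames, key=lambda n: int(n[1]))


def quake2_sequence(next_frame, start):
    """Follow Quake 2's next-frame links until a frame repeats or the chain ends."""
    frames = [start]
    current = next_frame.get(start)
    while current and current not in frames:
        frames.append(current)
        current = next_frame.get(current)
    return frames
```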

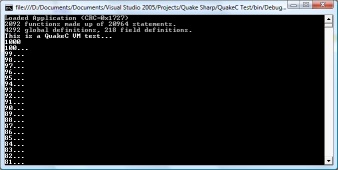

QuakeC VM

Wednesday, 15th August 2007

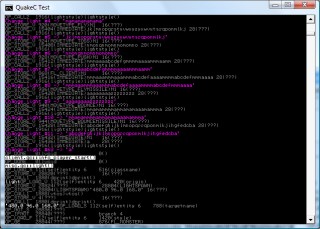

I've started serious work on the QuakeC virtual machine.

The bytecode is stored in a single file, progs.dat. It is made up of a number of different sections:

- Definitions data - an unformatted block of data containing a mixture of floating point values, integers and vectors.

- Statements - individual instructions, each made up of four short integers. Each statement has an operation code and up to three arguments. These arguments are typically pointers into the definitions data block.

- Functions - these provide a function name, a source file name, storage requirements for local variables and the address of the first statement.

On top of that are two tables that break down the definitions table into global and field variables (as far as I'm aware this is only used to print "nice" names for variables when debugging, as it just attaches a type and name to each definition) and a string table.

The first few values in the definition data table are used for predefined values, such as function parameters and return value storage.
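Reading the statements section might look like this in Python. The record layout is an assumption based on Quake's `dstatement_t`: an unsigned 16-bit opcode followed by three signed 16-bit operands, little-endian, eight bytes per statement.

```python
import struct

# Assumed statement record: opcode (unsigned short) + three operands (signed shorts).
STATEMENT = struct.Struct("<Hhhh")


def read_statements(data, count, offset=0):
    """Decode `count` statements as (op, a, b, c) tuples starting at `offset`."""
    return [STATEMENT.unpack_from(data, offset + i * STATEMENT.size)
            for i in range(count)]
```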

Now, a slight problem is how to handle these variables. My initial solution was to read and write types strictly as particular types using the definitions table, but this idea got scrapped when I realised that the QuakeC bytecode uses the vector store opcode to copy string pointers, and a vector isn't much use when you need to print a string.

I now use a special VariablePointer class that internally stores the pointer inside the definition data block, and provides properties for reading and writing using the different formats.

```csharp
/// <summary>Defines a variable.</summary>
public class VariablePointer
{
    private readonly uint Offset;
    private readonly QuakeC Source;

    /// <summary>Seeks the definitions data stream to this variable's offset.</summary>
    /// <remarks>The reader and writer are expected to share the same underlying stream.</remarks>
    private void SetStreamPos()
    {
        this.Source.DefinitionsDataReader.BaseStream.Seek(this.Offset, SeekOrigin.Begin);
    }

    public VariablePointer(QuakeC source, uint offset)
    {
        this.Source = source;
        this.Offset = offset;
    }

    #region Read/Write Properties

    /// <summary>Gets or sets a floating-point value.</summary>
    public float Float
    {
        get { this.SetStreamPos(); return this.Source.DefinitionsDataReader.ReadSingle(); }
        set { this.SetStreamPos(); this.Source.DefinitionsDataWriter.Write(value); }
    }

    /// <summary>Gets or sets an integer value.</summary>
    public int Integer
    {
        get { this.SetStreamPos(); return this.Source.DefinitionsDataReader.ReadInt32(); }
        set { this.SetStreamPos(); this.Source.DefinitionsDataWriter.Write(value); }
    }

    /// <summary>Gets or sets a vector value.</summary>
    public Vector3 Vector
    {
        get { this.SetStreamPos(); return new Vector3(this.Source.DefinitionsDataReader.BaseStream); }
        set
        {
            this.SetStreamPos();
            this.Source.DefinitionsDataWriter.Write(value.X);
            this.Source.DefinitionsDataWriter.Write(value.Y);
            this.Source.DefinitionsDataWriter.Write(value.Z);
        }
    }

    #endregion

    #region Extended Properties

    /// <summary>Gets or sets a Boolean value, stored as a float (0 is false).</summary>
    public bool Boolean
    {
        get { return this.Float != 0f; }
        set { this.Float = value ? 1f : 0f; }
    }

    #endregion

    #region Read-Only Properties

    /// <summary>Gets a string value.</summary>
    public string String
    {
        get { return this.Source.GetString((uint)this.Integer); }
    }

    /// <summary>Gets a function reference.</summary>
    public Function Function
    {
        get { return this.Source.Functions[this.Integer]; }
    }

    #endregion
}
```
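The same idea in compact Python form: the bytes carry no type information, so a store that copies 12 raw bytes (as the vector store opcode does) moves a string offset intact, while reading those bytes as a float gives nonsense. `VariableSlot` and `copy_vector` are illustrative names, not part of the project.

```python
import struct


class VariableSlot:
    """Four bytes of definitions data that the caller may read as any type."""

    def __init__(self, data, offset):
        self.data = data      # a bytearray holding the definitions data
        self.offset = offset  # byte offset of this slot

    @property
    def as_float(self):
        return struct.unpack_from("<f", self.data, self.offset)[0]

    @as_float.setter
    def as_float(self, value):
        struct.pack_into("<f", self.data, self.offset, value)

    @property
    def as_int(self):
        return struct.unpack_from("<i", self.data, self.offset)[0]

    @as_int.setter
    def as_int(self, value):
        struct.pack_into("<i", self.data, self.offset, value)


def copy_vector(data, source, destination):
    """Copy 12 raw bytes, as a vector store does, without interpreting them."""
    data[destination:destination + 12] = data[source:source + 12]
```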

If a function's first statement index is negative, the function is an internally-implemented (built-in) one rather than QuakeC bytecode. The source code for the test application in the screenshot at the top of this entry is as follows:

```
float testVal;

void() test =
{
    dprint("This is a QuakeC VM test...\n");
    testVal = 100;
    dprint(ftos(testVal * 10));
    dprint("\n");
    while (testVal > 0)
    {
        dprint(ftos(testVal));
        testVal = testVal - 1;
        dprint("...\n");
    }
    dprint("Lift off!");
};
```
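The negative-index rule can be sketched as a small dispatcher. This is a hypothetical Python illustration: functions are plain dictionaries with a `first_statement` key, builtin number *n* is assumed to be stored as `first_statement == -n`, and `run_bytecode` stands in for the interpreter loop.

```python
def call_function(function, builtins, run_bytecode, vm):
    """Run a QuakeC function, dispatching to a builtin if its first statement is negative."""
    first = function["first_statement"]
    if first < 0:
        # Builtin number n is stored as a first statement of -n.
        return builtins[-first](vm)
    # Otherwise start interpreting bytecode at that statement index.
    return run_bytecode(first, vm)
```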

There's a huge amount of work to be done here, especially when it comes to entities (not something I've looked at at all). All I can say is that I'm very thankful that the .qc source code is available and the DOS compiler runs happily under Windows - they're going to be handy for testing.

Quake 2 PVS, Realigned Lightmaps and Colour Lightmaps

Friday, 10th August 2007

Quake 2 stores its visibility lists differently to Quake 1 - as close leaves on the BSP tree will usually share the same visibility information, the lists are grouped into clusters (Quake 1 stored a visibility list for every leaf). Rather than go from the camera's leaf to find all of the other visible leaves directly, you need to use the leaf's cluster index to look up which other clusters are visible, then search through the other leaves to find out which reference that cluster too.
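A Python sketch of the cluster lookup. It assumes the run-length encoding used by both Quake 1 and Quake 2 visibility data (a zero byte is followed by a count of zero bytes; any other byte holds eight visibility bits) and that the offset of the camera cluster's row has already been read from the visibility lump header. Function names are illustrative.

```python
def decompress_vis(data, offset, num_clusters):
    """Expand one run-length-encoded visibility row into a list of booleans."""
    row_bytes = (num_clusters + 7) // 8
    out = bytearray()
    pos = offset
    while len(out) < row_bytes:
        value = data[pos]
        pos += 1
        if value:
            out.append(value)             # eight literal visibility bits
        else:
            out.extend(bytes(data[pos]))  # zero byte: next byte is a run of zeros
            pos += 1
    # Bit i (lowest bit of byte 0 first) says whether cluster i is visible.
    return [bool(out[i >> 3] & (1 << (i & 7))) for i in range(num_clusters)]


def visible_leaves(leaf_clusters, visible):
    """Indices of leaves whose cluster is visible; cluster -1 means 'no cluster'."""
    return [leaf for leaf, cluster in enumerate(leaf_clusters)
            if cluster >= 0 and visible[cluster]]
```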

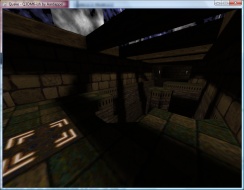

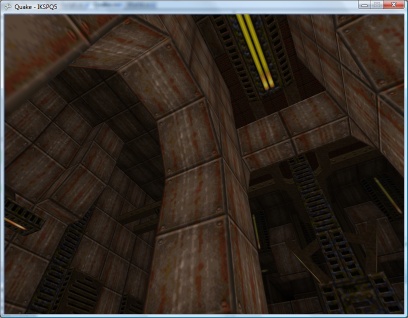

In a nutshell, I now use the visibility cluster information in the BSP to cull large quantities of hidden geometry, which has raised the framerate from 18FPS (base1.bsp) to about 90FPS.

I had a look at the lightmap code again. Some of the lightmaps appeared to be off-centre (most clearly visible when there's a small light bracket on a wall casting a sharp inverted V shadow on the wall underneath it, as the tip of the V drifted to one side). On a whim, I decided that if the size of the lightmap was rounded to the nearest 16 diffuse texture pixels, one could assume that the top-left corner was not at (0,0) but offset by 8 pixels to centre the texture. This is probably utter nonsense, but plugging in the offset results in almost completely smooth lightmaps, like the screenshot above.
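For reference, a Python sketch of how Quake sizes a surface's lightmap (one sample per 16 texture units) and of a lightmap coordinate that includes the half-sample, 8-unit offset. GLQuake's surface builder appears to add the same 8 units before dividing, which would support the hunch above; the function names here are illustrative.

```python
import math

LUXEL = 16  # one lightmap sample covers 16 texture units


def surface_extents(s_values):
    """Texture-space minimum, extent and lightmap size along one axis.

    s_values are the texture-space coordinates of a face's vertices.
    """
    low = math.floor(min(s_values) / LUXEL)
    high = math.ceil(max(s_values) / LUXEL)
    texture_min = low * LUXEL
    extent = (high - low) * LUXEL
    return texture_min, extent, extent // LUXEL + 1


def lightmap_coordinate(s, texture_min, lightmap_size):
    """Map a texture-space coordinate to 0..1, with samples at texel centres."""
    return (s - texture_min + LUXEL / 2) / (lightmap_size * LUXEL)
```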

I quite like Quake 2's colour lightmaps, and I also quite like the chunky look of the software renderer. I've modified the pixel shader for the best of both worlds. I calculate the three components of the final colour individually, taking the brightness value for the colourmap from one of the three channels in the lightmap.

```hlsl
// ColourMapIndex.x already holds the diffuse palette index (from the texture's alpha channel).
// Sample the colour lightmap once, then look up each channel's brightness row separately.
float3 Light = tex2D(LightMapTextureSampler, vsout.LightMapTextureCoordinate).rgb;
float4 Result = 1;
ColourMapIndex.y = 1 - Light.r;
Result.r = tex2D(ColourMapSampler, ColourMapIndex).r;
ColourMapIndex.y = 1 - Light.g;
Result.g = tex2D(ColourMapSampler, ColourMapIndex).g;
ColourMapIndex.y = 1 - Light.b;
Result.b = tex2D(ColourMapSampler, ColourMapIndex).b;
return Result;
```

Journals need more animated GIFs

Thursday, 9th August 2007

Pixel shaders are fun.

I've implemented support for decoding mip-maps from mip textures (embedded in the BSP) and from WAL files (external).

Now, I know that non-power-of-two textures are naughty. Quake uses a number of them, and when loading textures previously I've just let Direct3D do its thing which has appeared to work well.

However, now that I'm directly populating the entire texture, mip-maps and all, I found that Texture2D.SetData was throwing exceptions when I was attempting to shoe-horn in a non-power-of-two texture. Strange. I hacked together a pair of extensions to the Picture class - GetResized(width, height) which returns a resized picture (nearest-neighbour, naturally) - and GetPowerOfTwo(), which returns a picture scaled up to the next power-of-two size if required.
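A Python sketch of the two helpers, operating on a flat, row-major list of pixels rather than the project's Picture class. `resized` and `power_of_two` are hypothetical analogues of `GetResized` and `GetPowerOfTwo`, not their actual code.

```python
def next_power_of_two(n):
    """Smallest power of two that is >= n (for n >= 1)."""
    return 1 << (n - 1).bit_length()


def resized(pixels, width, height, new_width, new_height):
    """Nearest-neighbour resize of a row-major list of pixel values."""
    return [
        pixels[(y * height // new_height) * width + (x * width // new_width)]
        for y in range(new_height)
        for x in range(new_width)
    ]


def power_of_two(pixels, width, height):
    """Scale up to power-of-two dimensions if needed; returns (pixels, w, h)."""
    new_w, new_h = next_power_of_two(width), next_power_of_two(height)
    if (new_w, new_h) == (width, height):
        return pixels, width, height
    return resized(pixels, width, height, new_w, new_h), new_w, new_h
```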

All textures now load correctly, and I can't help but notice that the strangely distorted textures - which I'd put down to crazy texture coordinates - now render correctly! It turns out that all of the distorted textures were non-power-of-two.

The screenshots above demonstrate that Quake 2 is also handled by the software-rendering simulation. The current effect file for the world is as follows:

```hlsl
uniform extern float4x4 WorldViewProj : WORLDVIEWPROJECTION;
uniform extern float Time;
uniform extern bool Rippling;
uniform extern texture DiffuseTexture;
uniform extern texture LightMapTexture;
uniform extern texture ColourMap;

struct VS_OUTPUT
{
    float4 Position : POSITION;
    float2 DiffuseTextureCoordinate : TEXCOORD0;
    float2 LightMapTextureCoordinate : TEXCOORD1;
    float3 SourcePosition : TEXCOORD2;
};

sampler DiffuseTextureSampler = sampler_state
{
    texture = <DiffuseTexture>;
    mipfilter = POINT;
};

sampler LightMapTextureSampler = sampler_state
{
    texture = <LightMapTexture>;
    mipfilter = LINEAR;
    minfilter = LINEAR;
    magfilter = LINEAR;
};

sampler ColourMapSampler = sampler_state
{
    texture = <ColourMap>;
    addressu = CLAMP;
    addressv = CLAMP;
};

VS_OUTPUT Transform(float4 Position : POSITION0, float2 DiffuseTextureCoordinate : TEXCOORD0, float2 LightMapTextureCoordinate : TEXCOORD1)
{
    VS_OUTPUT Out = (VS_OUTPUT)0;

    // Transform the input vertex position:
    Out.Position = mul(Position, WorldViewProj);

    // Copy the other values straight into the output for use in the pixel shader.
    Out.DiffuseTextureCoordinate = DiffuseTextureCoordinate;
    Out.LightMapTextureCoordinate = LightMapTextureCoordinate;
    Out.SourcePosition = Position.xyz;

    return Out;
}

float4 ApplyTexture(VS_OUTPUT vsout) : COLOR
{
    // Start with the original diffuse texture coordinate:
    float2 DiffuseCoord = vsout.DiffuseTextureCoordinate;

    // If the surface is "rippling", wobble the texture coordinate.
    if (Rippling)
    {
        float2 RippleOffset = {
            sin(Time + vsout.SourcePosition.x / 32) / 8,
            cos(Time + vsout.SourcePosition.z / 32) / 8
        };
        DiffuseCoord += RippleOffset;
    }

    // Calculate the colour map look-up coordinate from the diffuse and lightmap textures:
    float2 ColourMapIndex = {
        tex2D(DiffuseTextureSampler, DiffuseCoord).a,
        1 - tex2D(LightMapTextureSampler, vsout.LightMapTextureCoordinate).r
    };

    // Look up and return the value from the colour map.
    return tex2D(ColourMapSampler, ColourMapIndex).rgba;
}

technique TransformAndTexture
{
    pass P0
    {
        vertexShader = compile vs_2_0 Transform();
        pixelShader = compile ps_2_0 ApplyTexture();
    }
}
```

It would no doubt be faster to have two techniques; one for rippling surfaces and one for still surfaces. It is, however, easier to use the above and switch the rippling on and off when required (rather than group surfaces and switch techniques). Given that the framerate rises from ~135FPS to ~137FPS on my video card if I remove the ripple effect altogether, it doesn't seem worth it.

Sorting out the order in which polygons are drawn looks like it's going to get important, as I need to support alpha-blended surfaces for Quake 2, and there are some nasty areas of Z-fighting cropping up.

Alpha-blending in 8-bit? Software Quake didn't support any sort of alpha blending (hence the need to re-vis levels for translucent water in GLQuake, as the areas underneath the opaque water were marked as invisible), and Quake 2 has a data file that maps 16-bit colour values to 8-bit palette indices. Quake 2 also had a "stipple alpha" mode that used a dither pattern to handle the two translucent surface opacities (1/3 and 2/3).

Shaders

Tuesday, 7th August 2007

Following sirob's prompting, I dropped the BasicEffect for rendering and rolled my own effect. After seeing the things that could be done with them (pixel and vertex shaders) I'd assumed they'd be hard to put together, and that I'd need to change my code significantly.

In reality all I've had to do is copy and paste the sample from the SDK documentation, load it into the engine (via the content pipeline), create a custom vertex declaration to handle two sets of texture coordinates (diffuse and lightmap) and strip out all of the duplicate code I had for creating and rendering from two vertex arrays.

```csharp
[StructLayout(LayoutKind.Sequential)]
public struct VertexPositionTextureDiffuseLightMap
{
    public Xna.Vector3 Position;
    public Xna.Vector2 DiffuseTextureCoordinate;
    public Xna.Vector2 LightMapTextureCoordinate;

    public VertexPositionTextureDiffuseLightMap(Xna.Vector3 position, Xna.Vector2 diffuse, Xna.Vector2 lightMap)
    {
        this.Position = position;
        this.DiffuseTextureCoordinate = diffuse;
        this.LightMapTextureCoordinate = lightMap;
    }

    // Position (12 bytes) at offset 0, diffuse coordinate (8 bytes) at offset 12,
    // lightmap coordinate at offset 20 as a second texture coordinate set.
    public readonly static VertexElement[] VertexElements = new VertexElement[] {
        new VertexElement(0, 0, VertexElementFormat.Vector3, VertexElementMethod.Default, VertexElementUsage.Position, 0),
        new VertexElement(0, 12, VertexElementFormat.Vector2, VertexElementMethod.Default, VertexElementUsage.TextureCoordinate, 0),
        new VertexElement(0, 20, VertexElementFormat.Vector2, VertexElementMethod.Default, VertexElementUsage.TextureCoordinate, 1)
    };
}
```

```hlsl
uniform extern float4x4 WorldViewProj : WORLDVIEWPROJECTION;
uniform extern texture DiffuseTexture;
uniform extern texture LightMapTexture;
uniform extern float Time;

struct VS_OUTPUT
{
    float4 Position : POSITION;
    float2 DiffuseTextureCoordinate : TEXCOORD0;
    float2 LightMapTextureCoordinate : TEXCOORD1;
};

sampler DiffuseTextureSampler = sampler_state
{
    Texture = <DiffuseTexture>;
    mipfilter = LINEAR;
};

sampler LightMapTextureSampler = sampler_state
{
    Texture = <LightMapTexture>;
    mipfilter = LINEAR;
};

VS_OUTPUT Transform(float4 Position : POSITION, float2 DiffuseTextureCoordinate : TEXCOORD0, float2 LightMapTextureCoordinate : TEXCOORD1)
{
    VS_OUTPUT Out = (VS_OUTPUT)0;
    Out.Position = mul(Position, WorldViewProj);
    Out.DiffuseTextureCoordinate = DiffuseTextureCoordinate;
    Out.LightMapTextureCoordinate = LightMapTextureCoordinate;
    return Out;
}

float4 ApplyTexture(VS_OUTPUT vsout) : COLOR
{
    float4 DiffuseColour = tex2D(DiffuseTextureSampler, vsout.DiffuseTextureCoordinate).rgba;
    float4 LightMapColour = tex2D(LightMapTextureSampler, vsout.LightMapTextureCoordinate).rgba;
    return DiffuseColour * LightMapColour;
}

technique TransformAndTexture
{
    pass P0
    {
        vertexShader = compile vs_2_0 Transform();
        pixelShader = compile ps_2_0 ApplyTexture();
    }
}
```

Of course, now I have that up and running I might as well have a play with it...

By adding up the individual RGB components of the lightmap texture and dividing by three you can simulate the monochromatic lightmaps used by Quake 2's software renderer. Sadly I know not of a technique to go the other way and provide colourful lightmaps for Quake 1.

Not very interesting, though.

I've always wanted to do something with pixel shaders as you get to play with tricks that are a given in software rendering with the speed of dedicated hardware acceleration. I get the feeling that the effect (or a variation of it, at least) will be handy for watery textures.

```hlsl
float4 ApplyTexture(VS_OUTPUT vsout) : COLOR
{
    // Offset each texture coordinate by a sine wave of the other, animated by Time.
    float2 RippledTexture = vsout.DiffuseTextureCoordinate;
    RippledTexture.x += sin(vsout.DiffuseTextureCoordinate.y * 16 + Time) / 16;
    RippledTexture.y += sin(vsout.DiffuseTextureCoordinate.x * 16 + Time) / 16;

    float4 DiffuseColour = tex2D(DiffuseTextureSampler, RippledTexture).rgba;
    float4 LightMapColour = tex2D(LightMapTextureSampler, vsout.LightMapTextureCoordinate).rgba;
    return DiffuseColour * LightMapColour;
}
```

My code is no doubt suboptimal (and downright stupid).

Naturally, I needed to try and duplicate Scet's software rendering simulation trick.

The colour map (gfx/colormap.lmp) is a 256×64 array of bytes. Each byte is an index to a colour palette entry; the X axis is the colour and the Y axis is the brightness, i.e. RGBColour = Palette[ColourMap[DiffuseColour, Brightness]]. I cram the original diffuse colour palette index into the (unused) alpha channel of the ARGB texture, and leave the lightmaps untouched.

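```hlsl
float2 LookUp = 0;
// X: the original palette index, stored in the diffuse texture's alpha channel.
LookUp.x = tex2D(DiffuseTextureSampler, vsout.DiffuseTextureCoordinate).a;
// Y: the brightness row, taken from the (inverted) lightmap.
LookUp.y = (1 - tex2D(LightMapTextureSampler, vsout.LightMapTextureCoordinate).r) / 4;
return tex2D(ColourMapTextureSampler, LookUp);
```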
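The same lookup on the CPU, as a Python sketch. It assumes `gfx/colormap.lmp` is read as a flat byte string of 64 rows × 256 columns (row = light level, column = original palette index) and that the palette is a list of 256 `(r, g, b)` tuples; `shade` is an illustrative name.

```python
def shade(palette, colormap, colour_index, light_level):
    """RGB colour of a texel with palette index colour_index at the given light level."""
    # The colour map row for this light level remaps the original index...
    remapped = colormap[light_level * 256 + colour_index]
    # ...and the palette turns the remapped index into a colour.
    return palette[remapped]
```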

As I'm not loading the mip-maps (and am letting Direct3D handle generation of mip-maps for me) I have to disable mip-mapping for the above to work, as otherwise you'd end up with non-integral palette indices. The results are therefore a bit noisier in the distance than in vanilla Quake, but I like the 8-bit palette look. At least the fullbright colours work.

Less Colourful Quake 2

Monday, 6th August 2007

I've transferred the BSP rendering code to use the new level loading code, so I can now display correctly-coloured Quake 2 levels.

The Quake stuff is in its own assembly, and is shared by the WinForms resource browser project and the XNA renderer.

I'm also now applying lightmaps via multiplication rather than addition, so they look significantly better.

A shader solution would be optimal. I'm currently just drawing the geometry twice, the second time with some alpha blending enabled.

Keyboard Handler Fix

Friday, 3rd August 2007

ArchG pointed out a bug in the TextInputHandler class I posted a while back - no reference to the delegate instance used for the unmanaged callback was held, so as soon as the garbage collector kicked in things went rather horribly wrong.

```csharp
/*
 * XnaTextInput.TextInputHandler - benryves@benryves.com
 *
 * This is quick and very, VERY dirty.
 *
 * It uses Win32 message hooks to grab messages (as we don't get a nicely wrapped WndProc).
 * I couldn't get WH_KEYBOARD to work (accessing the data via its pointer resulted in access
 * violation exceptions), nor could I get WH_CALLWNDPROC to work.
 * Maybe someone who actually knows what they're doing can work something out that's not so
 * kludgy.
 *
 * This quite obviously relies on a Win32 nastiness, so this is for Windows XNA games only!
 */

#region Using Statements
using System;
using System.Runtime.InteropServices;
using System.Windows.Forms; // This class exposes WinForms-style key events.
#endregion

namespace XnaTextInput
{
    /// <summary>
    /// A class to provide text input capabilities to an XNA application via Win32 hooks.
    /// </summary>
    class TextInputHandler : IDisposable
    {
        #region Win32

        /// <summary>
        /// Types of hook that can be installed using the SetWindowsHookEx function.
        /// </summary>
        public enum HookId
        {
            WH_CALLWNDPROC = 4,
            WH_CALLWNDPROCRET = 12,
            WH_CBT = 5,
            WH_DEBUG = 9,
            WH_FOREGROUNDIDLE = 11,
            WH_GETMESSAGE = 3,
            WH_HARDWARE = 8,
            WH_JOURNALPLAYBACK = 1,
            WH_JOURNALRECORD = 0,
            WH_KEYBOARD = 2,
            WH_KEYBOARD_LL = 13,
            WH_MAX = 11,
            WH_MAXHOOK = WH_MAX,
            WH_MIN = -1,
            WH_MINHOOK = WH_MIN,
            WH_MOUSE_LL = 14,
            WH_MSGFILTER = -1,
            WH_SHELL = 10,
            WH_SYSMSGFILTER = 6,
        };

        /// <summary>
        /// Window message types.
        /// </summary>
        /// <remarks>Heavily abridged, naturally.</remarks>
        public enum WindowMessage
        {
            WM_KEYDOWN = 0x100,
            WM_KEYUP = 0x101,
            WM_CHAR = 0x102,
        };

        /// <summary>
        /// A delegate used to create a hook callback.
        /// </summary>
        public delegate int GetMsgProc(int nCode, int wParam, ref Message msg);

        /// <summary>
        /// Install an application-defined hook procedure into a hook chain.
        /// </summary>
        /// <param name="idHook">Specifies the type of hook procedure to be installed.</param>
        /// <param name="lpfn">Pointer to the hook procedure.</param>
        /// <param name="hmod">Handle to the DLL containing the hook procedure pointed to by the lpfn parameter.</param>
        /// <param name="dwThreadId">Specifies the identifier of the thread with which the hook procedure is to be associated.</param>
        /// <returns>If the function succeeds, the return value is the handle to the hook procedure. Otherwise returns 0.</returns>
        [DllImport("user32.dll", EntryPoint = "SetWindowsHookExA")]
        public static extern IntPtr SetWindowsHookEx(HookId idHook, GetMsgProc lpfn, IntPtr hmod, int dwThreadId);

        /// <summary>
        /// Removes a hook procedure installed in a hook chain by the SetWindowsHookEx function.
        /// </summary>
        /// <param name="hHook">Handle to the hook to be removed. This parameter is a hook handle obtained by a previous call to SetWindowsHookEx.</param>
        /// <returns>If the function fails, the return value is zero. To get extended error information, call GetLastError.</returns>
        [DllImport("user32.dll")]
        public static extern int UnhookWindowsHookEx(IntPtr hHook);

        /// <summary>
        /// Passes the hook information to the next hook procedure in the current hook chain.
        /// </summary>
        /// <param name="hHook">Ignored.</param>
        /// <param name="ncode">Specifies the hook code passed to the current hook procedure.</param>
        /// <param name="wParam">Specifies the wParam value passed to the current hook procedure.</param>
        /// <param name="lParam">Specifies the lParam value passed to the current hook procedure.</param>
        /// <returns>This value is returned by the next hook procedure in the chain.</returns>
        [DllImport("user32.dll")]
        public static extern int CallNextHookEx(int hHook, int ncode, int wParam, ref Message lParam);

        /// <summary>
        /// Translates virtual-key messages into character messages.
        /// </summary>
        /// <param name="lpMsg">Pointer to a Message structure that contains message information retrieved from the calling thread's message queue.</param>
        /// <returns>If the message is translated (that is, a character message is posted to the thread's message queue), the return value is true.</returns>
        [DllImport("user32.dll")]
        public static extern bool TranslateMessage(ref Message lpMsg);

        /// <summary>
        /// Retrieves the thread identifier of the calling thread.
        /// </summary>
        /// <returns>The thread identifier of the calling thread.</returns>
        [DllImport("kernel32.dll")]
        public static extern int GetCurrentThreadId();

        #endregion

        #region Hook management and class construction.

        /// <summary>Handle for the created hook.</summary>
        private readonly IntPtr HookHandle;

        /// <summary>
        /// Reference to the hook callback delegate. Holding it in a field stops the garbage
        /// collector from reclaiming the delegate while unmanaged code can still call it.
        /// </summary>
        private readonly GetMsgProc ProcessMessagesCallback;

        /// <summary>Create an instance of the TextInputHandler.</summary>
        /// <param name="whnd">Handle of the window you wish to receive messages (and thus keyboard input) from.</param>
        public TextInputHandler(IntPtr whnd)
        {
            // Create the delegate callback:
            this.ProcessMessagesCallback = new GetMsgProc(ProcessMessages);
            // Create the keyboard hook:
            this.HookHandle = SetWindowsHookEx(HookId.WH_GETMESSAGE, this.ProcessMessagesCallback, IntPtr.Zero, GetCurrentThreadId());
        }

        public void Dispose()
        {
            // Remove the hook.
            if (this.HookHandle != IntPtr.Zero) UnhookWindowsHookEx(this.HookHandle);
        }

        #endregion

        #region Message processing

        private int ProcessMessages(int nCode, int wParam, ref Message msg)
        {
            // Check if we must process this message (and whether it has been retrieved via GetMessage):
            if (nCode == 0 && wParam == 1)
            {
                // We need character input, so use TranslateMessage to generate WM_CHAR messages.
                TranslateMessage(ref msg);

                // If it's one of the keyboard-related messages, raise an event for it:
                switch ((WindowMessage)msg.Msg)
                {
                    case WindowMessage.WM_CHAR:
                        this.OnKeyPress(new KeyPressEventArgs((char)msg.WParam));
                        break;
                    case WindowMessage.WM_KEYDOWN:
                        this.OnKeyDown(new KeyEventArgs((Keys)msg.WParam));
                        break;
                    case WindowMessage.WM_KEYUP:
                        this.OnKeyUp(new KeyEventArgs((Keys)msg.WParam));
                        break;
                }
            }

            // Call next hook in chain:
            return CallNextHookEx(0, nCode, wParam, ref msg);
        }

        #endregion

        #region Events

        public event KeyEventHandler KeyUp;
        protected virtual void OnKeyUp(KeyEventArgs e)
        {
            if (this.KeyUp != null) this.KeyUp(this, e);
        }

        public event KeyEventHandler KeyDown;
        protected virtual void OnKeyDown(KeyEventArgs e)
        {
            if (this.KeyDown != null) this.KeyDown(this, e);
        }

        public event KeyPressEventHandler KeyPress;
        protected virtual void OnKeyPress(KeyPressEventArgs e)
        {
            if (this.KeyPress != null) this.KeyPress(this, e);
        }

        #endregion
    }
}
```

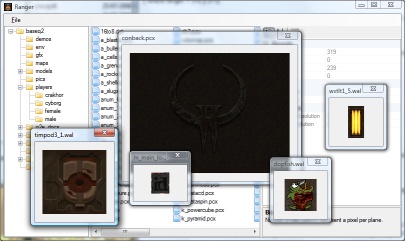

I wrote a crude ZSoft PCX loader (it only handles single-plane images with 8 bits per plane, which is sufficient for Quake 2).
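A Python sketch of such a decoder, limited in the same way to 8-bit, single-plane images. It assumes the standard ZSoft layout: a 128-byte header, RLE scanlines where a byte with its top two bits set is a run count for the following byte, and a 256-colour palette in the last 768 bytes preceded by a `0x0C` marker. Runs that cross scanline boundaries are not handled.

```python
import struct


def decode_pcx(data):
    """Decode an 8-bit, single-plane PCX image.

    Returns (width, height, pixels, palette): pixels is a flat list of palette
    indices and palette is a list of 256 (r, g, b) tuples.
    """
    manufacturer, _version, encoding, bits = struct.unpack_from("<4B", data, 0)
    xmin, ymin, xmax, ymax = struct.unpack_from("<4H", data, 4)
    planes = data[65]
    (bytes_per_line,) = struct.unpack_from("<H", data, 66)
    if manufacturer != 0x0A or encoding != 1 or bits != 8 or planes != 1:
        raise ValueError("only 8-bit, single-plane RLE PCX files are supported")
    width, height = xmax - xmin + 1, ymax - ymin + 1

    pixels = []
    pos = 128  # image data follows the 128-byte header
    for _ in range(height):
        line = bytearray()
        while len(line) < bytes_per_line:
            value = data[pos]
            pos += 1
            if value >= 0xC0:  # top two bits set: run of the next byte
                line.extend(bytes([data[pos]]) * (value & 0x3F))
                pos += 1
            else:              # literal byte
                line.append(value)
        pixels.extend(line[:width])  # bytes_per_line may include padding

    # The 256-colour palette is the last 768 bytes, preceded by a 0x0C marker.
    if data[-769] != 0x0C:
        raise ValueError("missing 256-colour palette")
    raw = data[-768:]
    palette = [tuple(raw[i:i + 3]) for i in range(0, 768, 3)]
    return width, height, pixels, palette
```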

Using this loader I found colormap.pcx, which appears to perform the job of palette and colour map for Quake II.

.wal files now open with the correct palette. I've also copied over most of the BSP loading code, but it needs a good going-over to make it slightly more sane (especially where the hacks for Quake II support have been added).

Loader Change

Thursday, 2nd August 2007

I've started rewriting the underlying resource loading code to better handle multiple versions of the game.

To help with this I'm writing a WinForms-based resource browser.

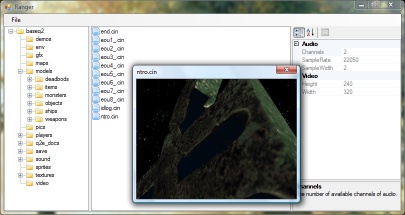

(The only real Quake-related change visible in the above screenshot is a new loader for cinematics, the .cin files used in Quake 2.)

To make loading resources easier I've added a number of new generic types. For example, the Picture class always represents a 32-bits-per-pixel ARGB 2D picture. The decoders for various formats always have access to the resource manager, so they can request palette information if they need it. To help further, there are some handy interfaces that a specific format class can implement: for example, a class implementing IPictureLoader (such as WallTexture, which handles .wal files) will always have a GetPicture() method.
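A guess at how these pieces could fit together is sketched below. `Picture`, `WallTexture`, `IPictureLoader` and `GetPicture()` are the names from the description above, but their members here are illustrative only; `IResourceManager`, `GetPalette()` and `StaticResourceManager` are hypothetical. The .wal parsing assumes Quake II's `miptex_t` layout (a 32-byte name followed by little-endian width, height and four mip offsets) and reads only the full-size mip level.

```csharp
using System;
using System.Text;

// A 32-bits-per-pixel ARGB picture, whatever format it was decoded from.
public sealed class Picture
{
    public int Width { get; }
    public int Height { get; }
    public uint[] Pixels { get; } // 0xAARRGGBB, row-major

    public Picture(int width, int height)
    {
        Width = width;
        Height = height;
        Pixels = new uint[width * height];
    }
}

// Implemented by any format class that can produce a Picture.
public interface IPictureLoader
{
    Picture GetPicture();
}

// Hypothetical: what a decoder can ask the resource manager for.
public interface IResourceManager
{
    byte[] GetPalette(); // 256 RGB triplets (768 bytes)
}

// Hypothetical trivial resource manager that always returns the same palette.
public sealed class StaticResourceManager : IResourceManager
{
    private readonly byte[] palette;
    public StaticResourceManager(byte[] palette) { this.palette = palette; }
    public byte[] GetPalette() => palette;
}

// Illustrative .wal decoder, assuming Quake II's miptex_t header layout.
public sealed class WallTexture : IPictureLoader
{
    private readonly IResourceManager resources;
    private readonly byte[] data;

    public WallTexture(IResourceManager resources, byte[] data)
    {
        this.resources = resources;
        this.data = data;
    }

    private static int ReadInt32(byte[] d, int o) => d[o] | d[o + 1] << 8 | d[o + 2] << 16 | d[o + 3] << 24;

    // The first 32 bytes hold the null-padded texture name.
    public string Name
    {
        get
        {
            string s = Encoding.ASCII.GetString(data, 0, 32);
            int nul = s.IndexOf('\0');
            return nul >= 0 ? s.Substring(0, nul) : s;
        }
    }

    public Picture GetPicture()
    {
        int width = ReadInt32(data, 32), height = ReadInt32(data, 36);
        int offset = ReadInt32(data, 40);        // offsets[0]: the full-size mip level
        byte[] palette = resources.GetPalette(); // palette requested only when needed
        var picture = new Picture(width, height);
        for (int i = 0; i < picture.Pixels.Length; i++)
        {
            int p = data[offset + i] * 3;
            picture.Pixels[i] = 0xFF000000u | (uint)palette[p] << 16 | (uint)palette[p + 1] << 8 | palette[p + 2];
        }
        return picture;
    }
}
```

Because `WallTexture` only asks for the palette inside `GetPicture()`, the same class would work whether the palette ends up coming from colormap.pcx or somewhere else.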

The loader classes are also given attributes specifying which file extensions they handle. (This project uses quite a bit of reflection now.) The only issue I can see with this is files that share an extension but contain different types of data, such as the range of .lmp files.
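The attribute-plus-reflection approach might look something like this sketch. `HandlesExtensionAttribute`, `LoaderRegistry` and the loader class names are hypothetical. Mapping each extension to a list of types, rather than a single type, at least makes the .lmp collision visible; something else (the file's name or size, say) would still have to choose between the candidates.

```csharp
using System;
using System.Collections.Generic;
using System.Reflection;

// Hypothetical attribute: marks a loader class with a file extension it handles.
[AttributeUsage(AttributeTargets.Class, AllowMultiple = true)]
public sealed class HandlesExtensionAttribute : Attribute
{
    public string Extension { get; }
    public HandlesExtensionAttribute(string extension) { Extension = extension.ToLowerInvariant(); }
}

// Example loaders (hypothetical; bodies omitted).
[HandlesExtension(".wal")] public sealed class WallTextureLoader { }
[HandlesExtension(".pcx")] public sealed class PcxLoader { }
// Two classes claiming .lmp illustrate the ambiguity.
[HandlesExtension(".lmp")] public sealed class LmpPictureLoader { }
[HandlesExtension(".lmp")] public sealed class LmpPaletteLoader { }

public static class LoaderRegistry
{
    // Scans an assembly once and maps each extension to every class that claims it.
    public static Dictionary<string, List<Type>> Build(Assembly assembly)
    {
        var map = new Dictionary<string, List<Type>>();
        foreach (Type type in assembly.GetTypes())
        {
            foreach (var attribute in type.GetCustomAttributes<HandlesExtensionAttribute>(false))
            {
                if (!map.TryGetValue(attribute.Extension, out var list))
                    map[attribute.Extension] = list = new List<Type>();
                list.Add(type);
            }
        }
        return map;
    }
}
```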

In addition, some individual files within the packages contain multiple sub-files (for example, the .wad files in Quake). I'm not sure how I'll handle this; I'm currently thinking of having the .wad loader implement IPackage so you could access files via gfx/somewad.wad/somefileinthewad, but some files don't have names or extensions.
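Resolving such a path could start with something like the sketch below, which splits it at the first segment that names a package. The set of package extensions and the `NestedPath.TrySplit` helper are hypothetical, and it does nothing about unnamed entries, which would still need some synthetic name (an index, perhaps).

```csharp
using System;
using System.Collections.Generic;
using System.IO;

public static class NestedPath
{
    // Hypothetical set of extensions whose files are themselves packages.
    static readonly HashSet<string> PackageExtensions =
        new HashSet<string>(StringComparer.OrdinalIgnoreCase) { ".pak", ".wad" };

    // Splits "gfx/somewad.wad/somefileinthewad" into ("gfx/somewad.wad", "somefileinthewad").
    // Returns false if no segment before the last one names a package.
    public static bool TrySplit(string path, out string packagePath, out string innerPath)
    {
        string[] segments = path.Split('/');
        for (int i = 0; i < segments.Length - 1; i++)
        {
            if (PackageExtensions.Contains(Path.GetExtension(segments[i])))
            {
                packagePath = string.Join("/", segments, 0, i + 1);
                innerPath = string.Join("/", segments, i + 1, segments.Length - i - 1);
                return true;
            }
        }
        packagePath = null;
        innerPath = path;
        return false;
    }
}
```

The resource manager could then open `packagePath` as an IPackage and look up `innerPath` inside it, recursing if that path is itself nested.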

Quake 2 and Emulation

Wednesday, 1st August 2007

The current design of the Quake project is that there are a bunch of classes in the Data namespace that are used to decode Quake's structures in a fairly brain-dead manner. To do anything useful with them you need to build up your own structures suitable for the way you intend to render the level.

The problem comes when you try to load resources from different versions of Quake. Quake 1 and Quake 2 have quite a few differences. A major one is that every BSP level in Quake 1 contains its own mip textures, so you can call a method in the BSP class that returns sane texture coordinates, as it can inspect the texture dimensions itself. Quake 2 stores all of its textures externally in .wal resources, so the BSP class can no longer calculate texture coordinates: it can't work out how large the textures are, as it can't see outside itself.

I guess the only sane way to solve this is to hide the native types from the end user and wrap everything up, but I've never liked this much, as you might neglect to wrap up something that someone else would find very important, or do something that is unsuitable for the way they really wanted to work.
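To illustrate why the texture size matters, here is a sketch of the usual Quake-style projection: each texinfo holds two axis vectors with offsets, and a vertex's texel coordinates are its dot products with them plus the offsets. Turning those into 0..1 coordinates then needs the texture's dimensions, which a Quake II BSP cannot supply on its own. `TexInfo`, `TexCoords` and the `getTextureSize` callback are hypothetical names.

```csharp
using System;
using System.Numerics;

// Quake-style texture projection: two axis vectors, each with an offset.
public readonly struct TexInfo
{
    public readonly Vector3 S, T;
    public readonly float SOffset, TOffset;
    public readonly string TextureName; // Quake II texinfo refers to its texture by name

    public TexInfo(Vector3 s, float sOffset, Vector3 t, float tOffset, string textureName)
    {
        S = s; SOffset = sOffset; T = t; TOffset = tOffset; TextureName = textureName;
    }
}

public static class TexCoords
{
    // Texel-space coordinates need only the texinfo...
    public static Vector2 ToTexels(Vector3 vertex, TexInfo info) =>
        new Vector2(Vector3.Dot(vertex, info.S) + info.SOffset,
                    Vector3.Dot(vertex, info.T) + info.TOffset);

    // ...but normalised coordinates need the texture size, which for Quake II lives in a
    // .wal file outside the BSP. getTextureSize is a hypothetical resource-manager callback.
    public static Vector2 ToNormalised(Vector3 vertex, TexInfo info,
                                       Func<string, (int Width, int Height)> getTextureSize)
    {
        Vector2 texels = ToTexels(vertex, info);
        var (width, height) = getTextureSize(info.TextureName);
        return new Vector2(texels.X / width, texels.Y / height);
    }
}
```

Passing the size lookup in as a delegate is one way to let the BSP code stay ignorant of where textures come from.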

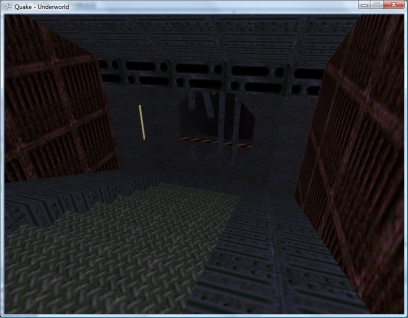

Anyhow. I've hacked around the BSP loader to within an inch of its life and it seems to be (sort of) loading Quake 2 levels for brute-force rendering. Quake 2 boasts truecolour lightmaps, improving the image quality quite significantly!

The truecolour lightmaps show off the Strogg disco lighting to its best effect. One of the problems with the Quake II BSP file format is that the indexing of lumps inside the file has changed. Not good.
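One way to cope is to look lumps up by name and keep a directory-index table per format, as in this sketch. The indices are taken from Quake's bspfile.h (version 29, 15 lumps) and Quake II's qfiles.h ("IBSP" ident, version 38, 19 lumps) as I understand them; the `Lump` enum and `BspHeader` class are hypothetical, and only lumps common to both formats are listed. A matching index is only half the battle, mind: records such as Quake II's texinfo also have a different layout.

```csharp
using System;
using System.Collections.Generic;

// Lumps both formats share, by name.
public enum Lump { Entities, Planes, Vertices, Visibility, Nodes, TexInfo, Faces, Lighting, Leaves, Edges, SurfEdges, Models }

public static class BspHeader
{
    // Directory indices for Quake (version 29).
    static readonly Dictionary<Lump, int> Quake1 = new Dictionary<Lump, int>
    {
        [Lump.Entities] = 0, [Lump.Planes] = 1, [Lump.Vertices] = 3, [Lump.Visibility] = 4,
        [Lump.Nodes] = 5, [Lump.TexInfo] = 6, [Lump.Faces] = 7, [Lump.Lighting] = 8,
        [Lump.Leaves] = 10, [Lump.Edges] = 12, [Lump.SurfEdges] = 13, [Lump.Models] = 14,
    };

    // Directory indices for Quake II ("IBSP", version 38).
    static readonly Dictionary<Lump, int> Quake2 = new Dictionary<Lump, int>
    {
        [Lump.Entities] = 0, [Lump.Planes] = 1, [Lump.Vertices] = 2, [Lump.Visibility] = 3,
        [Lump.Nodes] = 4, [Lump.TexInfo] = 5, [Lump.Faces] = 6, [Lump.Lighting] = 7,
        [Lump.Leaves] = 8, [Lump.Edges] = 11, [Lump.SurfEdges] = 12, [Lump.Models] = 13,
    };

    static int ReadInt32(byte[] d, int o) => d[o] | d[o + 1] << 8 | d[o + 2] << 16 | d[o + 3] << 24;

    // Returns a lump's (offset, length), choosing the directory layout from the header.
    public static (int Offset, int Length) GetLump(byte[] bsp, Lump lump)
    {
        int directoryStart;
        Dictionary<Lump, int> indices;
        if (bsp[0] == 'I' && bsp[1] == 'B' && bsp[2] == 'S' && bsp[3] == 'P' && ReadInt32(bsp, 4) == 38)
        {
            directoryStart = 8; // ident + version, then the lump directory
            indices = Quake2;
        }
        else if (ReadInt32(bsp, 0) == 29)
        {
            directoryStart = 4; // version, then the lump directory
            indices = Quake1;
        }
        else throw new NotSupportedException("Unknown BSP version.");

        int entry = directoryStart + indices[lump] * 8; // each entry: int offset, int length
        return (ReadInt32(bsp, entry), ReadInt32(bsp, entry + 4));
    }
}
```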

That's a bit better.

Quake II's lightmaps tend to stick to the red/brown/yellow end of the spectrum, but that is a truecolour set of lightmaps in action!

The lightmaps tend to look a bit grubby where they don't line up between faces. Combining all the lightmaps for a plane into a single texture should help, and would also reduce the overhead of loading thousands of tiny textures (which I'm guessing have to be scaled up to a power of two). I'll have to look into it.
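A common way to combine many small lightmaps into one texture is a "shelf" packer: rectangles are placed left to right in rows, and a new row starts below whenever the current one is full. The sketch below is a simple version of that technique (not necessarily what Quake itself does), and `ShelfPacker` is a hypothetical name. `NextPowerOfTwo` shows the rounding each tiny lightmap would otherwise need on power-of-two-only hardware.

```csharp
using System;

// A simple shelf packer for a square atlas (e.g. many small lightmaps in one texture).
public sealed class ShelfPacker
{
    private readonly int size;
    private int cursorX, shelfY, shelfHeight;

    public ShelfPacker(int size) { this.size = size; }

    // Tries to reserve a w x h rectangle; on success x and y receive its top-left corner.
    public bool TryPack(int w, int h, out int x, out int y)
    {
        x = y = -1;
        if (w <= 0 || h <= 0 || w > size || h > size) return false;

        int nextX = cursorX, nextY = shelfY, nextShelfHeight = shelfHeight;
        if (nextX + w > size) // current shelf is full: start a new one below it
        {
            nextY += nextShelfHeight;
            nextX = 0;
            nextShelfHeight = 0;
        }
        if (nextY + h > size) return false; // atlas is full (state left unchanged)

        x = nextX;
        y = nextY;
        cursorX = nextX + w;
        shelfY = nextY;
        shelfHeight = Math.Max(nextShelfHeight, h);
        return true;
    }

    // Smallest power of two >= n (for n >= 1).
    public static int NextPowerOfTwo(int n)
    {
        int p = 1;
        while (p < n) p <<= 1;
        return p;
    }
}
```

Each face would then use its lightmap's position in the atlas to offset and scale its lightmap coordinates. Sorting the lightmaps by height before packing usually wastes less space.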

On to .wal (wall texture) loading - and I can't find a palette anywhere inside the Quake II pack files. I did find a .act (Photoshop palette) that claimed to be for Quake II, but it doesn't quite seem to match. It's probably made up of the right colours, but not in the right order.

Fortunately I have some PAK files with replacement JPEG textures inside them and can load those instead for the moment.

The brightness looks strange due to the bad way I apply the lightmaps - some kludgy forced two-pass affair with alpha blending modes set to something that sort of adds the two textures together in a not-very-convincing manner.

Can anyone recommend a good introduction to shaders for XNA? I'm not really trying to do anything that exciting.

This is a really bad and vague overview of the emulation technique I use in Cogwheel, so I apologise in advance. Emulation itself is very simple when done in the following manner - all you really need is a half-decent knowledge of how the computer you're emulating works at the assembly level. The following is rather Z80-specific.

At the heart of the system is its CPU. This device reads instructions from memory and, depending on the value it reads, performs a variety of different actions. It has a small amount of memory inside itself which it uses to store its registers (variables used during execution). For example, the PC register is used as a pointer to the next instruction to fetch and execute from memory, and the SP register points at the top of the stack.

It can interact with the rest of the system in three main ways:

- Read/Write Memory

- Input/Output Hardware

- Interrupt Request

I assume you're familiar with memory.

The hardware I refer to are peripheral devices such as video display processors, keypads, sound generators and so on. Data is written to and read from these devices on request. What the hardware device does with that data is up to it. I'll ignore interrupt requests for the moment.

At an electronic level, the CPU communicates with memory and hardware using two buses and a handful of control pins. The two buses are the address bus and the data bus. The address bus is read-only (when viewed from outside the CPU) and is used to specify a memory address or a hardware port number. It is 16 bits wide, meaning that 64KB of memory can be addressed. Due to the design, only the lower 8 bits are normally used for hardware addressing, giving you up to 256 different hardware ports.

The data bus is 8-bits wide (making the Z80 an "8-bit" CPU). It can be read from or written to, depending on the current instruction.

The exact function of these buses - whether you're addressing memory or a hardware device, or whether you're reading or writing - is relayed to the external hardware via some control pins on the CPU itself. The emulator author doesn't really need to emulate these. Rather, we can do something like this:

```csharp
// Declared partial, as the CPU core is added to this class in further parts.
partial class CpuEmulator {

    public virtual void WriteMemory(ushort address, byte value) {
        // Write to memory.
    }

    public virtual byte ReadMemory(ushort address) {
        // Read from memory.
        return 0x00;
    }

    public virtual void WriteHardware(ushort address, byte value) {
        // Write to hardware.
    }

    public virtual byte ReadHardware(ushort address) {
        // Read from hardware.
        return 0x00;
    }

}
```

A computer with a fixed 64KB RAM, keyboard on hardware port 0 and console (for text output) on port 1 might look like this:

```csharp
using System;

class SomeBadComputer : CpuEmulator {

    private byte[] AllMemory = new byte[64 * 1024];

    public override void WriteMemory(ushort address, byte value) {
        AllMemory[address] = value;
    }

    public override byte ReadMemory(ushort address) {
        return AllMemory[address];
    }

    public override void WriteHardware(ushort address, byte value) {
        // Only the lower 8 bits of the address select the port.
        switch (address & 0xFF) {
            case 1: Console.Write((char)value); break;
        }
    }

    public override byte ReadHardware(ushort address) {
        switch (address & 0xFF) {
            // ReadKey returns a ConsoleKeyInfo, so take its character (without echoing it).
            case 0: return (byte)Console.ReadKey(true).KeyChar;
            default: return 0x00;
        }
    }

}
```

This is all very well, but how does the CPU actually do anything worthwhile?

It needs to read instructions from memory, decode them, and act on them. Suppose our CPU had two registers - 16-bit PC (program counter) and 8-bit A (accumulator) and this instruction set:

- 00nn: Load nn into the accumulator.
- 01nn: Output the accumulator to port nn.
- 02nn: Input to the accumulator from port nn.
- 03nnnn: Read from memory address nnnn into the accumulator.
- 04nnnn: Write the accumulator to memory address nnnn.
- 05nnnn: Jump to address nnnn.

(16-bit operands such as nnnn are stored low byte first.)

Extending the above CpuEmulator class, we could get something like this:

```csharp
partial class CpuEmulator {

    public ushort RegPC = 0;
    public byte RegA = 0;

    private int CyclesPending = 0;

    public void FetchExecute() {
        switch (ReadMemory(RegPC++)) {
            case 0x00: // Load nn into accumulator.
                RegA = ReadMemory(RegPC++);
                CyclesPending += 8;
                break;
            case 0x01: // Output accumulator to port nn.
                WriteHardware(ReadMemory(RegPC++), RegA);
                CyclesPending += 8;
                break;
            case 0x02: // Input to accumulator from port nn.
                RegA = ReadHardware(ReadMemory(RegPC++));
                CyclesPending += 16;
                break;
            case 0x03: // Read from memory address nnnn (C# evaluates operands left to right, so the low byte comes first).
                RegA = ReadMemory((ushort)(ReadMemory(RegPC++) + ReadMemory(RegPC++) * 256));
                CyclesPending += 16;
                break;
            case 0x04: // Write accumulator to memory address nnnn.
                WriteMemory((ushort)(ReadMemory(RegPC++) + ReadMemory(RegPC++) * 256), RegA);
                CyclesPending += 24;
                break;
            case 0x05: // Jump to address nnnn.
                RegPC = (ushort)(ReadMemory(RegPC++) + ReadMemory(RegPC++) * 256);
                CyclesPending += 24;
                break;
            default: // NOP
                CyclesPending += 4;
                break;
        }
    }

}
```

The CyclesPending variable is used for timing. Instructions take a variable length of time to run (depending on complexity, length of opcode, whether it needs to access memory and so on). This time is typically measured in the number of clock cycles taken for the CPU to execute the instruction.

Using the above CyclesPending += x style one can write a function that will execute a particular number of cycles:

```csharp
partial class CpuEmulator {

    public void Tick(int cycles) {
        CyclesPending -= cycles;
        while (CyclesPending < 0) FetchExecute();
    }

}
```
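To see the pieces working together, here is a self-contained restatement of the toy CPU above, folded into one hypothetical `ToyMachine` class. Port 1 appends to a `StringBuilder` instead of the console so the output can be checked. The test program loads 'H', outputs it, loads 'i', outputs it, then jumps to itself forever.

```csharp
using System.Text;

// Self-contained restatement of the toy CPU, wired to a hypothetical test machine.
public sealed class ToyMachine
{
    public ushort RegPC;
    public byte RegA;
    private int cyclesPending;

    public readonly byte[] Memory = new byte[64 * 1024];
    public readonly StringBuilder Output = new StringBuilder(); // stands in for the console on port 1

    private byte ReadMemory(ushort address) => Memory[address];
    private void WriteMemory(ushort address, byte value) => Memory[address] = value;
    private void WriteHardware(ushort port, byte value) { if ((port & 0xFF) == 1) Output.Append((char)value); }
    private byte ReadHardware(ushort port) => 0x00; // no input devices in this sketch

    // Reads a 16-bit operand, low byte first.
    private ushort ReadOperandWord()
    {
        int low = ReadMemory(RegPC++);
        int high = ReadMemory(RegPC++);
        return (ushort)(low | high << 8);
    }

    private void FetchExecute()
    {
        switch (ReadMemory(RegPC++))
        {
            case 0x00: RegA = ReadMemory(RegPC++); cyclesPending += 8; break;
            case 0x01: WriteHardware(ReadMemory(RegPC++), RegA); cyclesPending += 8; break;
            case 0x02: RegA = ReadHardware(ReadMemory(RegPC++)); cyclesPending += 16; break;
            case 0x03: RegA = ReadMemory(ReadOperandWord()); cyclesPending += 16; break;
            case 0x04: WriteMemory(ReadOperandWord(), RegA); cyclesPending += 24; break;
            case 0x05: RegPC = ReadOperandWord(); cyclesPending += 24; break;
            default: cyclesPending += 4; break; // NOP
        }
    }

    // Runs whole instructions until at least the requested number of cycles has elapsed.
    public void Tick(int cycles)
    {
        cyclesPending -= cycles;
        while (cyclesPending < 0) FetchExecute();
    }
}
```

Usage: copy `{ 0x00, 0x48, 0x01, 0x01, 0x00, 0x69, 0x01, 0x01, 0x05, 0x08, 0x00 }` into `Memory` at address 0 and call `Tick(100)`. The two loads and two outputs use 32 cycles, the jump-to-self spins for the rest, `Output` holds "Hi", and the overshoot past 100 cycles is carried into the next `Tick` call.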

For some truly terrifying code, see an oldish version of Cogwheel's instruction decoding switch block. That code was generated automatically from a text file; I didn't hand-type it all.

Um... that's pretty much all there is. The rest is reading datasheets! Your CPU would need to execute most (if not all) instructions correctly, updating its internal state (and registers) as the hardware would. The non-CPU hardware (video processor, sound processor, controllers and so on) would also need to respond to data reads and writes correctly.

As far as timing goes, the various bits of hardware need to run at their own pace, so the emulator has to interleave them. On the Master System, one scanline (of the video processor) is a good granularity for this. Cogwheel provides this method to run the emulator for a single frame:

```csharp
public void RunFrame() {
    this.VDP.RunFramePending = false;
    while (!this.VDP.RunFramePending) {
        this.VDP.RasteriseLine();
        this.FetchExecute(228);
    }
}
```

In the Master System's case, one scanline is displayed every 228 clock cycles. Some programs update the VDP on every scanline (e.g. changing the background horizontal scroll offset to skew the image in a driving game).
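As a sanity check on that figure, assuming the usual NTSC Master System numbers (262 scanlines per frame and a Z80 clocked at 3.579545 MHz), 228 cycles per line works out at roughly 60 frames per second:

$$228 \times 262 = 59\,736 \text{ cycles per frame}, \qquad \frac{3\,579\,545 \text{ Hz}}{59\,736 \text{ cycles}} \approx 59.92 \text{ frames per second}$$

The same arithmetic for a PAL machine (313 lines, about 3.547 MHz) gives roughly 49.7 frames per second.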

The above is embarrassingly vague, so if anyone is interested enough to want clarification on anything I'd be happy to give it.

QTEST

Tuesday, 31st July 2007

Looks nice, I could never figure out Quake's lightmaps.

The unofficial Quake specs are a bit confusing on this matter. To get the size of the lightmap, find the bounding rectangle for the face (using the horizontal and vertical vectors to convert the 3D vertices to 2D in the same way as is done for the texture coordinates). Then divide by 16, round outwards and add one, like this:

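$$\text{Width} = \left\lceil \frac{\text{Max}_X}{16} \right\rceil - \left\lfloor \frac{\text{Min}_X}{16} \right\rfloor + 1$$

$$\text{Height} = \left\lceil \frac{\text{Max}_Y}{16} \right\rceil - \left\lfloor \frac{\text{Min}_Y}{16} \right\rfloor + 1$$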
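In code, assuming the face's vertices have already been projected onto the texinfo's two axis vectors (plus offsets) to give 2D coordinates, the calculation might look like this; `LightmapSketch.LightmapSize` is a hypothetical helper.

```csharp
using System;

public static class LightmapSketch
{
    // Lightmap dimensions from a face's vertices in texture space (s, t),
    // with one lightmap sample per 16 texels.
    public static (int Width, int Height) LightmapSize(float[] s, float[] t)
    {
        float minS = float.MaxValue, maxS = float.MinValue;
        float minT = float.MaxValue, maxT = float.MinValue;
        for (int i = 0; i < s.Length; i++)
        {
            minS = Math.Min(minS, s[i]); maxS = Math.Max(maxS, s[i]);
            minT = Math.Min(minT, t[i]); maxT = Math.Max(maxT, t[i]);
        }
        // Round the bounding rectangle outwards to the 16-texel grid, then add one.
        int width = (int)(Math.Ceiling(maxS / 16) - Math.Floor(minS / 16)) + 1;
        int height = (int)(Math.Ceiling(maxT / 16) - Math.Floor(minT / 16)) + 1;
        return (width, height);
    }
}
```

For example, a face spanning s = 0..64 and t = 8..40 gives a 5 x 4 lightmap.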

It'd certainly work, but it'd probably look a bit odd (mixing and matching, that is - emulating the appearance of the software renderer on its own is very cool).

Quake II appears to contain a lump that maps 16-bit colour values to values in the palette. I don't know what that was used for, but you could probably use something similar to convert the truecolour textures to 8-bit textures.
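Such a table could be rebuilt by brute force: for every 16-bit colour, find the nearest palette entry. The sketch below assumes an RGB565 layout and plain squared RGB distance; I don't know which bit layout or distance measure Quake II's own table uses, and `InverseColourTable` is a hypothetical name.

```csharp
using System;

public static class InverseColourTable
{
    // Expands a 5- or 6-bit channel to 8 bits by replicating its high bits.
    static int Expand5(int v) => v << 3 | v >> 2;
    static int Expand6(int v) => v << 2 | v >> 4;

    // Builds a 65536-entry table mapping each RGB565 value to the nearest of 256 palette colours.
    public static byte[] Build(byte[] palette)
    {
        var table = new byte[65536];
        for (int c = 0; c < 65536; c++)
        {
            int r = Expand5(c >> 11 & 0x1F), g = Expand6(c >> 5 & 0x3F), b = Expand5(c & 0x1F);
            int best = 0, bestDistance = int.MaxValue;
            for (int i = 0; i < 256; i++)
            {
                int dr = r - palette[i * 3], dg = g - palette[i * 3 + 1], db = b - palette[i * 3 + 2];
                int distance = dr * dr + dg * dg + db * db;
                if (distance < bestDistance) { bestDistance = distance; best = i; }
            }
            table[c] = (byte)best;
        }
        return table;
    }

    // Packs 8-bit channels into RGB565 (dropping the low bits) for use as a table index.
    public static int ToRgb565(byte r, byte g, byte b) => (r >> 3) << 11 | (g >> 2) << 5 | b >> 3;
}
```

Converting a truecolour texel to 8-bit is then a single lookup: `table[ToRgb565(r, g, b)]`.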

The rest of this journal post goes off on a bit of a historical tangent.

Before Quake was released, id Software put out a deathmatch test program, QTEST. This featured the basic engine, three deathmatch levels and not a whole lot else.

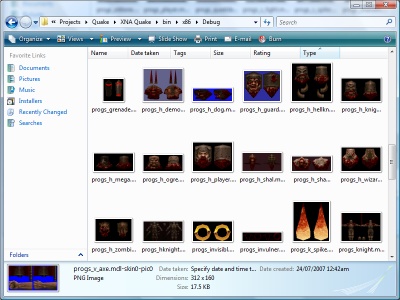

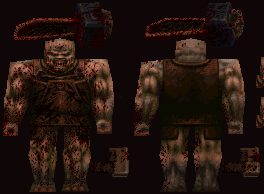

However, the PAK files contained some rather interesting files, including a variety of models - some of which were later dropped from Quake entirely!

The model version is type 3, and I've made a guess at the differences between type 3 and type 6 (6 is the version of the models in retail Quake). Type 6 has 8 more bytes after the frame count in the model header. I skip the "sync type" and "flags" fields, as I don't know what these do anyway.

Type 3 files don't have a 16 byte frame name, either (between the frame bounding box information and vertices in type 6).
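Put as arithmetic, and taking retail Quake's `mdl_t` header (84 bytes, with sync type, flags and size following the frame count) as the reference, the guess above amounts to the following. The `MdlLayout` helper is hypothetical, and the version 3 numbers are only as good as that guess.

```csharp
using System;

public static class MdlLayout
{
    // Header size in bytes. Version 6 (retail Quake): ident, version, scale[3], translate[3],
    // bounding radius, eye position[3], six counts (skins, skin width, skin height,
    // vertices, triangles, frames), then sync type, flags and size.
    // Version 3 (QTEST) is assumed to lack the 8 bytes of sync type and flags.
    public static int HeaderSize(int version) => version switch
    {
        6 => 84,
        3 => 76,
        _ => throw new NotSupportedException($"Unknown MDL version {version}."),
    };

    // Bytes in a single (non-group) frame after its 4-byte type field: two 4-byte bounding-box
    // vertices, a 16-byte name in version 6 only, then one 4-byte packed vertex per model vertex.
    public static int SimpleFrameSize(int version, int vertexCount) =>
        4 + 4 + (version >= 6 ? 16 : 0) + 4 * vertexCount;
}
```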

The most impressive extra model is progs/dragon.mdl. It appears in this early screenshot:

One character which changed design considerably was progs/shalrath.mdl.

Appearing in registered Quake, it became the following (QPed seems to mangle the palette, sorry):

The charmingly-named progs/vomitus.mdl is likewise untextured:

The frame data appears to be corrupted here, so I don't think my model loader is working properly. However, you can still get the rough idea of what progs/serpent.mdl might have looked like:

The fish model is quite different, but like the above its last few frames appear corrupted.

A number of textures changed in the final release. Some model textures, such as the grenade and nail textures, were originally much larger.

The Ogre before and after his makeover

The original 'gib' textures, eventually unidentifiable meat, were also toned down quite a lot from the rather more graphic ones in the QTEST PAKs.

The screenshots (taken directly from QTEST - I can't load those BSPs myself) show a few other changes - billboarded sprites for torches and flames were replaced with 3D models, the teleporter texture was changed and the particle effects for explosions were beefed up with billboarded sprites.

Let There Be Lightmaps

Monday, 30th July 2007

Eventually I gave up on my mod (double shotguns).

Heh, you might like the Killer Quake Pack.

I've added some primitive parsing and have now loaded the string table, instructions, functions and definitions from progs.dat, but I can't do a lot with them until I work out what the instructions are. Quake also provides a large number of predefined functions, so those will require quite a lot of porting.
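For reference, the progs.dat header is a version and CRC followed by 32-bit offset/count pairs, and each instruction (statement) is an opcode plus three 16-bit operands. The sketch below follows the `dprograms_t` and `dstatement_t` layouts from Quake's pr_comp.h as I remember them; `ProgsSketch` and its members are hypothetical, and it stops short of interpreting the opcodes.

```csharp
using System;
using System.Text;

public sealed class ProgsSketch
{
    public struct Statement { public ushort Op; public short A, B, C; }

    private readonly byte[] data;
    private readonly int ofsStatements, numStatements, ofsStrings, numStrings;
    public int Version { get; }

    static int ReadInt32(byte[] d, int o) => d[o] | d[o + 1] << 8 | d[o + 2] << 16 | d[o + 3] << 24;
    static short ReadInt16(byte[] d, int o) => (short)(d[o] | d[o + 1] << 8);

    public ProgsSketch(byte[] data)
    {
        this.data = data;
        // Header: version, crc, then (offset, count) pairs for statements, global defs,
        // field defs, functions, strings and globals, then the entity field count.
        Version = ReadInt32(data, 0);
        ofsStatements = ReadInt32(data, 8);
        numStatements = ReadInt32(data, 12);
        ofsStrings = ReadInt32(data, 40);
        numStrings = ReadInt32(data, 44); // size of the string table in bytes
    }

    public Statement GetStatement(int index)
    {
        if (index < 0 || index >= numStatements) throw new ArgumentOutOfRangeException(nameof(index));
        int o = ofsStatements + index * 8; // each statement: ushort op, short a, b, c
        return new Statement
        {
            Op = (ushort)ReadInt16(data, o),
            A = ReadInt16(data, o + 2),
            B = ReadInt16(data, o + 4),
            C = ReadInt16(data, o + 6),
        };
    }

    // Strings are referenced by byte offset into a table of null-terminated strings.
    public string GetString(int offset)
    {
        if (offset < 0 || offset >= numStrings) throw new ArgumentOutOfRangeException(nameof(offset));
        int start = ofsStrings + offset, end = start;
        while (data[end] != 0) end++;
        return Encoding.ASCII.GetString(data, start, end - start);
    }
}
```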

I seem to recall that the original Quake used "Truebright" colours (which were either the first or last 16 colours in the palette), and these colours weren't affected by lighting. How the rendering was done, I've no idea.

I haven't looked at the data structures so could be making this up entirely: Quake does lighting using a colour map (a 2D structure with colour on one axis and brightness on the other). I'm assuming, therefore, that for the fullbright colours they map to the same colour for all brightnesses, rather than fade to black.
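If that guess is right, it's easy to check: with the colour map stored as 64 rows of 256 bytes (one row per light level; this layout is an assumption here), a colour is fullbright when its column holds the same value on every row. `ColormapSketch` is a hypothetical helper.

```csharp
using System;

public static class ColormapSketch
{
    public const int Rows = 64, Columns = 256;

    // Palette index to draw for a texel of the given colour at the given light level (row).
    public static byte Shade(byte[] colormap, int lightRow, byte colour) =>
        colormap[lightRow * Columns + colour];

    // True if the colour maps to the same index at every light level, i.e. it ignores lighting.
    public static bool IsFullbright(byte[] colormap, byte colour)
    {
        byte first = colormap[colour];
        for (int row = 1; row < Rows; row++)
            if (colormap[row * Columns + colour] != first) return false;
        return true;
    }
}
```

Running `IsFullbright` over all 256 colours of the real colour map would show which indices (if any) behave this way.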

How could you simulate that? I guess that the quickest and dirtiest method would be to load each texture twice, once with the standard palette and once with the palette adjusted for the darkest brightness and use that as a luma texture. I believe Scet did some interesting and clever things with pixel shaders for DOOM, but that would end up playing merry Hell with 24-bit truecolour external textures.
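The "load it twice" approach could be sketched as below: the base texture goes through the palette as normal, while the luma texture is first remapped through the darkest row of the colour map, so (if the guess above holds) everything except the fullbright colours comes out black. It assumes a 768-byte RGB palette and a 64 x 256 colour map; which row is darkest is passed in rather than assumed, and `LumaSketch` is a hypothetical name. The luma texture would then presumably be added on top of the lightmapped base texture.

```csharp
using System;

public static class LumaSketch
{
    static uint ToArgb(byte[] palette, int index) =>
        0xFF000000u | (uint)palette[index * 3] << 16 | (uint)palette[index * 3 + 1] << 8 | palette[index * 3 + 2];

    // Produces the normal texture and a "luma" texture for an 8-bit indexed image.
    public static (uint[] Base, uint[] Luma) Split(byte[] indices, byte[] palette, byte[] colormap, int darkestRow)
    {
        var baseTexture = new uint[indices.Length];
        var lumaTexture = new uint[indices.Length];
        for (int i = 0; i < indices.Length; i++)
        {
            baseTexture[i] = ToArgb(palette, indices[i]);
            // Remap through the darkest light level: ordinary colours fade, fullbrights survive.
            lumaTexture[i] = ToArgb(palette, colormap[darkestRow * 256 + indices[i]]);
        }
        return (baseTexture, lumaTexture);
    }
}
```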

Aye, it's fun.

I think I've cracked those blasted lightmaps.