Better drawing, editing, memory and emulation for BBC BASIC on the Sega Master System

Tuesday, 3rd August 2021

I have continued to work on the Sega Master System version of BBC BASIC, and it's feeling much more like a practical version of BASIC than something that was just holding together to run one specific program!

One of the key improvements is to standardise the handling of the different graphics modes. Previously I was using a coordinate system where (0,0) is in the top left corner and all drawing operations were carried out using physical device coordinates (so the screen was treated as being 256 pixels wide and 192 pixels tall). I have now moved the origin (0,0) into the bottom left corner with the Y axis pointing up and scaled all coordinates down by a factor of 5, effectively making the screen 1280 logical units wide and 960 units tall. This isn't 100% compliant, as the BBC Micro and other versions of BASIC treat the screen as being 1024 units tall, but dividing by 5⅓ is considerably trickier and it would result in the graphics being squished further vertically, so I think using a logical resolution of 1280×960 is an acceptable compromise. I've added some VDU statements to allow you to move the graphics origin as well as define custom graphics and text viewports, so you can control where on the screen graphics and text appear and how they are clipped/scroll.
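As a rough illustration of that mapping (a Python sketch rather than the actual Z80 code; the function name, the `origin_x`/`origin_y` parameters and the rounding behaviour are assumptions made for the example), converting a logical coordinate to a device pixel could look something like this:

```python
SCREEN_WIDTH = 256     # physical pixels across
SCREEN_HEIGHT = 192    # physical pixels down
UNITS_PER_PIXEL = 5    # 1280 / 256 and 960 / 192


def logical_to_physical(x, y, origin_x=0, origin_y=0):
    """Map logical units (origin bottom left, Y up) to pixels (origin top left, Y down).

    origin_x/origin_y are a hypothetical stand-in for a user-moved graphics origin.
    Floor division is an assumption; the real routine may round differently.
    """
    px = (x + origin_x) // UNITS_PER_PIXEL
    py = (SCREEN_HEIGHT - 1) - (y + origin_y) // UNITS_PER_PIXEL
    return px, py
```

With this mapping the logical point (0,0) lands on the bottom-left pixel (0,191) and (1279,959) lands on the top-right pixel (255,0).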

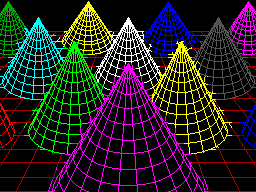

The output of the "Mandelbaum" program following these changes

I have also changed the default palette to more closely match the one used by the BBC Micro and other versions of BBC BASIC. This isn't too difficult when using the Master System's "Mode 4" as that has a user-definable palette, but the legacy TMS9918A modes have a fixed palette so I've tried to match the default palette as sensibly as I can to the TMS9918A palette. It's possible to change the logical to physical palette mappings under Master System "Mode 4" via a VDU command which writes directly to the CRAM (you can either remap one of the 16 logical entries to one of the stock 16 colours, or supply an RGB value directly to select additional colours) which allows for neat tricks like palette cycling, but the TMS9918A modes currently only let you change the current text/drawing colour, not amend the palette as that's fixed in hardware.

I've also added filled/outlined circle and axis-aligned ellipse PLOT commands using some code written by Darren "qarnos" Cubitt which I originally used in the TI-83 Plus version of BBC BASIC. This code is very capable and fully accepted 16-bit coordinates for its inputs, however it was also originally designed to output to a 96×64 pixel screen so the final plotting was done with 8-bit coordinates ranging from -128..127. Fortunately the Master System's screen also fits in 8-bit coordinates at 256 pixels wide but that's not quite enough information as you also need to be able to tell if a particular point is off-screen (less than zero or greater than 255); simply clipping it against those boundaries will result in a vertical smear on the left or right edge of the screen when drawing outlines. Fortunately I was able to figure out how to modify his code to add some extra clipping status flags to ensure that ellipses were clipped and displayed correctly on any part of the screen.

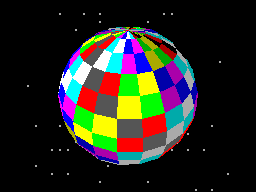

Filled circles and filled/outlined ellipses make these drawings possible

The only graphics operation exposed by the mode-specific drivers before was a simple "set pixel" routine. This is fine for lines but quite slow for filling shapes so graphics mode drivers can now supply a "fill horizontal span" routine for faster shape-filling. If the routine is left unimplemented a stub routine is provided that fills the span using the "set pixel" routine.
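The fallback arrangement can be sketched in Python as follows. This is only a model of the idea: the class and method names are invented for the example, and a set of coordinates stands in for video memory.

```python
class ModeDriver:
    """Toy graphics-mode driver; `pixels` stands in for video memory."""

    def __init__(self, width=256, height=192):
        self.width, self.height = width, height
        self.pixels = set()
        self.set_pixel_calls = 0

    def set_pixel(self, x, y):
        self.set_pixel_calls += 1
        if 0 <= x < self.width and 0 <= y < self.height:
            self.pixels.add((x, y))

    def fill_span(self, x0, x1, y):
        """Default stub: fill the span one pixel at a time via set_pixel."""
        if x0 > x1:
            x0, x1 = x1, x0
        for x in range(x0, x1 + 1):
            self.set_pixel(x, y)


class FastSpanDriver(ModeDriver):
    """A driver that supplies its own span routine: clip once, then fill the run."""

    def fill_span(self, x0, x1, y):
        if x0 > x1:
            x0, x1 = x1, x0
        if not 0 <= y < self.height:
            return
        x0, x1 = max(x0, 0), min(x1, self.width - 1)
        self.pixels.update((x, y) for x in range(x0, x1 + 1))
```

Both drivers end up with the same pixels set, but the second does its bounds checking once per span instead of once per pixel, which is where the saving would come from.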

I also added a rectangle-filling PLOT command, which is perhaps not the most exciting-sounding graphics operation but it is used to clear the screen so it is at least useful. More interesting is a triangle-filling routine, something I've never enjoyed writing!

Usually I get very bogged down in the idea that the pixels affected when you fill a triangle should exactly fit within the pixels that are outlined by drawing lines between each of the triangle's points, no more and no less. This can be a bit difficult when the triangle is filled by tracing its edges from top to bottom and drawing horizontal spans between them. If the edge is "steep" (its overall height is greater than or equal to its width) then this isn't too bad, as there's only one X coordinate for each Y coordinate where a pixel would have been plotted. However, when the edge is "shallow" (its overall width is greater than its height) there are going to be certain Y coordinates where the line drawn would have had multiple pixels plotted. In that case, where is the boundary of the horizontal span?

The cop-out answer I've used in the past has been to set up three buffers the total height of the screen and to "draw" the three lines first using the same line-drawing algorithm as the line PLOTting command, keeping track of the minimum and maximum X coordinate for each Y coordinate. When it's time to fill the triangle the minimum and maximum X coordinate for each edge can be determined based on the current Y coordinate and a span drawn between them for perfect triangles. On the TI-83 Plus this takes up four bytes per line (minimum and maximum 16-bit values) for a 64 pixel tall screen, with three buffers for the three lines that comes to 4×64×3=768 bytes, pretty bad. On the Sega Master System that would be 4×192×3=2304 bytes, totally unacceptable on a machine with only 8KB total work RAM!
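To make the buffer-based approach concrete, here is a simplified Python sketch. It is an assumption-laden variant rather than the original code: it uses a textbook Bresenham line and folds the three per-edge buffers into a single minimum/maximum pair per scanline, which gives the same spans for a triangle. `buffer_bytes` just reproduces the memory arithmetic above.

```python
def line_pixels(x0, y0, x1, y1):
    """Yield every pixel of a Bresenham line from (x0, y0) to (x1, y1)."""
    dx, dy = abs(x1 - x0), -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1
    sy = 1 if y0 < y1 else -1
    err = dx + dy
    while True:
        yield x0, y0
        if x0 == x1 and y0 == y1:
            return
        e2 = 2 * err
        if e2 >= dy:
            err += dy
            x0 += sx
        if e2 <= dx:
            err += dx
            y0 += sy


def triangle_spans(p0, p1, p2, height=192):
    """Trace the three edges, then return (y, min_x, max_x) for each covered row."""
    lo = [None] * height
    hi = [None] * height
    for (ax, ay), (bx, by) in ((p0, p1), (p1, p2), (p2, p0)):
        for x, y in line_pixels(ax, ay, bx, by):
            if 0 <= y < height:
                lo[y] = x if lo[y] is None else min(lo[y], x)
                hi[y] = x if hi[y] is None else max(hi[y], x)
    return [(y, lo[y], hi[y]) for y in range(height) if lo[y] is not None]


def buffer_bytes(height, edges=3, bytes_per_row=4):
    """Memory for per-edge buffers: two 16-bit values per row, per edge."""
    return bytes_per_row * height * edges
```

Because the spans are derived from the very same pixels the line routine plots, the fill always covers the outline exactly; the price is the buffer memory.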

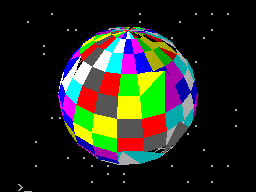

Each face of this 3D sphere is filled by two triangles.

I've instead simply done my best to interpolate from one X coordinate to the other when working my way down the triangle and filling scanlines, doing a bit of extra pre-incrementing and fudging of initial error values depending on whether it's the top half or bottom half of the triangle. My test was to draw the outline of a triangle in bright white and then to fill a dark blue triangle over the top, if any bright white pixels were visible around the outside this indicated a gap. I mostly got it filled, but I then tried my test program on a BBC Micro emulator and found the BBC Micro exhibited similar gaps so I don't think I'm doing too badly! The above screenshot of the 3D sphere was rendered using this triangle-filling code.

I've also been working on improving the line editor. This is called by BASIC when it's asking for a line of text input from you. Previously I'd only implemented adding characters to the end of the line and pressing backspace to remove characters from the end of the line; if you'd typed in a long piece of code and made a mistake at the start you'd need to backspace all the way to the mistake, correct it, then re-type the rest of the line. Now you can use the cursor keys (or home/end) to move around within the line, insert or overwrite new characters (toggled by pressing the insert key on the keyboard) at the cursor position or backspace/delete characters mid-line as required. It sounds like a small thing but it was quite a lot of code to get right and makes a big difference for usability!

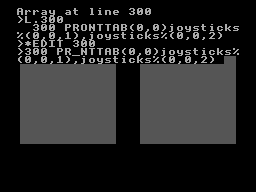

Another feature I've added to aid modifying lines of code is the *EDIT command. This takes a line number as an argument and then brings up the program line you've requested in the line editor, ready for editing. The way this works is a bit sneaky, as it's not natively implemented by BBC BASIC! The trick that makes it work is the ability to override two routines, OSLINE (which BASIC calls when it wants to display the line editor) and OSWRCH (which BASIC calls when it wants to output a character).

When a valid *EDIT <line> command is entered, OSLINE is overridden with the first custom routine. This routine doesn't ask for a line of input, but instead copies L.<line> to the input buffer, overrides OSWRCH with a routine that captures data to RAM, overrides OSLINE with a second custom routine, then returns. BASIC therefore thinks you've typed in a LIST statement so it dutifully starts outputting the line via OSWRCH, but this has been overridden to write the characters to RAM rather than the screen. When it's done this BASIC then calls OSLINE again for the next line of input, which brings up the second custom OSLINE handler. This pulls the data from RAM previously captured by the OSWRCH handler, copies it to the OSLINE buffer, and displays it on-screen as if you'd just typed it in. It then restores the original OSLINE and OSWRCH handlers before jumping back into the stock OSLINE routine, so you can continue editing the line that you'd requested via *EDIT.
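The hook-swapping sequence can be modelled with a small Python toy. Everything here is a stand-in made up for the illustration: the `Host` class, the `basic_prompt` loop that plays the part of BASIC, and the exact format of the listed line are all assumptions, and the real implementation is Z80 code patching vectors.

```python
class Host:
    def __init__(self, program):
        self.program = program      # line number -> text (stands in for BASIC's program)
        self.screen = []            # characters sent to the display
        self.keyboard = []          # lines the user will "type"
        self.osline = self.stock_osline
        self.oswrch = self.stock_oswrch

    def stock_oswrch(self, ch):
        self.screen.append(ch)

    def stock_osline(self, preload=""):
        # A real line editor would let the user edit `preload`; this toy
        # returns the next typed line, or the preloaded text unchanged.
        return self.keyboard.pop(0) if self.keyboard else preload

    def star_edit(self, line_no):
        captured = []

        def second_osline():
            text = "".join(captured).strip()
            # Restore the stock handlers before handing over to the editor.
            self.osline, self.oswrch = self.stock_osline, self.stock_oswrch
            for ch in text:
                self.oswrch(ch)     # show the line as if it had been typed
            return self.stock_osline(preload=text)

        def first_osline():
            self.oswrch = captured.append   # capture LIST output to "RAM"
            self.osline = second_osline
            return "L.%d" % line_no         # pretend the user typed LIST

        self.osline = first_osline

    def basic_prompt(self):
        """One pass of a toy immediate-mode loop standing in for BASIC."""
        command = self.osline()
        if command.startswith("*EDIT"):
            self.star_edit(int(command.split()[1]))
        elif command.startswith("L."):
            n = int(command[2:])
            for ch in "%5d %s\r\n" % (n, self.program[n]):
                self.oswrch(ch)
        return command
```

Calling `basic_prompt` three times after queuing `*EDIT 10` walks through the three stages: the command itself, the faked `L.10`, and finally the editor pre-filled with the captured line.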

All of this hopefully makes entering programs via the keyboard less cumbersome. Of course, not having to type in programs in full every time you wanted to run them would be even better, and an attempt to give BASIC more memory provides another way to load programs in a somewhat roundabout manner.

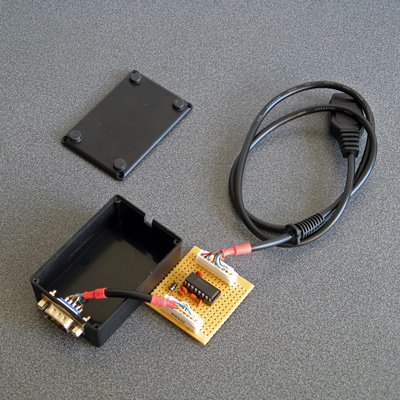

The photograph above shows the modified Monopoly cartridge that I'm now using to test BBC BASIC on real hardware instead of the modified After Burner cartridge I was using before. The advantage of Monopoly is that it has an additional 8KB RAM on board, which is used to save games in progress. Sega's cartridge mapper allows for on-cartridge RAM to be mapped into the address range $8000..$BFFF, immediately below the main work RAM which is at $C000..$DFFF. If present, then, BASIC's memory range (which runs from PAGE to HIMEM) can be extended by enabling the save RAM and moving PAGE down.

$8000..$BFFF is a 16KB range, though, and I mentioned that Monopoly has an 8KB RAM. This is true, and what it means is that the 8KB cartridge RAM is accessible from $8000..$9FFF but is then repeated ("mirrored") from $A000..$BFFF. The cartridge RAM detection therefore has to check two things: firstly that there is RAM in the first place (which can be verified by modifying memory at $8000 and seeing if those values stick) and how big it is (which can be checked by writing to $8000 and seeing if that has also modified the data at $8000+<RAM size>). Once presence of any RAM has been determined during startup, RAM mirroring is checked in 1KB, 2KB, 4KB and 8KB offsets. If any RAM mirroring is detected, then it's assumed the RAM is the size that was being checked at the time, however if not it's assumed that the RAM is the full 16KB. At this point, PAGE (which is $C000 in a stock machine with no cartridge RAM) is moved backwards by the size of the detected cartridge RAM, e.g. to $A000 for an 8KB RAM and $8000 for a 16KB RAM. This results in 16KB or 24KB total available to BBC BASIC, a considerable upgrade from the plain 8KB work RAM!
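The detection logic can be sketched in Python against a simulated cartridge. The `CartRam` class, the test patterns and the save/restore of the probed bytes are assumptions for the sake of the example, not a transcription of the real startup code.

```python
class CartRam:
    """Simulated $8000..$BFFF window backed by `size` bytes of RAM (0 = none)."""

    def __init__(self, size):
        self.size = size
        self.data = bytearray(size)

    def read(self, addr):
        if self.size == 0:
            return 0xFF                       # open bus: writes never stick
        return self.data[(addr - 0x8000) % self.size]

    def write(self, addr, value):
        if self.size:
            self.data[(addr - 0x8000) % self.size] = value & 0xFF


def detect_cart_ram(bus, base=0x8000):
    """Return the detected RAM size in bytes (0 if no RAM is present)."""
    saved = bus.read(base)
    for pattern in (0x55, 0xAA):              # do written values stick?
        bus.write(base, pattern)
        if bus.read(base) != pattern:
            return 0
    bus.write(base, saved)

    for size in (0x0400, 0x0800, 0x1000, 0x2000):   # 1KB, 2KB, 4KB, 8KB
        saved_far, saved_base = bus.read(base + size), bus.read(base)
        bus.write(base + size, 0x00)
        bus.write(base, 0xA5)
        mirrored = bus.read(base + size) == 0xA5
        bus.write(base + size, saved_far)     # put the original contents back
        bus.write(base, saved_base)
        if mirrored:
            return size
    return 0x4000                             # no mirror found: assume full 16KB


def page_for(ram_size):
    """PAGE moves down from $C000 by the size of the cartridge RAM."""
    return 0xC000 - ram_size
```

For an 8KB cartridge this yields a size of $2000 and a PAGE of $A000, and for a 16KB one $4000 and $8000, matching the figures above.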

An added bonus of this is that when you type in programs they grow upwards in memory from PAGE. As PAGE now starts within your cartridge memory, and that cartridge memory retains its contents courtesy of a backup battery, it means that if your entered program is smaller than the size of your cartridge memory you can restore it when you switch the console back on by typing OLD.

That's very well for life on real hardware, but I continue to do most of my development testing in an emulator. I'm having to use my own emulator as there aren't any other Master System emulators that also include a PS/2 keyboard emulator, but I did end up running into a very weird bug. Certain trigonometric functions in BBC BASIC were producing very wrong values, resulting in very odd-looking output.

These shapes are on the wonk

Even though the Z80 emulator at the heart of the program passed ZEXDOC (an instruction tester that checks documented functionality) I remembered that it had failed some aspect of ZEXALL which checks the undocumented flags too. I re-ran ZEXALL and found the problem was with my implementation of the bit instruction, so I worked on fixing that including emulation of the Z80's internal temporary memptr/WZ register to ensure that bit n,(hl) set bits 3 and 5 of the flag register appropriately. ZEXALL now passes, but unsurprisingly it didn't fix my problem (as I didn't really think that BBC BASIC would rely on undocumented functionality!)

I ended up isolating certain exact values that when plugged into SIN() or COS() would produce incorrect results. I then dug out my old CP/M emulator and tried plugging those values into the generic CP/M version of BBC BASIC (which has none of my code in it!) and that produced the same, incorrect, results confirming that the issue was definitely in my Z80 emulation and not in some flaw of the BBC BASIC port.

After tracing through the code and dumping out debug values at certain points and seeing where it differed to a known good reference emulator I found that the fault occurred in FMUL, and from there I found that at some point the carry flag (which is either rotated or added to registers with RR or ADC instructions) was being lost. RR looked fine but digging into my implementation for the 16-bit ADC I found the culprit: the code is the same for ADD and ADC, but in ADC if the carry flag is set then the second operand is incremented by one first. This produces the correct numeric answer, but if the second operand was $FFFF on entry to the function then adding a carry of 1 to it would cause it to overflow back to 0. As this happens before any of the rest of the calculations are made it means that the final value of the carry flag was calculated based on op1+0 instead of op1+$10000, hence the loss of the carry flag.
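Reduced to its essentials, the bug looks something like the Python sketch below. The function names are invented for the example and only the carry flag is modelled; the other flags that a real 16-bit ADC updates are left out.

```python
def adc16_buggy(op1, op2, carry_in):
    """Fold the carry into op2 first: numerically right, but the carry out can be lost."""
    if carry_in:
        op2 = (op2 + 1) & 0xFFFF    # $FFFF + 1 wraps to 0 here
    total = op1 + op2
    return total & 0xFFFF, total > 0xFFFF


def adc16_fixed(op1, op2, carry_in):
    """Add all three terms at full width before deriving the carry out."""
    total = op1 + op2 + (1 if carry_in else 0)
    return total & 0xFFFF, total > 0xFFFF
```

With `op1 = 0x1234`, `op2 = 0xFFFF` and the carry set, both versions return `0x1234` as the result, but only the fixed one reports a carry out.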

Fortunately, fixing this fixed the wonky output demonstrated above and I feel slightly more confident in my emulation! Now I can continue working on BBC BASIC, I've had an idea for a screen mode that has a reduced number of colours but will hopefully let you draw anywhere on the screen without running out of tiles causing odd corruption (as happens in the usual 16-colour mode 4) and without attribute clash (as happens in the TMS9918A Graphics II mode)...

Running BBC BASIC on the Sega Master System

Thursday, 22nd July 2021

I've recently been spending some time finding a way to run BBC BASIC on the Sega Master System, inspired by BASIC Month 6: The Mandelbaum Set on the RetroBattlestations Reddit community.

When this month's program was first announced I tried running it on my only unarguably retrobattlestation, my Cambridge Z88, but the screen's low (vertical) resolution didn't do the program much justice.

I thought this gave me two options:

- Control some external piece of retro tech to produce higher-resolution output (e.g. a printer or a plotter).

- Port a BASIC interpreter to another retro system with a higher resolution display and run the program on that.

Unfortunately, I don't own any old printers or plotters (or a Logo-like turtle!) so option 2 seemed my best option. I had some experience adapting Richard Russell's BBC BASIC (Z80) to run on the TI-83 Plus calculator so I thought I should pick the Sega Master System as that also has a Z80 CPU in it. Here are some rough specs:

- 3.58(ish)MHz Z80 CPU.

- 8KB work RAM.

- TMS9918A-derived VDP for video with 16KB dedicated VRAM accessed via I/O port.

- SN76489-derived PSG for sound.

- Two controller ports with six input pins and two pins that could be configured as inputs or outputs.

- Software loaded from ROM cartridge or card slot with a small BIOS ROM that detects whether a cartridge or card is inserted (and if not, runs its own built-in game).

There is some precedent for this endeavour in the computer version of Sega's SG-1000, the SC-3000, which came with a keyboard and had BASIC ROM cartridges.

I wanted to try to keep this project as retro as possible, so no modern microcontrollers as I've been accused of cheating by using them in the past. I did have to make a couple of adaptors to allow me to plug in a keyboard and to give the Master System a serial port to load or save programs over – more about these later!

Loading BASIC onto the Master System

The first problem was getting BASIC onto the Master System at all. As my Master System doesn't have BASIC in ROM (it comes with Hang-On, which is perhaps more fun but less likely to handle the Mandelbaum set) I'd need to load the program onto a cartridge or card. Master System cartridges usually contain a mapper circuit to handle bank switching in the lower 48KB of the Z80's address space (the upper 16KB contains the 8KB work RAM, appearing twice) which is normally integrated directly into the ROM chip for the game, however there are a handful of games that have separate mapper chips and ROM chips and the ROM chips that Sega used have a pinout that is extremely close to that used by common EEPROMs (e.g. 29F010, 49F040) with only a couple of pins needing to be swapped around. After Burner is one such cartridge, so I've modified an old copy to let me plug in flash memory chips that I can program with the version of BASIC I'm working on. A switch at the top of the cartridge lets me switch back to the original pin configuration if I want to play the original copy of After Burner.

Typing commands into BASIC

With a flashable cartridge to hand I was able to assemble a version of Richard Russell's BBC BASIC (Z80) with some simple code stubs in place to direct text output to the screen. Output is useful but only half the story: we still need to be able to send commands to BASIC!

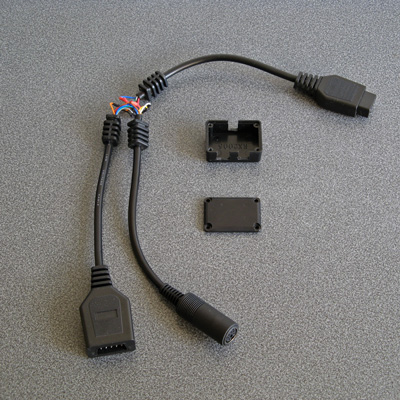

The Master System doesn't have its own keyboard, so I'd need to find some way to interface one to it. I have previously used PS/2 keyboards in a number of projects, as they are pretty simple to deal with. Electrically, they use two open collector I/O lines for bidirectional data transfer (one as clock, one as data), and fortunately each controller port of the Master System has two pins that can be configured as outputs which can therefore be used to interface with a keyboard (either left as inputs and pulled weakly high in their idle state, or driven low as outputs for their active state).

The adaptor acts as a pass-through cable so you can still have a regular controller plugged in when using the keyboard.

The two pins on the Master System control port that can act as outputs are TH and TR; TH is normally used by light guns to latch the horizontal counter in the video chip so is unused with normal controllers so it's no great loss here, but TR is the right action button (marked "2") so by using this pass-through adaptor you do unfortunately lose one of the controller buttons. However, you do gain a hundred or so keyboard keys, so I don't think it's too bad a compromise...

For the software side of things I adapted my Emerson AT device library, previously written for the TI-83 Plus calculators, to the Sega Master System hardware. This library handles the low-level AT device protocol and also translates the raw keyboard scancode values to the corresponding characters.

Saving and loading programs

At this point I was able to type in BASIC programs and run them on the Master System, which was pretty neat! However, I was still working on adding new features (e.g. drawing commands for graphics) and having to type in the entire Mandelbaum program after every change was going to get pretty exhausting. I could bake it into the ROM but that seemed like cheating, so I thought I should try to find a way to load the file from an external source. A floppy disk or tape cassette would seem authentically retro but adding a floppy drive controller to the Master System would be a fairly complicated task and I don't have a suitable data cassette recorder to even attempt loading from tape so I thought some sort of file store accessible over a serial port would be a good option.

The Z88 has a serial port and can act as a remotely-controlled file store when running the PC Link software (with the protocol documented here). This seemed like a good choice, if not for the fact that the Sega Master System doesn't have a serial port of its own. To get around this, I added one, using a MAX232 chip to adapt the Master System's 5V logic levels to RS-232 compatible ones so I could plug in a null modem cable from the Z88 or my PC without accidentally frying the Master System with -12V.

I wrote some code that bit-bangs the serial data over the controller port lines using the timing loop code I wrote for a previous BASIC Month (Crisps Tunes) to support rates between 19200 and 300 baud. 19200 baud is somewhat unreliable but that's OK because the Z88's 19200 baud is unreliable too, so the default 9600 baud speed does a good job. RTS/CTS handshaking has to be implemented, as there is no hardware serial support on the Master System and it needs to be actively polling the port to receive any data. In doing so I noticed one awkward fact about my PC's serial port - if you de-assert RTS it will continue sending data until its buffer is empty, presumably only checking the RTS line when it's about to top up the buffer. In practice this means that even if I change RTS virtually as soon as the start bit for the first byte is received, the PC will continue to send up to 256 bytes before stopping. To get around this I added a serial receive buffer that immediately checks for the next byte even after asserting RTS, and this seems to have done the trick.

The protocol used by PC Link requires acknowledgement after every single byte so is very slow but at least it's reliable. I plumbed the PC Link code into BASIC's LOAD and SAVE which makes loading and saving programs as transparent and easy as if you had a floppy disk in the system instead!

Pressed for size

The Master System has 8KB of RAM. Of this, 16 bytes are mapped to special hardware functions and BBC BASIC reserves 768 bytes for itself, so we're already down to 7,408 bytes. I initially reserved 256 bytes for my own needs (display settings, VDU command buffer, serial port status, keyboard status etc) bringing it down to 7,152 bytes. The 16KB of display memory is not directly accessible to the CPU, so it can't be used for additional work RAM, and having to access it indirectly via I/O ports is very slow but I can't afford to mirror parts of it in RAM for speed.

Initially the Mandelbaum program ran well enough by stripping out comments, but I then added sound support (with eight 13-byte envelope definitions, and four channels with their own state, copy of their active envelope and a command queue for 32 bytes per channel, adding around another 140 bytes of memory usage) and the program stopped running with a "No room" error during execution (performing a square root operation, of all things!) so I guess the tolerances were very tight. I went and combined more lines of code into single lines and replaced two-letter variable names with single-letter ones (no, really) and it was able to run again but I don't think 8KB is a particularly comfortable amount of RAM for a BASIC computer!

Further design considerations

The version of the BASIC host interface used here is very much a work-in-progress. I would need to extend it considerably to be useful, including:

- Fuller support of different VDU commands, e.g. redefining character shapes, changing the text and graphics viewports, better colour handling (differentiating between logical palettes and physical palettes).

- Better support of other modes (so far only TMS9918A "Text" and "Graphics II" modes are used, there is a Master System-specific "mode 4" but that lacks graphics support and only takes advantage of hardware scrolling for extremely fast program LISTing).

- Implementation of more graphics commands - so far only PLOT 4 (MOVE) and PLOT 5 (DRAW) are implemented. They also use a non-standard coordinate system with (0,0) in the top left of the screen and a screen resolution of 256x192, whereas for standardisation with other BBC BASIC implementations this should move (0,0) to the bottom left and use a logical resolution of 1280x1024 or similar.

- Support of other file systems rather than rely on a Z88 running PC Link, e.g. using I²C EEPROMs (as they use two open collector pins, so could be plugged into a controller port via a passive adaptor).

- Support of extra RAM, either integrated directly on a custom cartridge or using the battery-backed SRAM supported by some other cartridge types.

- Native controller support via BASIC ADVAL command (at the moment you can access the controller ports directly with GET(&DC))

This is before even getting into adding anything machine-specific (e.g. to take advantage of scrolling tilemaps or hardware sprites), but getting the BASICs down (and consistent!) is a very important starting point. Consistency is useful, after all I was able to get the original program running under BBC BASIC with very minimal changes to it.

But, in the short term, I have at least succeeded in what I set out to do, which was to run the Mandelbaum program on a retro "computer" wholly unsuited to it!

The above video provides another demonstration of the setup – playing the Cold Tea music demo, albeit with a heavily stripped-down version of the visuals as the Master System doesn't have anything that can match the capabilities of the BBC Micro's teletext mode 7.

Migrating SVN repositories to GitHub, maintaining history even on addition/removal of a trunk folder

Friday, 16th July 2021

Years ago I had a few projects hosted in Google Code's SVN repositories, but Google closed Code down in 2015 and though I backed the files up locally via svnsync to carry on working on the projects the code was never made publicly available again.

I decided it would be a good idea to move these repositories to GitHub, but quickly ran into the problem that during the initial migration from my local repository to Google Code's repository I had been forced to move all of the code into a trunk folder (I do not generally use SVN's branching or tagging features) and attempting to synchronise from SVN to Git lost all revision history prior to that change.

After scouring the Internet and many dead ends I came up with the below solution which has worked for me. As far as I can tell the Git tools used are designed for regular synchronisation of repositories and not a one-shot bulk migration. There are separate tools that claim to do a better job with less effort but getting them up and running on my Windows system probably takes longer than figuring out how to do it with Git's own tools...

First of all, you'll need to create a user list that maps your SVN user name(s) (left) to your name and email address (right) used on GitHub, like this:

    Ben = Ben Ryves <benryves@benryves.com>
    Benjamin = Ben Ryves <benryves@benryves.com>
    benryves = Ben Ryves <benryves@benryves.com>
    benryves@benryves.com = Ben Ryves <benryves@benryves.com>

Save this list in a text file in your current directory (e.g. users.txt). Next you'll need to find the revision number where the move to the trunk folder happened, e.g. by checking the SVN logs. For the sake of this example, let's pretend it was in revision 100. You can now use git svn clone to create a temp copy of the SVN repository. To continue the example, suppose the SVN repository was backed up to D:\SVN\Google\brass-assembler, then the command would look like this:

git svn clone --no-metadata --authors-file=users.txt -r 1:99 file:///D/SVN/Google/brass-assembler temp

There are some notable differences from the usual here, which I'll try to explain:

- There is no --stdlayout parameter. This is because this makes the assumption that the repository follows the conventional trunk/tag/branch folder used in some SVN repositories, but this repository doesn't make use of such a structure (at least until revision 100!)

- The -r 1:99 argument limits the operation to be between the revisions 1 and 99. This is when all the files were in the root of the repository, before they were moved in revision 100.

- The SVN path looks like it's missing a colon, but this is intentional – at least on Windows the Git tools fail if the path contains a colon like it would when using the SVN tools for local paths, so remove that colon.

This may take a while to get started but eventually it should start synchronising the SVN repository to the new Git one in the temp folder up to (and including) the last revision where everything was in the root (99).

Once that has happened, you can perform the trick that makes this work. Open the file temp\.git\config in a text editor and in the [svn-remote "svn"] section you should find the line fetch = :refs/remotes/git-svn. As revisions after this point were moved into a trunk directory, we need to change this to fetch from trunk instead, so change this line to fetch = trunk:refs/remotes/git-svn:

    [svn-remote "svn"]
        noMetadata = 1
        url = file:///D/SVN/Google/brass-assembler
        fetch = trunk:refs/remotes/git-svn
    [svn]
        authorsfile = C:/Users/Ben/users.txt

Save the file, and then change your current working directory to your temporary repository, fetch the revisions from after the one that moved everything to trunk up to HEAD, then merge the changes (without the merge you'll only see up to the previously-cloned revision 99):

    cd temp
    git svn fetch -r 101:HEAD
    git merge remotes/git-svn

At this point you can clone the temporary repository into a final one, so go up a level, clone the temp repository and delete it:

    cd ..
    git clone temp Brass3
    rmdir /s /q temp

Finally, you can push this local repository to GitHub. When you add a new repository GitHub will provide a clone URL (in the form https://github.com/<username>/<reponame>.git) so change the remote origin for the local repository that was just created and push your changes:

    cd Brass3
    git remote rm origin
    git remote add origin https://github.com/benryves/Brass3.git
    git push origin master

Once that has completed you should be able to view your code on the GitHub site with its full history. The last thing I do is to delete the local Git repository and clone again from the remote one on GitHub using the GitHub Desktop program as I am more comfortable using a GUI tool to keep an eye on changes (and I find I'm less likely to mess something up by accidentally mistyping something!)

In case you couldn't tell from the above examples I've also been looking at the source code for my old Brass 3 assembler project. I've been working on a little Z80 assembly project: running BBC BASIC on the Sega Master System, which has involved a lot of my old projects – Brass 3 to assemble the code, Cogwheel to test it, Emerson to handle PS/2 keyboard input and of course my experiences with running BBC BASIC on the TI-83 Plus calculator.

One thing I realised during all this was that Brass 3 had some problems running on 64-bit versions of Windows – the help application is completely non-functional, for example, and crashes silently to desktop. I dug into the code to fix it, only to find out that I'd already done so two years ago, and even got as far as rebuilding the installer package but then just forgot to upload it to the Internet. So, I'm very sorry for the delay, but I have now uploaded "Beta 14" to the Brass 3 page.

Making your own Dreamcast MIDI Interface Cable

Saturday, 1st May 2021

I love an unusual accessory for a video game console or computer, and one such accessory is the Dreamcast MIDI Interface Cable, HKT-9200, which allows you to connect MIDI devices to the Dreamcast console's serial port. Only released in Japan and with only one piece of software released for it – the O・to・i・re (お・と・い・れ) sequencer – these are a somewhat hard to find accessory nowadays and prices for second-hand units are far beyond what I could hope to afford (at the time of writing there are two on eBay, both for over £300).

Fortunately, the user darcagn on the Obscure Gamers forum took some photos of the insides of the interface box and from that I could make a pretty good guess as to how the cable works.

MIDI uses a serial protocol running at 31.25Kbps (a speed that can be easily derived by dividing a 1MHz clock by 32). Rather than signal "0" or "1" bits with different voltage levels (as with a PC's RS-232 serial ports, for example, which commonly use +12V for a "0" and -12V for a "1") it uses a current loop, with 5mA current on for a "0" and current switched off for a "1". To avoid ground loops, which are a big concern when working with audio as they can introduce interference (e.g. a mains hum) on recordings, the two connected devices are electrically isolated with an optoisolator in the receiver.
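As a rough illustration of those numbers, here is a minimal Python sketch that derives the baud rate from a 1MHz clock and shows how a single byte would look on the wire. It assumes the usual serial framing of one start bit, eight data bits (least-significant bit first) and one stop bit; the function names are made up for this example.

```python
CLOCK_HZ = 1_000_000   # 1MHz reference clock
DIVISOR = 32

BAUD = CLOCK_HZ // DIVISOR                          # bits per second
BITS_PER_FRAME = 10                                 # assumed: start + 8 data + stop
FRAME_TIME_US = BITS_PER_FRAME * 1_000_000 / BAUD   # microseconds per byte


def frame_byte(value):
    """Return the logical bits of one serial frame in transmission order."""
    data_bits = [(value >> i) & 1 for i in range(8)]  # least-significant bit first
    return [0] + data_bits + [1]                      # start bit, data bits, stop bit


def current_on(bit):
    """MIDI current loop: current flows for a 0 and is switched off for a 1."""
    return bit == 0


frame = frame_byte(0x90)  # 0x90 is a Note On status byte for channel 1
print(BAUD, "baud,", FRAME_TIME_US, "microseconds per byte")
print(frame)
print(["on" if current_on(bit) else "off" for bit in frame])
```

Note that the line idles at "1", which means no current flows through the optoisolator's LED when nothing is being sent.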

At the very least I therefore expected to see some sort of optoisolator circuit on the adaptor's MIDI IN port and some sort of output buffer circuit on the adaptor's MIDI OUT port to convert between MIDI's current loop signalling and the Dreamcast's 3.3V logic on its serial I/O pins, and that is indeed what you can see from darcagn's photos. My worry was that there might be some additional Dreamcast-specific hardware inside the box, but fortunately there isn't – it's all off-the-shelf parts. My main concern was how everything was connected, as this can't be completely seen from the photos: would sending MIDI IN data to the console's serial port RX and relaying data from the console's serial port TX to MIDI OUT be enough? Some experimentation would be necessary!

Building a serial port connector

As mentioned above, the MIDI interface cable's box doesn't contain anything Dreamcast-specific, however this box is connected to the Dreamcast's serial port using a proprietary connector. To try anything out I'd need to find a way to connect a circuit to this port:

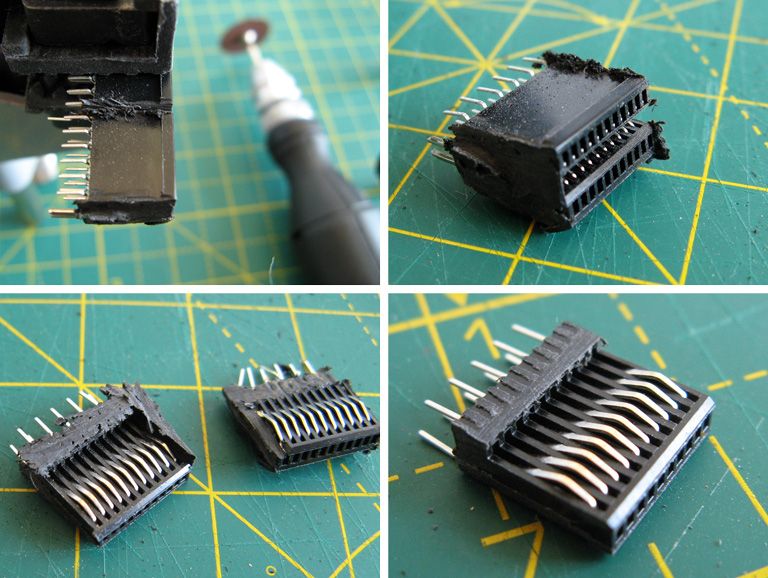

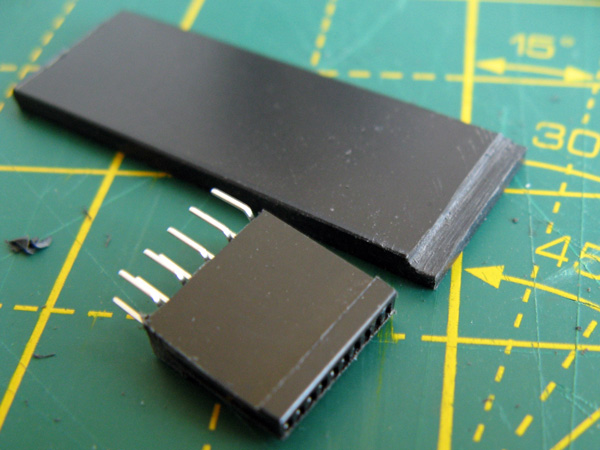

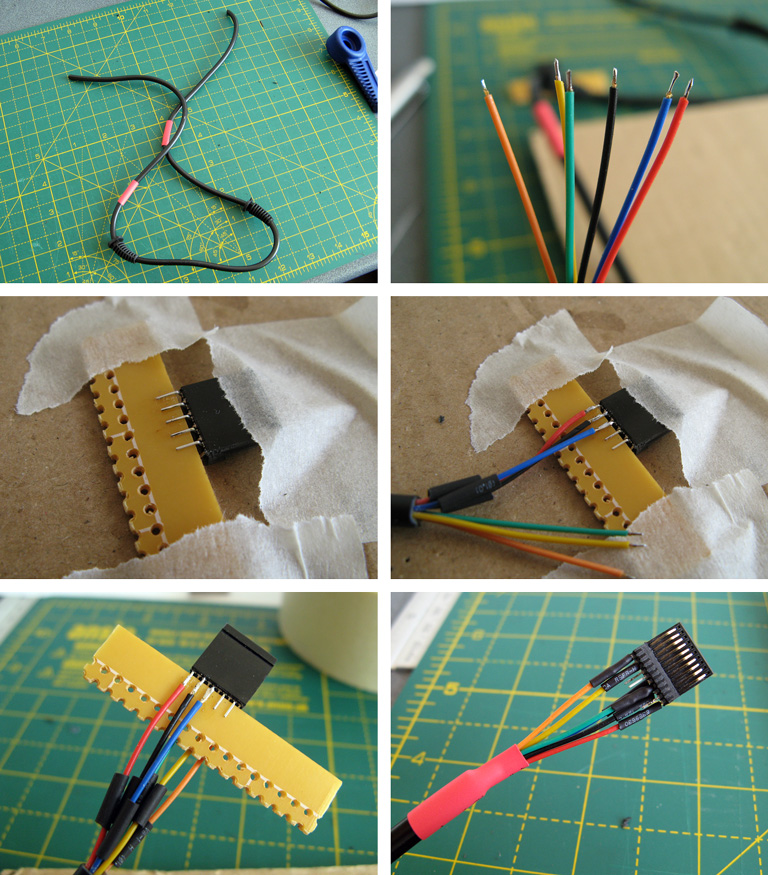

Fortunately, the port's contact pitch is the same as a PCI Express slot, and I was able to find a PCI Express slot for £5 which could be used to make multiple connectors! It will need to be cut down to size (and in half, as the Dreamcast's serial port only has contacts on one side rather than both sides of a PCI Express card) but with a bit of work will do the job.

The above photos show the process of cutting the PCI Express slot down to size. The Dreamcast serial port has 10 connectors in it, so a block is cut that is 12 connectors long using a cutting disc – as this is quite a rough process an extra sacrificial connector is left on each end as it doesn't matter if this gets mangled by the cutter. The block is then cut in half, leaving more of the support structure from the bottom of the slot on the side of the slot we're going to be using. The outer two connectors are then removed if they haven't already been damaged, leaving the central 10 connectors, and the outer plastic is brought to the final width and tidied up with some hand files. The fit of the connector should be tested against the Dreamcast's serial port:

There shouldn't be too much side-to-side movement but the connector will be very loose without something to hold it down against the contacts. In my case I found some 2mm thick ABS plastic sheet was the perfect material to make the backing piece for the connector, though you may find your choice of material depends on the thickness of your PCI Express slot. It will need to be 13.5mm wide (about ½") and a decent enough length to fit inside the enclosure you're going to use for the plug – in my case 4cm was about right. The plastic can be cut by scoring it with a knife and then snapping it over the edge of a table.

You should also drill some shallow holes in the plastic, with the centres of the holes being 3mm from the end and 3mm from the sides. The serial port has a couple of bumps stamped into the metal surround of the serial port and these matching holes in the plastic piece allow it to snap into place. A stripboard track-cutting drill is perfect for this task!

In my case the PCI Express slot also has a slight lip that prevents it from sitting flush against the backing support. I could have filed this flat, but the connector is quite fragile and I didn't want to risk damaging it, so I ended up filing a corresponding channel into the bottom of the backing support piece instead.

At this point make sure that everything fits. If it does you can start wiring up! The photos below show the process – heat-shrink tubing and strain reliefs are very useful, so don't forget to install them before soldering!

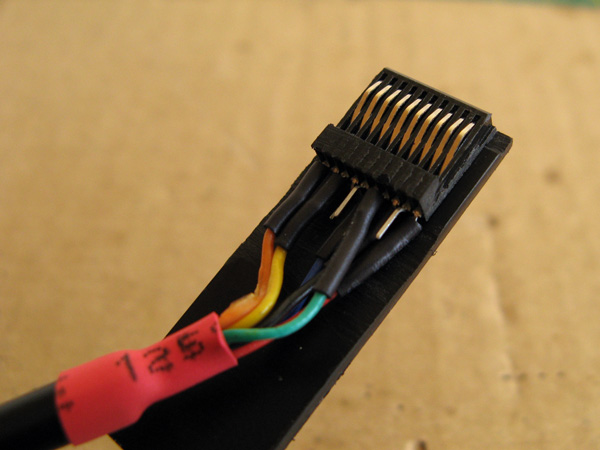

Putting a scrap piece of circuit board material between the two rows of pins also makes soldering much easier. In my case I only soldered the six pins required for this MIDI interface cable:

- 1: +5V

- 3: GND

- 4: RX

- 5: TX

- 8: GND

- 10: +3.3V

Pins are numbered from left to right when looking at the serial port at the rear of the console (if in doubt, you can check the voltages of the end pins against the console's metal chassis ground).
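If you like to keep notes alongside your multimeter, the pinout above can be written down as a small lookup table. The sketch below is only an illustration (the helper names are hypothetical): it works out which pairs of pins share a signal and should therefore read as connected in a continuity test, and flags any other shorts you might measure.

```python
# Pins used by this cable, numbered left to right when looking at the
# serial port at the rear of the console.
PINOUT = {1: "+5V", 3: "GND", 4: "RX", 5: "TX", 8: "GND", 10: "+3.3V"}


def expected_shorts(pinout):
    """Return the pin pairs that carry the same signal and so should be connected."""
    pins = sorted(pinout)
    return {
        (a, b)
        for index, a in enumerate(pins)
        for b in pins[index + 1:]
        if pinout[a] == pinout[b]
    }


def unexpected_shorts(measured_pairs, pinout):
    """Return measured shorted pairs that the pinout does not account for."""
    normalised = {tuple(sorted(pair)) for pair in measured_pairs}
    return normalised - expected_shorts(pinout)


print(expected_shorts(PINOUT))
# Hypothetical measurement: the two grounds plus a solder bridge between RX and TX.
print(unexpected_shorts([(8, 3), (4, 5)], PINOUT))
```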

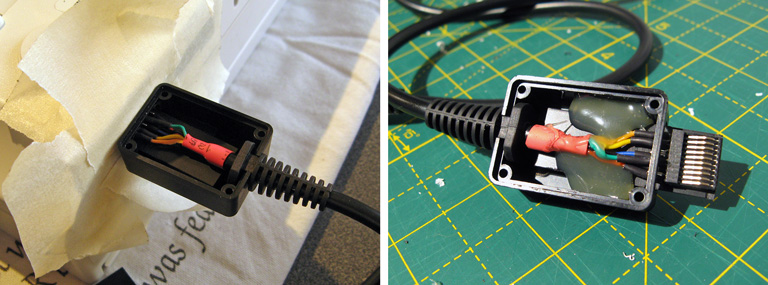

At this point you may wish to double check that your solder connections are made correctly and that nothing is shorted out – try with the cable plugged into the Dreamcast as well, and check that adjacent pins are not shorted together (the only two that should be shorted are pins 3 and 8, the two GNDs). If you're happy with that you can glue the connector onto the backing:

You may notice that this is actually a different connector in the photo to the previous one, and that's because I accidentally got glue into the connector's springy contacts and jammed them so had to start again – definitely not a fun mistake to make, so be careful!

Fortunately, the second one went more successfully. The glued connector snaps in and out of the console with a nice reassuring click thanks to the two holes drilled into the top surface. Before switching on the Dreamcast I tried wiggling the cable around to ensure that even when treated roughly it wouldn't short out adjacent pins. When I was happy this was the case I switched on the Dreamcast and ensured that I was getting a consistent +5V from pin 1 and +3.3V from pin 10 – as these pins are at the far ends of the connector these are the ones that are more likely to have problems with crooked connectors. In my case I found there was an intermittent fault with pin 1's +5V. This was because I hadn't glued the connector on particularly straight and so pin 1's connector was slightly back from the edge of the backing piece. I very carefully filed the backing piece's edge so that it was flush with the slightly wonky PCI Express connector, after which pin 1 made reliable contact.

When you're completely happy with the connector, you can make it more robust by putting it inside an enclosure. I have some very small project boxes that are perfect for this sort of thing. The result is a bit bulky when compared to the official Sega product but it does its job well here and doesn't bump into the power connector or AV port connector.

I cut a slot in one end of the box for the connector to stick out of and a notch in the other for the cable strain relief to clip into. I surrounded the console's serial port with a few layers of masking tape to ensure that when the connector was inserted there was still a small gap between the case and the plug to make sure that it could always be fully inserted and not held back by interference from the case (it also protected the console shell from accidental strings of hot glue!) I then plugged the connector into the Dreamcast, made sure that everything was neatly lined up, and secured the parts in place with copious amounts of hot glue. Once this had set I added more hot glue to the rear of the connector to make sure it was all held as securely as possible, and then screwed the enclosure shut.

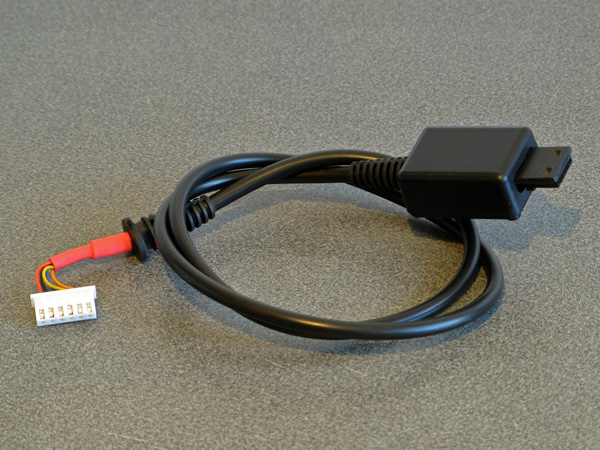

With all the effort spent on the Dreamcast end of the cable, don't forget about the MIDI interface box end! I'm fond of JST-XH connectors so crimped one onto the end of the cable, ready to plug into the circuit board. The finished cable is seen above!

Building a prototype MIDI interface cable

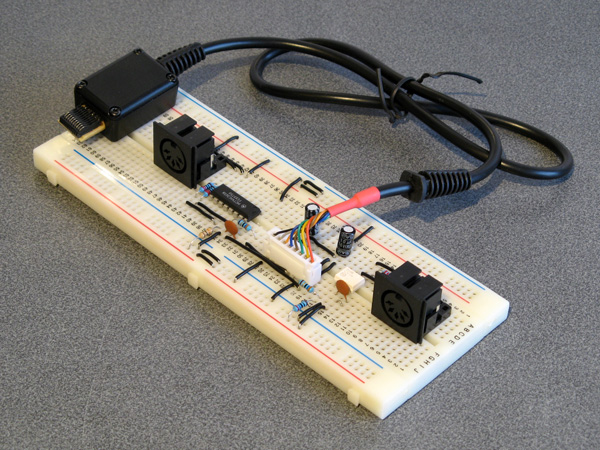

With a Dreamcast serial port cable to hand I was able to experiment and see what happened when using the O・to・i・re (お・と・い・れ) sequencer. Here's the circuit I ended up building on a breadboard:

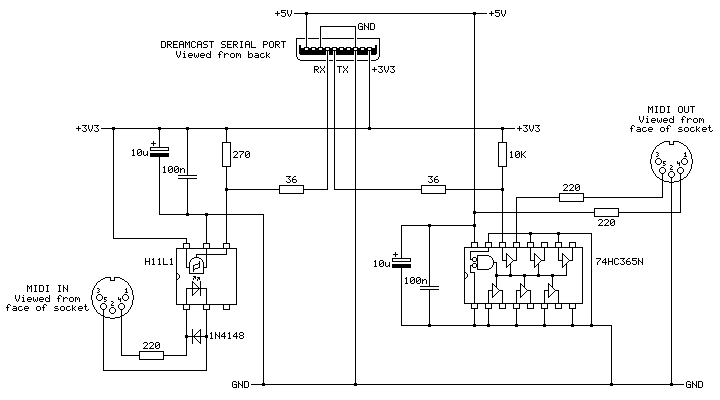

On the very left is the first serial cable connector I tried constructing. It looks more yellow than the final version as I used a piece of pad board for the backing piece instead of the 2mm ABS plastic – I thought I was going to solder the PCI Express connector piece to a circuit board rather than directly to the cable's wires, but this turned out to be a mistake. One advantage is that it does have eight wires soldered to it – I thought I'd see what RTS and CTS were doing, but I ended up not using them. The MIDI port to the left is for MIDI OUT and has a large black chip to act as a buffer; the port to the right is for MIDI IN and has a small white chip as an optoisolator. Here's the corresponding circuit diagram:

The position of the ports is flipped in this diagram when compared to the photo of the breadboard, but it otherwise matches up!

On the left is the MIDI IN port. This uses an H11L1 optoisolator as they are still reasonably easy to get hold of today, are fast enough for use with MIDI and can be run directly from +3.3V. Its output on pin 4 is open collector so it needs the 270Ω pull-up resistor to the +3.3V rail. A +5V-demanding optoisolator could also have been used if it had an open collector output and the pull-up resistor on its output was still tied to +3.3V (we don't want to run +5V into the Dreamcast's +3.3V logic!) but this makes the wiring a little more complicated so sticking to a +3.3V-compatible part makes life easier.

The 36Ω resistor between the output of the MIDI IN circuit and the serial port's RX pin is there because it is in the official cable. The official cable also places ferrite beads on every I/O pin (and has a ferrite bead clipped onto the cable itself) which I have not replicated in my own cable, but they can't hurt and I suppose it could protect the console from certain direct shorts!

The MIDI OUT port uses a 74HC365N to convert from the serial port's +3.3V logic to +5V to drive the MIDI output. MIDI signals can be run from +3.3V (it's the flow of current that's more important than the selected voltage) but +5V seems more typical so I thought I'd stick with that, and as we need to buffer the signal anyway (I'm not sure how much current the Dreamcast's serial ports are designed to sink or source) using the +5V supply we have available to us made sense. The voltage threshold for a "high" input signal is probably a bit too high with the 74HC365N – +3.3V is pretty close to the recommended values in the datasheet, so the 74HCT365N version of the chip would give you more margin for error and would be a drop-in replacement. In my testing the 74HC365N does work well, though, and it's what I had available.
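To put some rough numbers on that margin, here is a small Python sketch. It leans on two assumptions that you should check against the datasheet for your specific part: that a 74HC input needs roughly 70% of its supply voltage to be guaranteed to read as "high", and that a 74HCT input only needs about 2.0V. All values are in millivolts to keep the arithmetic exact.

```python
VCC_MV = 5000          # the buffer is powered from the +5V rail
SIGNAL_HIGH_MV = 3300  # a "high" from the Dreamcast's +3.3V logic

# Assumption: TTL-compatible input threshold for 74HCT parts.
HCT_VIH_MIN_MV = 2000


def hc_vih_min_mv(vcc_mv):
    """Assumed rule of thumb: a 74HC 'high' input must reach about 70% of VCC."""
    return vcc_mv * 7 // 10


def margin_mv(signal_mv, threshold_mv):
    """Positive means the signal clears the guaranteed threshold."""
    return signal_mv - threshold_mv


hc_margin = margin_mv(SIGNAL_HIGH_MV, hc_vih_min_mv(VCC_MV))
hct_margin = margin_mv(SIGNAL_HIGH_MV, HCT_VIH_MIN_MV)
print("74HC margin: ", hc_margin, "mV")
print("74HCT margin:", hct_margin, "mV")
```

Under these assumptions the 74HC part falls slightly short of its guaranteed threshold (even though it works in practice), whereas the 74HCT part clears it comfortably.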

The cable used in this prototype has eight connections rather than the six in the final. This is because I did experiment with the RTS (pin 6) and CTS (pin 7) signals from the console, however as far as I can see these are just pulled low and high respectively and do not change from the moment the console boots, even when sending and receiving MIDI data within the O・to・i・re (お・と・い・れ) software. If they were planned to be used for some purpose then I'm not sure what, and with only one piece of software released to test with I'm not sure I'll find out.

In any case, with this circuit data from the MIDI IN port is translated to +3.3V logic levels suitable for the Dreamcast's serial port input (RX) and translated back from the serial port output (TX) to a current loop suitable to drive a device connected to the MIDI OUT port. In practice it seems this MIDI OUT port acts more like a MIDI THRU with the O・to・i・re (お・と・い・れ) sequencer – any data sent to the MIDI IN port comes straight back out of the MIDI OUT port, however if you record some notes in the sequencer and play them back afterwards they aren't played back out of MIDI OUT. At first I wondered if I'd made a wiring error (accidentally connecting MIDI IN straight to MIDI OUT) but the MIDI OUT is indeed under control of the software as it will stop relaying messages on certain screens.

Putting it all together in a nice box

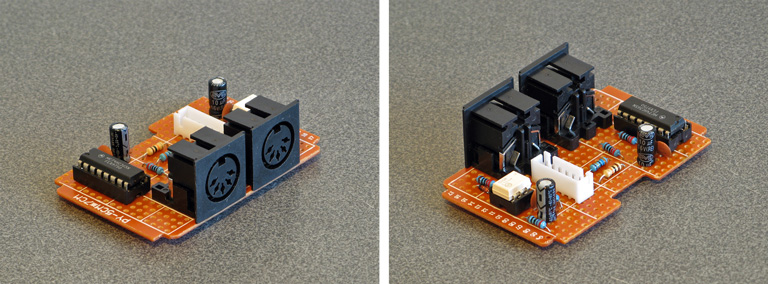

I ended up using my usual pad board construction technique to build the final device:

I followed the circuit diagram I'd drawn earlier (rather than copy the breadboard circuit) to ensure the diagram was correct. This was all assembled to fit in a ready-made ABS enclosure, into which I cut some holes. Historically I've had a hard time cutting neat round holes in plastic enclosures – I have a few hole saws and these are great for cutting through wood or acrylic but when trying to cut ABS they tend to bind and either rip the box out of the vice or just shatter it. I normally resort to drilling lots of very small holes around the perimeter of the circle and then try to file it to size, which is OK for buttons or sockets with overhanging parts to hide the inaccuracies but wouldn't do here! For this project I bought a very cheap step drill set on eBay (three bits for a fiver) with zero expectations but it did an excellent job, it kept the centre hole I'd started from and didn't need very much cleaning up. I wish I'd bought one sooner!

The other enclosure challenge to deal with was labelling the two ports. I normally get away without labelling my projects because the function of each port can be guessed quite easily (e.g. inputs and outputs normally only plug in one way, or player 1 is on the left and player 2 is on the right) but in this case there's not much convention for where MIDI OUT and MIDI IN go (though the original Dreamcast MIDI interface cable puts MIDI OUT on the left, as does my M-Audio Midisport). For this I thought I'd try using some dry transfer lettering designed for model-making, and it seems to do a great job!

I'm pretty happy with the final outcome – it's a bit of a pain making the serial cable for connection to the Dreamcast, but the end result works well and it saves spending an absolute fortune on the original Sega accessory.

Now I can get on with making music with my Dreamcast!

Connecting an old receiver/amplifier to an HDMI television, apropos achieving surround sound

Sunday, 16th August 2020

Now that the charity shops have reopened in the UK I enjoy hunting for good bargains and recently picked up a DAV-S400, a home cinema system originally sold back in 2002. I was not particularly interested in its DVD playback capabilities, especially as it only outputs composite video, but the integrated 5.1 surround sound amplifier and optical input made me think that I could use it to replace my current stereo amplifier for a better home cinema experience. The inclusion of SACD support was an added bonus as I'd bought myself a copy of The Dark Side of the Moon in that format back in 2004 and only been able to listen to it once, so if I couldn't get the home cinema side working at least the SACD part was self-contained and I'd be able to enjoy that.

This ended up being quite the learning project for me and I had to solve quite a few problems along the way. I'm still not entirely sure if I've found the best solutions in places but I thought I'd write this post in case it helps anyone else hooking up an old receiver/amplifier to a modern HDMI system!

The proposed setup

I watch most media using my Windows PC as a source. The PC is in one room and the TV is in another and long HDMI and USB cables run between both under the floorboards. An HDMI splitter is also used near the PC so it can drive its main monitor and the TV in the other room simultaneously.

I was previously running analogue stereo audio from the headphone socket of the TV to a stereo amplifier with a line level "super woofer" output that ran to a separate amplified subwoofer for 2.1 sound.

I knew that HDMI could carry digital audio, and both my monitor and TV have digital outputs. As the DAV-S400 has a digital input, I could just connect that to the digital output from the TV (instead of the headphone output) and in my naivety assumed that's all it would take to have glorious multichannel digital audio. Of course, things are rarely that easy…

My TV only has coaxial digital audio out via an RCA connector and the receiver has an optical input via a TOSLINK socket. Fortunately, my PC monitor has an optical output so I connected that to the receiver for the sake of testing. When I played media on my PC sound came out of the speakers, however only in stereo, even from 5.1 sources. What was going on?

Capabilities of S/PDIF

The digital output from the monitor/TV and the digital input of the amplifier operate with S/PDIF digital audio. This can carry two channels of uncompressed PCM audio for regular stereo. To carry 5.1 multichannel audio the data needs to be compressed using Dolby Digital or DTS. The DAV-S400 supports both, but you need to find a way to transfer that compressed signal to the receiver first.
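A quick back-of-the-envelope calculation shows why compression is needed. The Python sketch below assumes 48kHz, 16-bit samples as the baseline for stereo PCM (S/PDIF links can often carry more than this, so treat it as a conservative figure) and compares it against the maximum Dolby Digital and DTS bitrates I mention later in this post.

```python
def pcm_bitrate_kbps(channels, sample_rate_hz=48_000, bits_per_sample=16):
    """Raw bitrate of uncompressed PCM audio in kilobits per second."""
    return channels * sample_rate_hz * bits_per_sample // 1000


STEREO_PCM_KBPS = pcm_bitrate_kbps(2)    # what the link is assumed to carry
SURROUND_PCM_KBPS = pcm_bitrate_kbps(6)  # 5.1 as uncompressed PCM

# Maximum compressed bitrates as quoted elsewhere in this post.
COMPRESSED_MAX_KBPS = {"Dolby Digital (AC-3)": 640, "DTS": 1536}

fits = {name: rate <= STEREO_PCM_KBPS for name, rate in COMPRESSED_MAX_KBPS.items()}

print("Stereo PCM:", STEREO_PCM_KBPS, "kbps")
print("5.1 PCM:   ", SURROUND_PCM_KBPS, "kbps")
print(fits)
```

Uncompressed 5.1 needs three times the bandwidth of stereo, whereas both compressed formats fit within the space normally taken up by the stereo PCM data.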

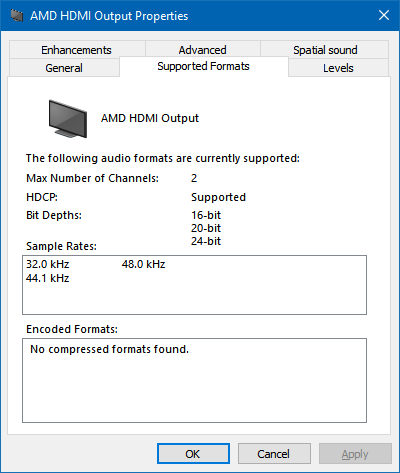

Foiled by the EDID

Here is the biggest fly in the ointment! Extended Display Identification Data (EDID) is metadata that an HDMI display provides to describe what sort of video resolutions and sound formats it supports. All three of my HDMI devices (the HDMI splitter and both displays) report that they support two-channel PCM audio but nothing else.

As a result, devices will only try to send stereo PCM audio to them and not Dolby Digital or DTS. If you have a sound card with an S/PDIF output you will notice that you can go into its "Supported Formats" tab in Windows and tick which features the receiver supports – this is because, unlike an HDMI device with its EDID, the receiver can't automatically tell the sound device which formats it supports. Unfortunately, Windows trusts the EDID and doesn't let you go in and enable support for features the display doesn't claim to provide. An easy solution here would be to just use my sound card's S/PDIF output but I didn't fancy taking the floorboards up again to run another cable…

A custom monitor driver to override the EDID

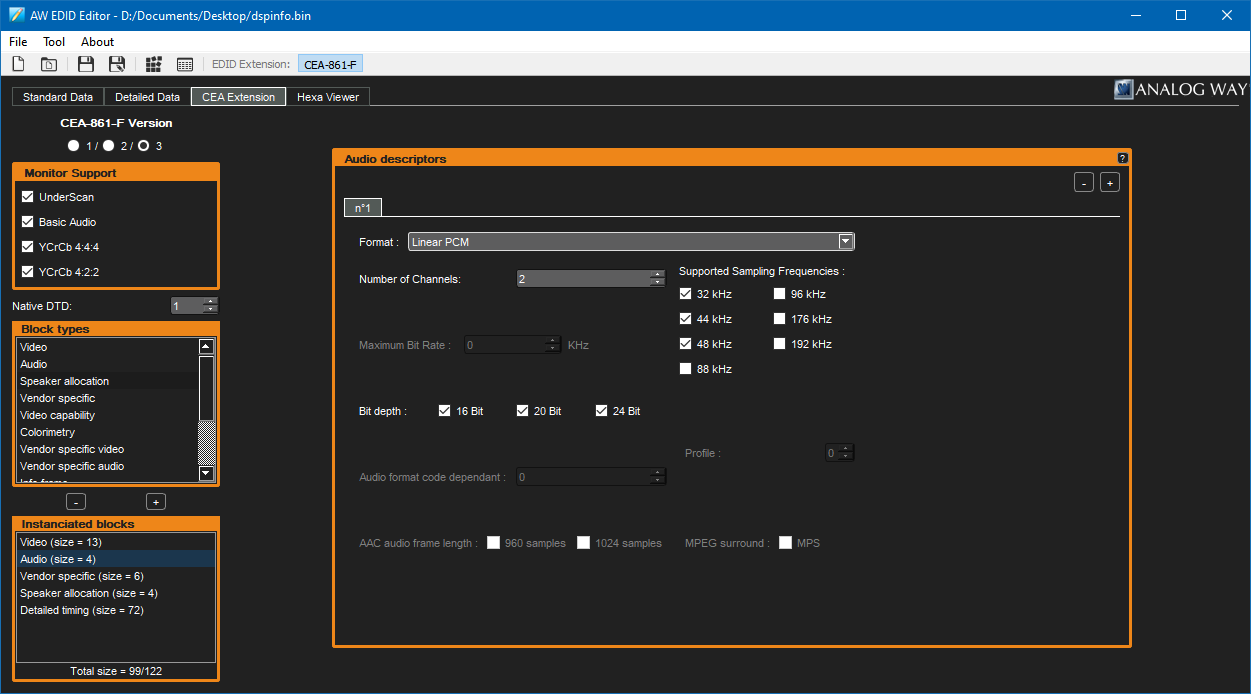

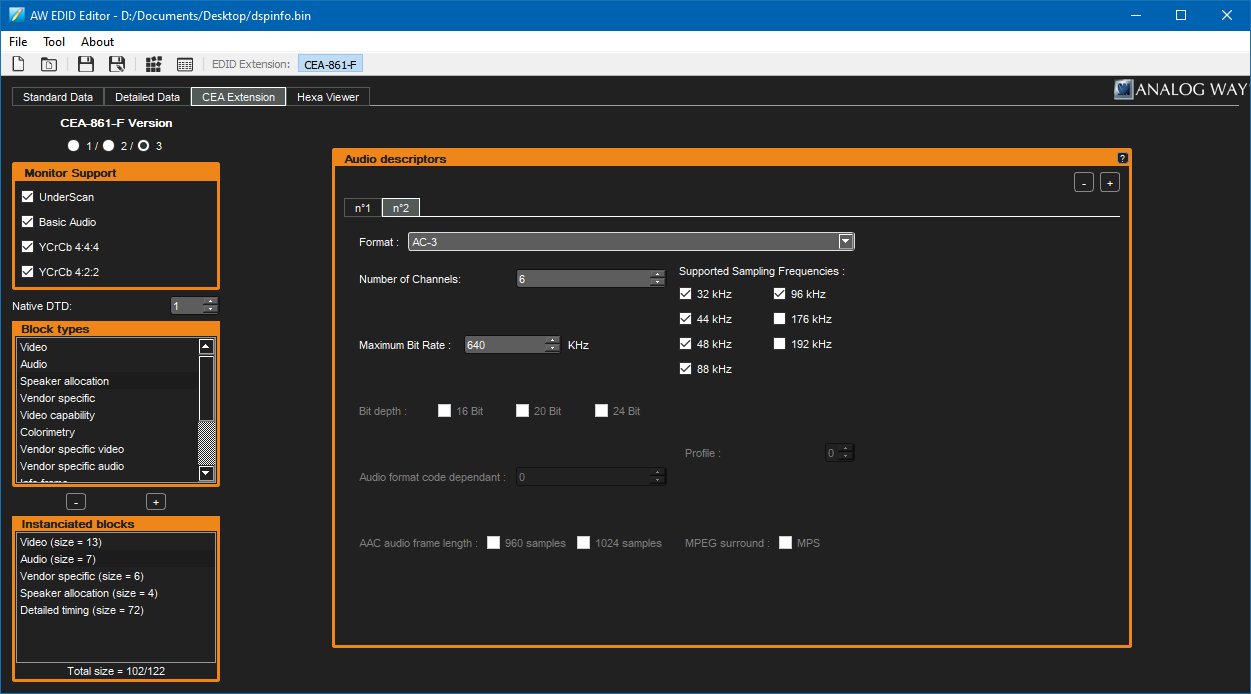

Fortunately, you can create a custom monitor driver with a "fixed" EDID that advertises support for Dolby Digital (AC-3) and DTS using a couple of easy tools.

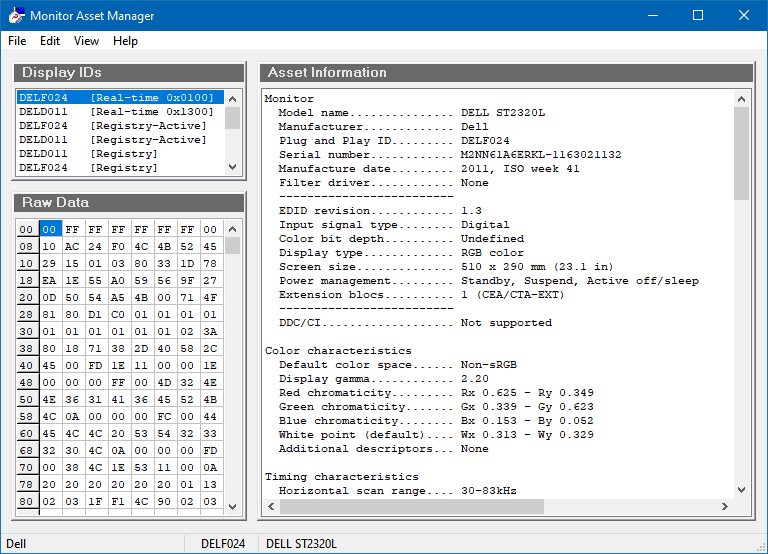

The first piece of software you'll need to use is EnTech's Monitor Asset Manager – this will allow you to extract the current EDID to a file and will later let us turn the updated EDID back into an installable driver.

Run the software and find the monitor that is connected to the HDMI port that you are going to be outputting sound to from the list in the top left. In my case that's my HDMI splitter (which for some reason identifies itself as a DELL ST2320L). Save the EDID to a .bin file via the File→Save as menu item.
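If you'd like to sanity-check the exported file before editing it, the structure is simple enough to inspect by hand. The Python sketch below assumes the standard EDID layout: 128-byte blocks, a fixed eight-byte header at the start of the first block, an extension count at offset 126 and a final checksum byte that makes each block sum to zero modulo 256. The function names are my own, and the sample data is a synthetic stand-in rather than a real display's EDID.

```python
EDID_HEADER = bytes([0x00, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0xFF, 0x00])
BLOCK_SIZE = 128


def split_blocks(data):
    """Split raw EDID data into 128-byte blocks."""
    if not data or len(data) % BLOCK_SIZE != 0:
        raise ValueError("EDID data should be a non-empty multiple of 128 bytes")
    return [data[i:i + BLOCK_SIZE] for i in range(0, len(data), BLOCK_SIZE)]


def checksum_ok(block):
    """Every byte in a block, including the checksum, should sum to 0 mod 256."""
    return sum(block) % 256 == 0


def with_fixed_checksum(block):
    """Return the block with its last byte recalculated."""
    body = bytes(block[:BLOCK_SIZE - 1])
    return body + bytes([(-sum(body)) % 256])


def describe(data):
    blocks = split_blocks(data)
    return {
        "header_ok": blocks[0][:8] == EDID_HEADER,
        "extensions_declared": blocks[0][126],
        "blocks_present": len(blocks),
        "checksums_ok": [checksum_ok(block) for block in blocks],
    }


# Synthetic example: an otherwise empty base block plus one extension block.
# With a real file you would read the bytes from the .bin you saved instead.
base = bytearray(BLOCK_SIZE)
base[:8] = EDID_HEADER
base[126] = 1            # one extension block follows
extension = bytearray(BLOCK_SIZE)
extension[0] = 0x02      # tag for a CEA extension block
sample = with_fixed_checksum(base) + with_fixed_checksum(extension)

print(describe(sample))
```

The editing tools recalculate the checksums for you, so this is only useful as a quick check that the export worked and that the file contains the extension block where the audio capabilities live.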

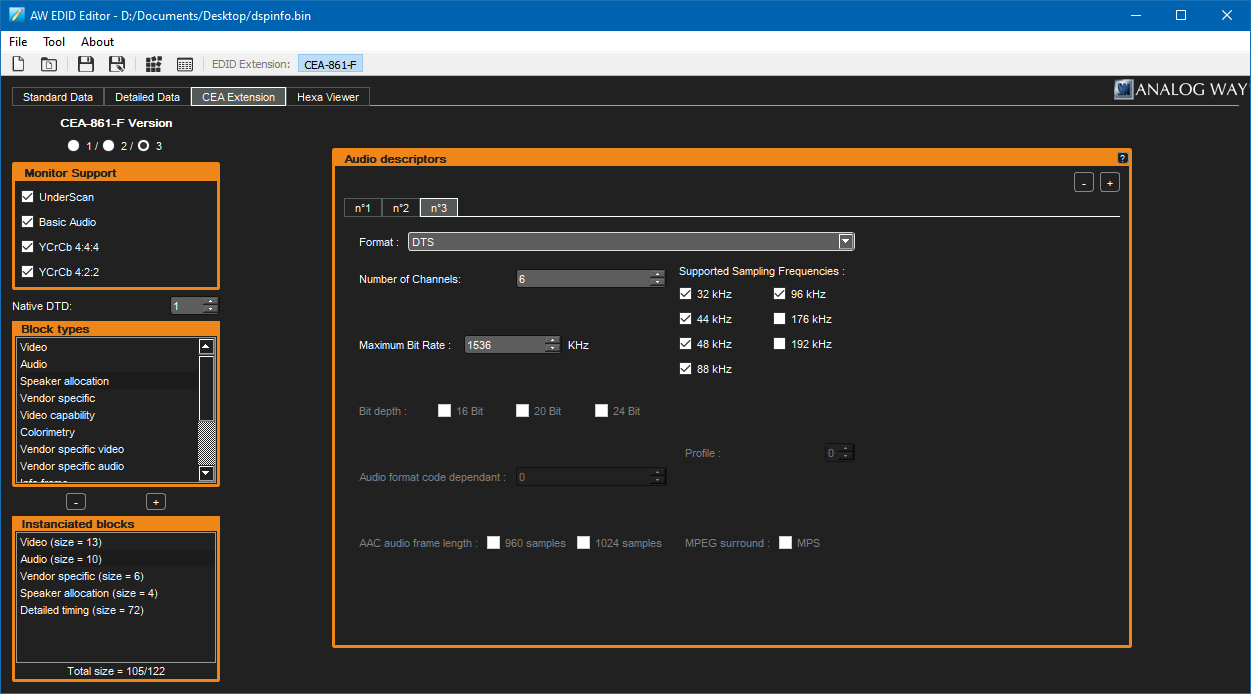

Now that you have your EDID data exported to a file, you'll need to edit it. A nice idiot-proof way to do so is with Analog Way's AW EDID Editor. Run the software and open the .bin file for the EDID file you just saved. Switch to the CEA Extension tab and you will probably see that it only reports support for stereo uncompressed PCM when you look at the "Audio" block.

At this point, you can add audio descriptors according to the capabilities of your receiver. I'm not entirely sure what the best values to enter here are, especially when it comes to bitrate – as far as I'm aware DVDs usually use up to 448kbps for Dolby Digital AC-3 and up to 768kbps for DTS but there's a more concrete limit of 640kbps for AC-3 and 1536kbps for DTS so these are the values I've used when setting up my EDID.
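For the curious, this is roughly what the editor is writing into the file. The Python sketch below follows my understanding of the three-byte "Short Audio Descriptor" layout used in the CEA extension block: the first byte holds the format code and channel count, the second is a bitmask of sample rates and, for compressed formats, the third is the maximum bitrate divided by 8kbps. The function names are hypothetical, and the choice of 48kHz as the only sample rate is just an example rather than a recommendation.

```python
FORMAT_AC3 = 2       # Dolby Digital
FORMAT_DTS = 7
AUDIO_BLOCK_TAG = 1  # data block tag for audio descriptors

RATE_BITS = {
    32_000: 0x01, 44_100: 0x02, 48_000: 0x04, 88_200: 0x08,
    96_000: 0x10, 176_400: 0x20, 192_000: 0x40,
}


def compressed_sad(format_code, channels, sample_rates_hz, max_kbps):
    """Build a three-byte descriptor for a compressed format (AC-3 or DTS)."""
    if format_code not in (FORMAT_AC3, FORMAT_DTS):
        raise ValueError("this sketch only handles AC-3 and DTS")
    if not 1 <= channels <= 8:
        raise ValueError("channel count must be between 1 and 8")
    if max_kbps % 8 != 0 or not 0 < max_kbps <= 2040:
        raise ValueError("bitrate must be a multiple of 8kbps that fits in one byte")
    byte1 = (format_code << 3) | (channels - 1)
    byte2 = 0
    for rate in sample_rates_hz:
        byte2 |= RATE_BITS[rate]
    byte3 = max_kbps // 8
    return bytes([byte1, byte2, byte3])


def audio_data_block(descriptors):
    """Prefix the descriptors with the data block header (tag and length)."""
    payload = b"".join(descriptors)
    if len(payload) > 31:
        raise ValueError("a data block can hold at most 31 bytes")
    return bytes([(AUDIO_BLOCK_TAG << 5) | len(payload)]) + payload


ac3 = compressed_sad(FORMAT_AC3, 6, [48_000], 640)    # 5.1 counts as six channels
dts = compressed_sad(FORMAT_DTS, 6, [48_000], 1536)
print(ac3.hex(" "), "|", dts.hex(" "))
print(audio_data_block([ac3, dts]).hex(" "))
```

Because the bitrate is stored in a single byte as a multiple of 8kbps, both 640kbps and 1536kbps fit without any trouble.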

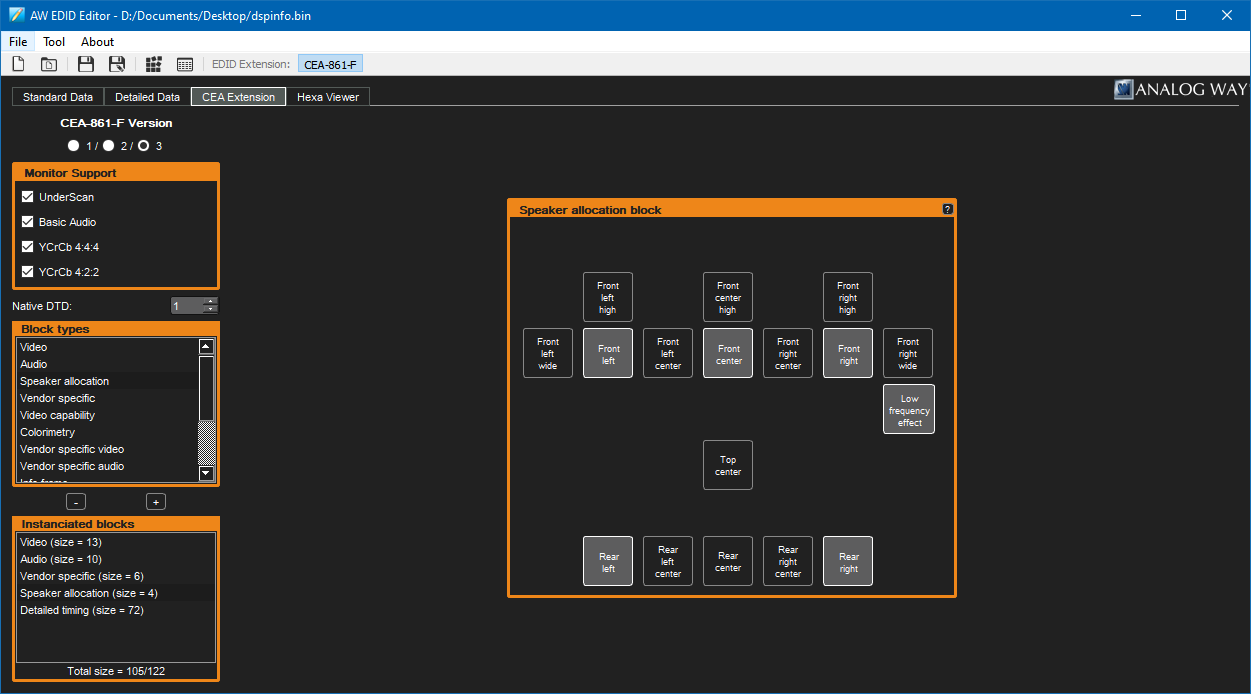

You can also use the "Speaker allocation" block to specify which speakers your device has attached – in my case I've set this up to match my 5.1 setup. Save the EDID at this point, and you're ready to create the custom monitor driver!
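The speaker allocation block is even simpler than the audio descriptors. As I understand the CEA extension format, it is a three-byte payload where each bit of the first byte represents a speaker (or pair of speakers) and the remaining two bytes are left as zero here. The names in this Python sketch are my own labels, not anything official.

```python
SPEAKER_BLOCK_TAG = 4  # data block tag for speaker allocation

SPEAKER_BITS = {
    "front_left_right": 0x01,
    "lfe": 0x02,
    "front_centre": 0x04,
    "rear_left_right": 0x08,
    "rear_centre": 0x10,
    "front_left_right_of_centre": 0x20,
    "rear_left_right_of_centre": 0x40,
}

FIVE_POINT_ONE = ["front_left_right", "lfe", "front_centre", "rear_left_right"]


def speaker_allocation_block(speakers):
    """Build the data block header plus the three-byte speaker payload."""
    mask = 0
    for name in speakers:
        mask |= SPEAKER_BITS[name]
    payload = bytes([mask, 0x00, 0x00])
    return bytes([(SPEAKER_BLOCK_TAG << 5) | len(payload)]) + payload


print(speaker_allocation_block(FIVE_POINT_ONE).hex(" "))
```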

Go back to EnTech's Monitor Asset Manager and open the saved .bin file for your new EDID. Click File→Create INF and save the INF to create your new monitor driver. Easy!

What's less easy now is installing that driver on a modern 64-bit version of Windows, as the driver is unsigned and Windows will not let you install unsigned drivers normally. To save headaches at this point I'd recommend looking up how to enable the installation of unsigned drivers, but at the time of writing this is how it's done in Windows 10 (this will involve restarting your computer):

- Click Start→Power

- Hold Shift, then click "Restart".

- At the "Choose an option" screen, select "Troubleshoot".

- At the "Troubleshoot" screen, select "Advanced options".

- At the "Advanced options" screen, select "Start-up Settings".

- At the "Start-up Settings" screen, click "Restart".

- Once the computer has restarted, select the "Disable driver signature enforcement" option (F7).

When the computer has restarted, you should be able to install your unsigned monitor driver. To do this, go into Device Manager and find the entry for your HDMI device in the "Monitors" section.

- Right-click your device and select "Update driver".

- Select "Browse my computer for drivers".

- Click "Let me pick from a list of available drivers on my computer".

- Click the "Have Disk..." button.

- Browse for the monitor.inf file you created previously, then click OK.

- Select the EDID Override option that appears in the list and click "Next".

- Click "Install this driver software anyway" when prompted by the unsigned driver warning dialog box.

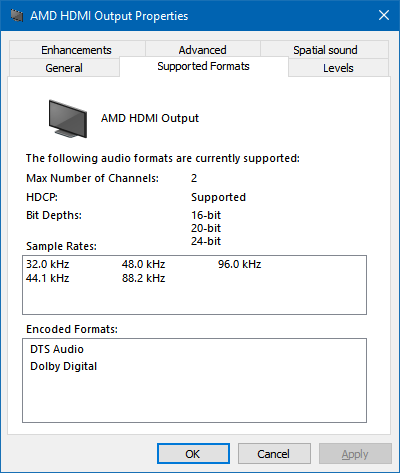

At this point, you will likely need to restart again but all being well after doing so you should now see that Dolby Digital and DTS Audio are now listed as supported encoded formats in the Sound control panel.

Passing through Dolby Digital and DTS without conversion to PCM

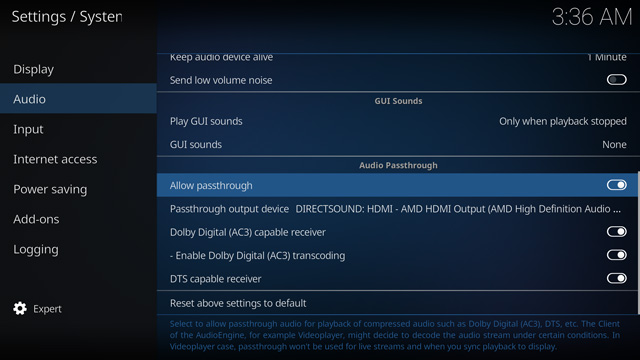

Getting Windows to believe that your receiver supports Dolby Digital and DTS over HDMI is the trickiest problem, but you still need to ensure that the compressed audio gets through every step of the way from the media to the receiver.

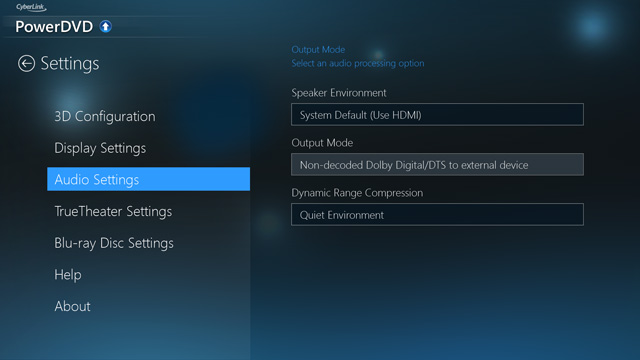

You will need to ensure that your media playback software is configured to pass through the audio directly rather than decoding it to PCM. If your software has an audio settings menu option, ensure that that is set to allow non-decoded audio to pass through.

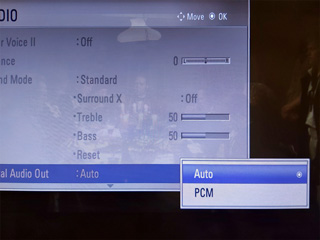

You will also need to ensure that your display is configured to pass through the audio without decoding it to PCM – check its sound menus for the relevant options, and change any settings to "Auto" or the appropriate equivalent instead of "PCM".

In my case I was testing with the receiver connected to my PC monitor via its optical output. Dolby Digital worked fine, however DTS was not working at all. I found that if I enabled the monitor's internal speaker it would output sound when fed Dolby Digital but not when fed DTS, however my TV would output sound when fed either. It seems that my PC monitor genuinely lacks support for DTS and won't even pass it out of the digital output port – fortunately when I acquired a coax to optical adaptor for my TV I found that it passed through DTS just fine!

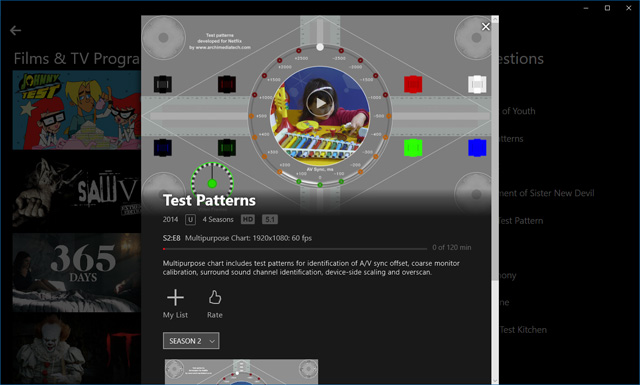

The Windows 10 application version of Netflix also supports 5.1 audio over Dolby Digital and didn't need any further configuration. If you search the library for "Test" there's a useful selection of test patterns that can be used to ensure your speakers are set up correctly.

Other notes

I have a separate Blu-ray player attached to my TV as my PC's video card displays corrupt video when decoding Blu-ray 3D unless I install very old versions of its drivers and I thought a separate Blu-ray player was an easier option than dual-booting into Windows Vista. Unfortunately, my Blu-ray player believes my TV's EDID and refuses to pass through Dolby Digital or DTS over HDMI even when set to output "bitstream" audio. Fortunately, it has a separate coaxial digital audio out that outputs Dolby Digital or DTS even when it's outputting PCM over HDMI, but it does mean I need to add a switch box for the single digital input into the receiver, which is slightly less elegant than running everything through the TV. There are standalone EDID spoofers that plug in between your device and the monitor that may be able to resolve this issue in the same way the custom PC driver did, but these are not inexpensive so I have not been able to test this myself.

The DAV-S400 is extremely fussy about reading hybrid SACDs, and will often detect them as regular CDs. Ejecting and closing the tray persuades the machine to re-scan the disc, so if you have problems press the "DVD Display" button to ensure the OSD is visible and double-tap the eject button to quickly eject and close the tray until the indicator in the top right of the screen reads "SACD" instead of "CD".

If it does detect it as an SACD it will then play it absolutely flawlessly, the issue only seems to be with the initial format identification. I was able to improve the success rate with Dark Side of the Moon by carefully cleaning the drive lens, however I couldn't get Brothers in Arms to be detected at all until I went into the service menu and recalibrated the drive. You can do this by switching the machine on in DVD mode, holding the Stop+Display buttons on the unit together and rotating the volume wheel clockwise. From here go into "Drive Auto Adjustment". I initially tried calibrating with an SACD (labelled "LCD" in the menu) but this didn't help, so I tried the "All" menu option which prompts you to insert a single-layer DVD (DVD-SL), CD, then dual-layer DVD (DVD-DL). After this point it doesn't automatically prompt for an SACD so I left this in the default settings rather than calibrating and to my surprise found that Brothers in Arms now works (and, once detected, plays flawlessly). This inability to handle hybrid SACDs correctly seems to be a common flaw with Sony players of this vintage, unfortunately.

On a more practical note, I needed to find a way to attach my speakers to the amplifier, which had connectors that exposed a pair of pins around 6mm apart. Following a hunch I bought some Tamiya power connectors and found these to be a good fit once you cut off the retaining clip!

I did however find that a couple of the audio channels were intermittent and when I took the case lid off was surprised to see that one of the electrolytic capacitors on the amplifier board was barely held in by any solder at all and when I touched it it let out a big fat noisy spark! I resoldered it in along with all of the audio transformers, connectors and relays on the amplifier PCB which fortunately restored all audio channels back to their former glory.

All in all this has been quite a learning experience, though having now watched Terminator 2 and Mad Max: Fury Road with 5.1 surround sound I can definitely say it was worth it.