SmartBox experimentation with DOS, RISC OS and C#/WinForms

Monday, 6th November 2023

My latest eBay purchase was influenced by a desire for some practical test/prototype equipment, a bit of nostalgia and a desire to learn something new.

A lot of my projects involve some sort of microcontroller running some software that will take inputs, perform decisions on them, and produce outputs. Getting to that stage tends to involve quite a lot of "boilerplate" hardware and software setup, and I'd quite like something that I can just plug in and get cracking with and write some quick test code instead of having to assemble a circuit on a breadboard or faff around with a clumsy IDE.

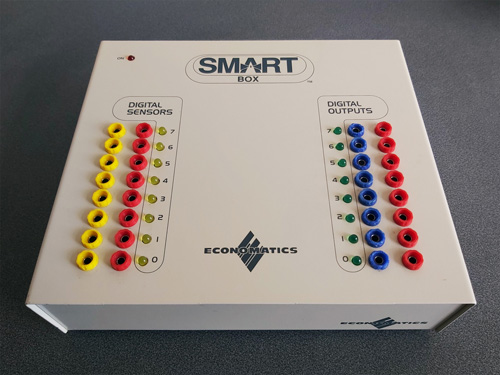

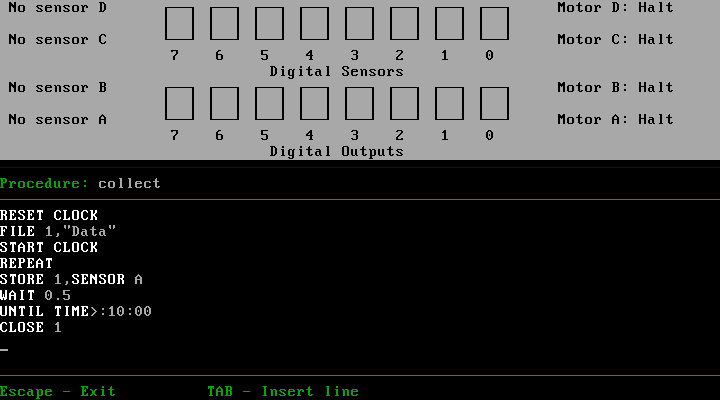

When I was at school in the 1990s one of the devices that got me into microcontrollers in the first place was a computer control system based around the Economatics SmartBox. This plugs into a computer via a serial connection, has eight simple digital inputs, eight simple digital outputs, four analogue inputs and four motor drivers. Programs could be written in a BASIC-like language or in flowchart form, and once you'd run and tested them on the SmartBox you could program them to a PIC microcontroller to run without the host computer.

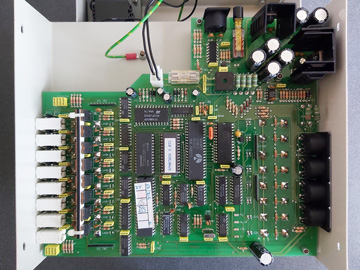

All along I'd assumed that the SmartBox was a simple interface box that relied on the host computer to do all of the processing, but doing some digging I found a thread on StarDot that delved into the heart of the machine and saw that there's a 65C02 CPU inside along with 32KB of RAM and the OS runs from a socketed 8KB ROM. As a long-term Z80 fan I thought it was time I should see how the other side lived – in spite of my fondness for the BBC Micro I don't own one and have not programmed any 6502 assembly, so a SmartBox seemed like it would also provide an affordable 6502 computer for experimentation.

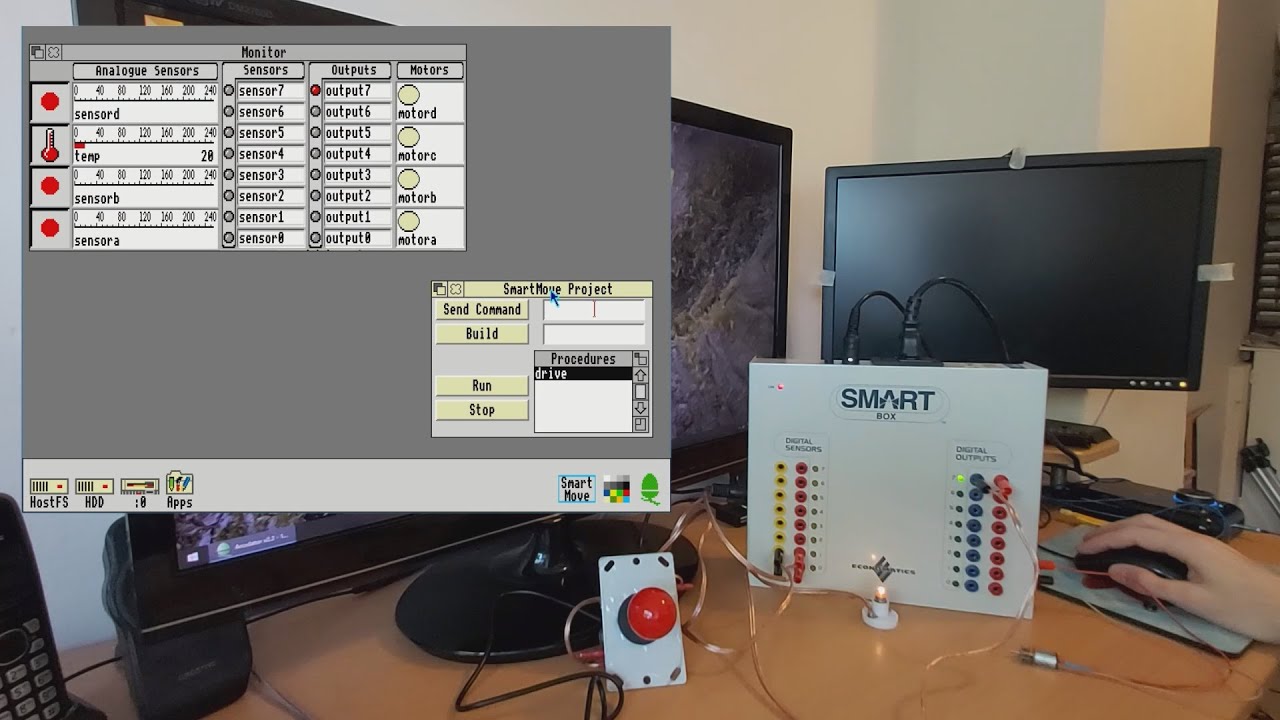

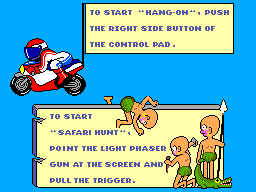

Of course, one challenge was going to be finding the supporting software for the venerable SmartBox. Fortunately in the StarDot thread people had shared archives of the DOS, BBC Master and RISC OS software. One of the many handy features of DOSBox-X is its ability to connect an emulated serial port to a physical one in the host system, and so after building a serial cable for my SmartBox (using a pinout found, once again, via the StarDot thread) I was able to hook it up to my PC and get it working with SmartMove, the BASIC-like programming environment for the SmartBox.

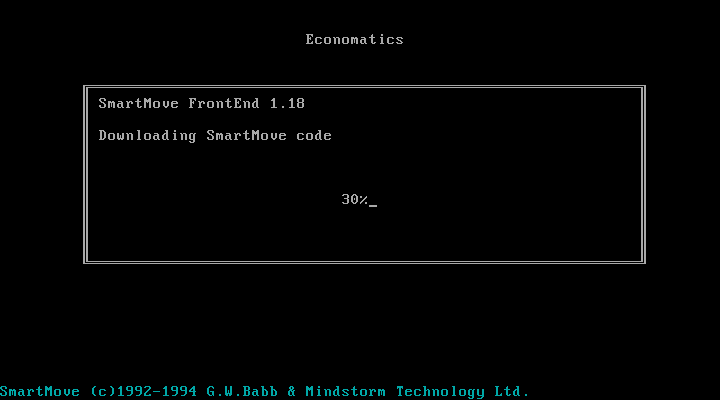

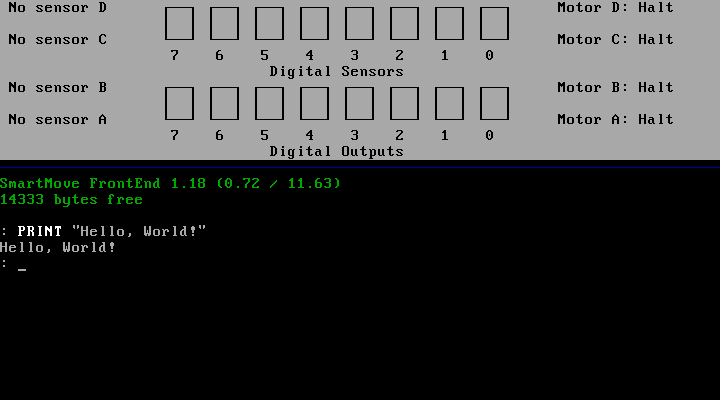

When the software is first run it needs to download SmartMove code into the SmartBox. This is because the programming environment and interpreter actually run on the SmartBox itself: the SmartMove software on the host PC simply loads that interpreter (found in an accompanying file of 65C02 machine code named AL.COD) onto the box and then provides a user interface to that environment as a sort of terminal. This means you can close the SmartMove software (and unplug the serial cable) and your program will continue running on the SmartBox.

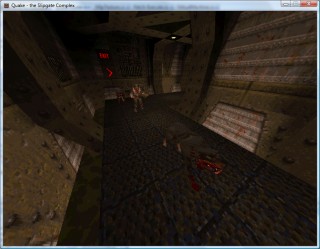

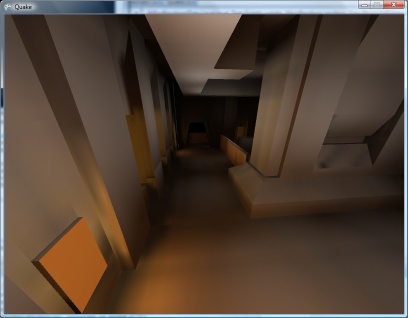

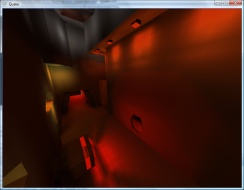

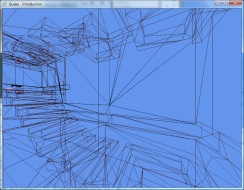

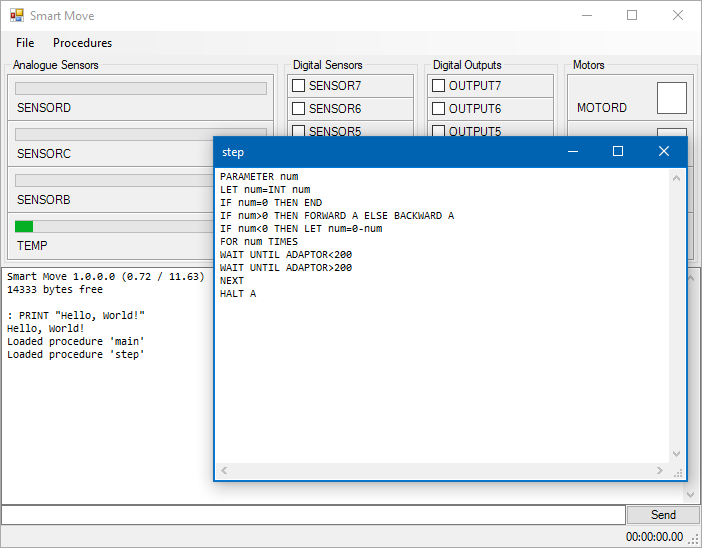

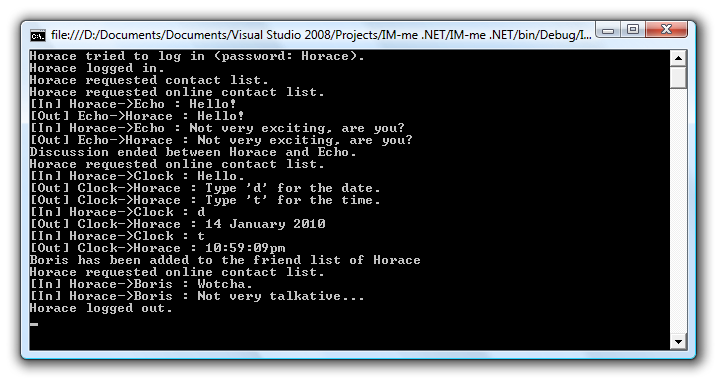

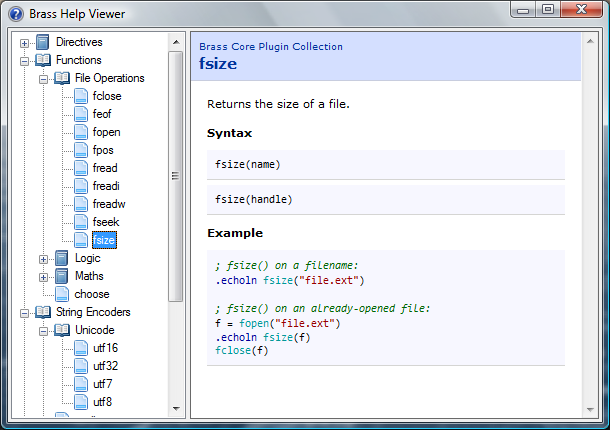

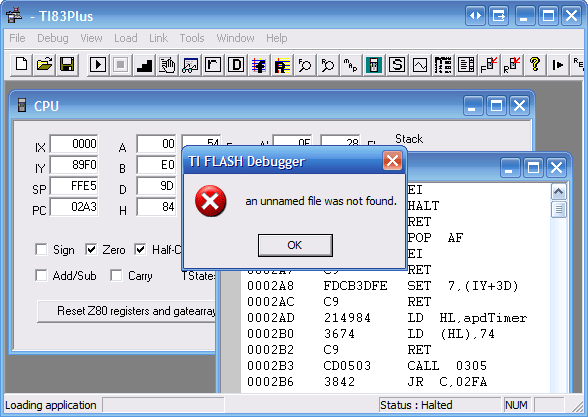

This ability to load and execute code directly on the SmartBox is one of the things that intrigued me as a way to get into 65C02 programming, but for the time being I was interested in digging deeper into how the existing SmartMove software was interfacing with the box, with the intention of writing a simple Z80 host interface that I could then adapt to the Cambridge Z88, my CP/M computer and maybe even the TI-83 Plus calculator series. Fortunately the serial protocol used by the SmartMove application has been documented, so I was able to prototype a crude version of the software in C# using WinForms. It needs some serious tidying up before I can release it but as a basic test it does the job.

The ability to build new versions of the interface software for different platforms without needing to worry about porting over the BASIC interpreter seems sensible considering there were versions of SmartMove available for DOS, BBC Master, RISC OS and Apple Macintosh. All could use the same AL.COD but would just need to provide the relevant UI, input and output routines specific to their host platforms.

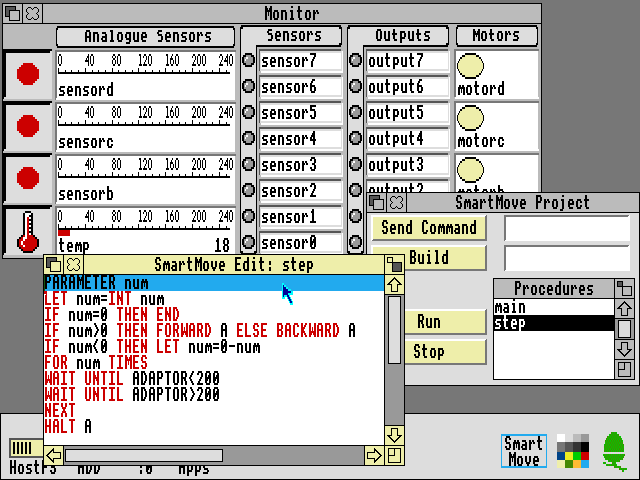

I had been using the DOS version of SmartMove as the initial inspiration for the user interface of my C#/WinForms implementation; however, the screenshots of the RISC OS version in the user manual looked rather more visually appealing and an archived copy of this software was available. Unfortunately, I don't own an Acorn Archimedes, I was unable to get the software running properly on modern RISC OS on a Raspberry Pi (even with various compatibility shims in place) and I couldn't find an emulator that handled the serial port in a similar fashion to DOSBox-X. However, the excellent Arculator has source code available and armed with a copy of the 6651 UART datasheet I thought I'd have a go at hacking in the feature myself.

"Hack" is definitely the operative word and though my code is abominable it does work well enough to get the available RISC OS software working on my PC. It's downloadable from here and requires the addition of the host PC's serial port name to arc.cfg (e.g. serial_port = COM1).

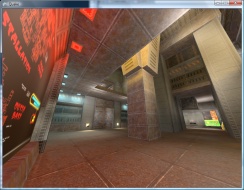

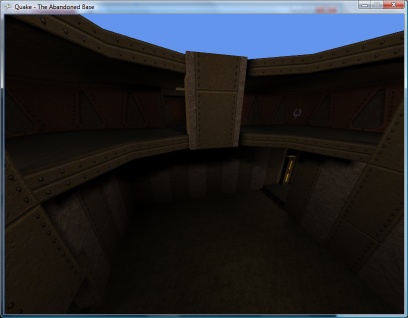

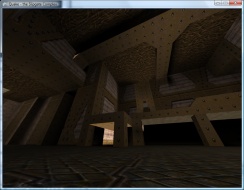

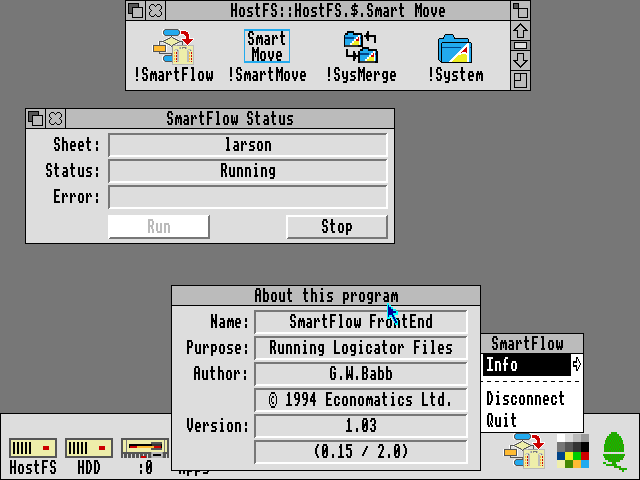

The above screenshot shows the RISC OS version of SmartMove which provided some additional inspiration for how a GUI version of the software should work. As I'd previously loaded some procedures onto the SmartBox via my C# version I could then bring the same procedures up for editing in the RISC OS version of the software. Handy!

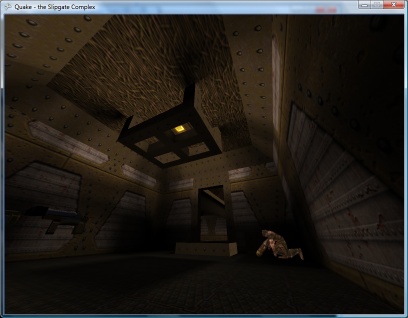

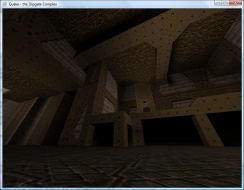

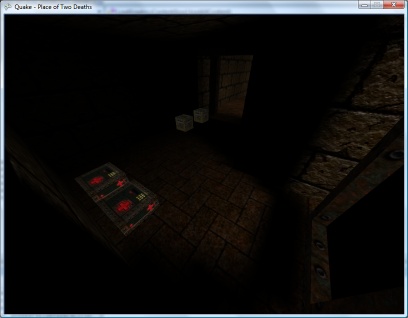

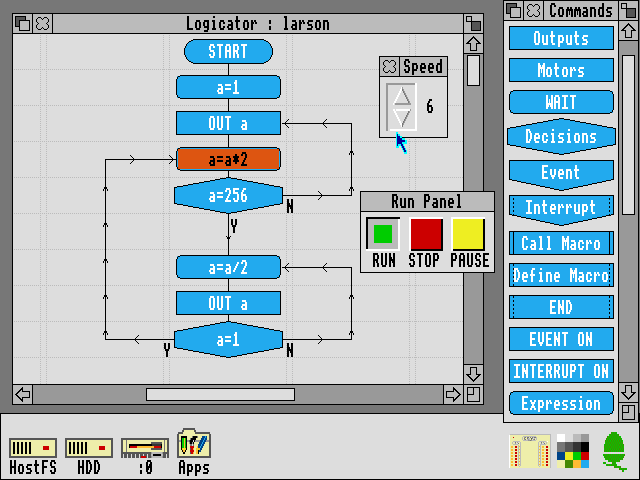

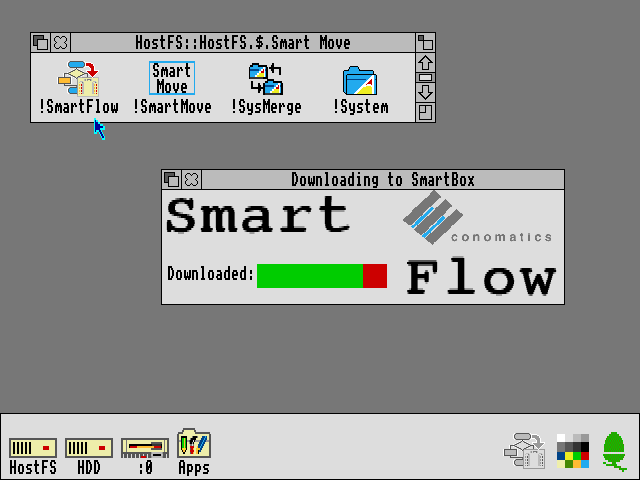

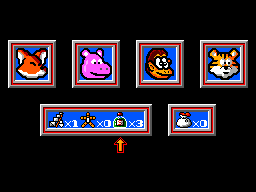

The Logicator software can be used to build programs using flowcharts instead of a BASIC-like programming language. As far as I'm aware Logicator directly accesses the inputs and outputs from a number of different host interface boxes and doesn't rely on 65C02 code loaded onto the box like SmartMove, but this does mean that when you close Logicator your program stops as it's relying on the host PC to run the show. However, included with the archive of RISC OS software is an application called SmartFlow which first loads a flow chart "interpreter" into the SmartBox:

Once loaded you can then load a Logicator-format flowchart onto the SmartBox where it can be run without being connected to a host PC:

All in all it's been quite interesting to dig into the SmartBox and get a feel for how it works and what can be done with it. To this end I recorded a video demonstration of the SmartBox and its usage within RISC OS, though so far I feel I'm only really scratching the surface!

360 degree photos from Lego, a PICAXE, C# and JavaScript

Friday, 9th July 2010

As you may have guessed from the ratio of photos to actual content in my entries I do quite enjoy taking photos of things. One of the reasons I enjoy working with electronics over writing software for computers is that a finished product results in something physical, which I find much more rewarding than a purely virtual hobby.

One type of photograph I particularly enjoy on other websites is the interactive 360° view of a product. The ability to click and drag to rotate an object on the screen makes it seem more real.

What do you need to take this sort of photograph and show it on a web page? There are four components I could think of:

- A rotating platform that could be controlled to rotate to a specific angle.

- A fixed camera that can be triggered once the platform has advanced to the correct angle.

- A way to combine all of the photos taken at different angles into a single file.

- A piece of code that would allow the user to rotate the object on-screen and display the correct single view of the object.

My final solution is a bit of a Heath Robinson affair but it seems to work quite well!

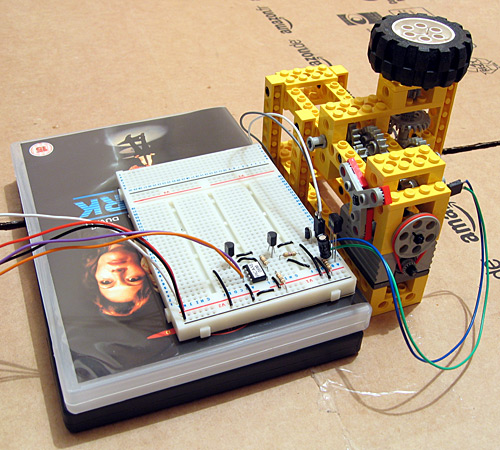

The rotating platform

The most obvious way to build such a platform is to use a stepper motor, as that is specifically designed to be positioned to a particular angle. The problem is that I don't have any stepper motors, and even if I did it would be quite tricky to connect one to a platform. A more practical alternative is to use something I do have — Lego Technic.

A Lego motor cannot be set to rotate to a particular position, so some additional electronics are required. The motor drives a worm gear which in turn rotates a three-bladed propeller relatively slowly (shown with red pieces attached to it in the photo). This propeller cuts the path of a beam of infra-red light between an LED and an infra-red receiver module. A microcontroller (in this case, a PICAXE-08M) is used to advance the platform in steps by switching the motor on, waiting for the beam to be unblocked, waiting for the beam to be blocked again then switching the motor off. The gears I am using have twenty-four or eight teeth, so each pair of gears divides the rotational speed by 24/8=3. I am using four pairs of gears which results in a division of 3⁴=81. The propeller has three blades which further divides the rotational speed by three resulting in the ability to set the platform to 81×3=243 distinct angles.
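As a quick check of that arithmetic, here is a small JavaScript sketch. The variable names are illustrative rather than taken from the project; the only inputs are the tooth counts, the number of gear pairs and the blade count quoted above.

```javascript
// Gear train as described in the text: each 24:8 pair divides the speed by 3.
const teethLarge = 24;
const teethSmall = 8;
const gearPairs = 4;
const propellerBlades = 3;

const ratioPerPair = teethLarge / teethSmall;                // 3
const gearReduction = Math.pow(ratioPerPair, gearPairs);     // 3^4 = 81
const stepsPerRevolution = gearReduction * propellerBlades;  // 81 * 3 = 243

// Angular resolution of one "step" of the platform.
const degreesPerStep = 360 / stepsPerRevolution;

console.log(stepsPerRevolution);         // 243
console.log(degreesPerStep.toFixed(3));  // 1.481
```

Each step therefore moves the platform by roughly 1.5 degrees.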

    ' This code is for a PICAXE-08M
    #PICAXE 08M

    ' This pin is used to generate the 38kHz IR carrier. It should be connected to the IR LED's cathode (-).
    Symbol IRPwmPin = 2

    ' This pin is connected to the IR demodulator's output.
    Symbol IRReceiverPin = Pin3

    ' This pin is connected to the motor enable output.
    Symbol MotorPin = 4

    Symbol SerialControlIn = 1

    ' The desired position of the "stepper" motor.
    Symbol StepDesired = B8
    ' The current position of the "stepper" motor.
    Symbol StepCurrent = B9

    Symbol StepDesiredConfirm = B10
    Symbol StepDesiredPotential = B11

    ' Returned from the CheckBeam routine.
    Symbol BeamBlocked = B12

    ' Rather than spin once at a time (slow) spin up to this many times between exchanging position information with the computer.
    Symbol SpinLoopCount = 3
    ' Stores the spin loop time.
    Symbol SpinLoop = B13

    ' The number of steps in a complete revolution.
    Symbol TotalSteps = 243

    Main:

        ' Reset the current and desired steps.
        StepDesired = 0
        StepCurrent = 0

        ' Switch the motor off.
        Low MotorPin

        'StepDesiredConfirmCount = 0

        Do
            ' Fetch the desired position.
            SetFreq M8
            SerIn SerialControlIn, N4800_8, (CR, LF), #StepDesiredPotential, #StepDesiredConfirm
            SetFreq M4

            ' Check the received data - the second value should be the logical inversion of the first.
            StepDesiredConfirm = Not StepDesiredConfirm
            If StepDesiredPotential = StepDesiredConfirm Then
                StepDesired = StepDesiredPotential
            End If

            ' Adjust the position if required.
            For SpinLoop = 1 To SpinLoopCount

                ' Broadcast the current step position.
                SerTxd(#StepCurrent, ",", #StepDesired, CR, LF)

                ' Do we need to run the motor?
                If StepCurrent <> StepDesired Then

                    ' Switch the motor on.
                    High MotorPin

                    Pause 20

                    ' Wait for the beam to be unblocked.
                    Do
                        GoSub CheckBeam
                    Loop Until BeamBlocked = 0

                    Pause 20

                    ' Wait for the beam to become blocked again.
                    Do
                        GoSub CheckBeam
                    Loop Until BeamBlocked = 1

                    ' Switch the motor off.
                    Low MotorPin

                    ' Increment step current to indicate a change of step.
                    Inc StepCurrent
                    If StepCurrent = TotalSteps Then
                        StepCurrent = 0
                    End If

                End If

            Next SpinLoop

        Loop

    ' Checks whether the beam is blocked or not.
    ' Returns BeamBlocked = 0 for an unblocked beam, BeamBlocked = 1 for a blocked beam.
    CheckBeam:
        PwmOut IRPwmPin, 25, 53 ' 38kHz, calculated via PICAXE->Wizards->pwmout
        Pause 1
        BeamBlocked = IRReceiverPin
        PwmOut IRPwmPin, Off
        Return

The BASIC program on the PICAXE constantly outputs the current position and desired position via the serial programming cable as ASCII in the format <current>,<desired><CR><LF>. On every loop it also checks for a new desired position via a serial input pin (sadly not the one used for programming the PICAXE as that is not permitted on the 08M) in the format <CR><LF><desired>,<~desired>. (again in ASCII). The desired position is transmitted twice, once normally and the second time inverted (all zero bits set to one and all one bits set to zero) as a simple form of error detection; should the second value received not be a logical inversion of the first then the value is discarded.
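To make the two packet formats concrete, the following JavaScript sketch builds and checks them. It is an illustration only, not the code used on either end of the link: the function names are made up, and it assumes the inverted value is an 8-bit inversion sent as a decimal number, with the trailing full stop acting as a terminator.

```javascript
// Build a command packet in the form <CR><LF><desired>,<~desired>.
function encodeDesired(desired) {
  const inverted = (~desired) & 0xFF; // 8-bit logical inversion
  return "\r\n" + desired + "," + inverted + ".";
}

// Parse a command packet. Returns the desired position, or null when the
// second value is not the 8-bit inversion of the first (the packet is discarded).
function decodeDesired(packet) {
  const match = /^\r\n(\d+),(\d+)\.$/.exec(packet);
  if (!match) return null;
  const value = parseInt(match[1], 10);
  const check = parseInt(match[2], 10);
  return value === ((~check) & 0xFF) ? value : null;
}

// Parse a status line (<current>,<desired>) with its trailing <CR><LF> removed.
function decodeStatus(line) {
  const match = /^(\d+),(\d+)$/.exec(line);
  if (!match) return null;
  return { current: parseInt(match[1], 10), desired: parseInt(match[2], 10) };
}

console.log(JSON.stringify(encodeDesired(100))); // "\r\n100,155."
console.log(decodeDesired("\r\n100,155."));      // 100
console.log(decodeDesired("\r\n100,154."));      // null (corrupted, so discarded)
```

Since 243 steps fit in a single byte, every valid position has a well-defined 8-bit inverse.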

A copy of the schematic can be downloaded by clicking the above thumbnail. It is pretty simple; serial data is input on pin IN1 (move the serial input from the programming cable from SERIAL_IN to IN1), an IR LED is driven from pin PWM2 via a current-limiting resistor, an IR receiver sends its input to pin IN3, a Darlington pair drives the motor via pin OUT4 and information is sent out via the SERIAL_OUT pin (no need to move the programming cable for that one).

Triggering the camera

My camera does not have a standard remote control, but does have some software that allows you to capture shots when it's connected to your USB port. Unfortunately the Canon PowerShot SDK is rather old and is no longer maintained, which means that any software that uses it is bound to its bugs and limitations. One of its bigger problems is that it doesn't work on Vista; by setting the Remote Capture utility into XP compatibility mode I could set up a shot and see a live viewfinder but attempting to release the shutter caused the app to hang for about a minute before claiming the camera had been disconnected.

Fortunately VirtualBox emulates USB and serial ports so I set up Windows XP in a virtual machine and installed the Remote Capture utility. It still doesn't work very well (taking about thirty seconds between releasing the shutter and transferring the image) but it's better than nothing.

To control the platform I use the following C# code. It's very poorly written: you need to make sure that you quickly set the Remote Capture application as the foreground window when you start it, for example, and it has a hard-coded 10 second delay after taking the photo to transfer the photo from the camera to the PC (when my camera's batteries started going flat it started to drop frames).

    using System;
    using System.Globalization;
    using System.IO.Ports;
    using System.Text;
    using System.Text.RegularExpressions;
    using System.Threading;
    using System.Windows.Forms;
    using System.Diagnostics;
    using System.Linq;

    class Program {

        const int StepsInRevolution = 243;

        enum ApplicationState {
            AligningStepper,
            WaitingStepperAligned,
            WaitingStartPistol,
            Photographing,
            Exiting,
        }

        static void Main(string[] args) {

            StringBuilder receivedData = new StringBuilder();

            using (var serialPort = new SerialPort("COM1", 4800, Parity.None, 8, StopBits.Two)) {

                serialPort.WriteTimeout = 1;
                serialPort.Open();

                var packetFieldsRegex = new Regex(@"^(\d+),(\d+)$");

                int? currentPosition = null;
                int desiredPosition = 0;
                int? confirmedDesiredPosition = null;

                int startPosition = 0;
                int angleCount = 64;
                int currentAngle = 0;

                serialPort.DataReceived += new SerialDataReceivedEventHandler((sender, e) => {
                    if (e.EventType == SerialData.Chars) {
                        receivedData.Append(serialPort.ReadExisting());
                        string receivedDataString;
                        int newLinePosition;
                        while ((newLinePosition = (receivedDataString = receivedData.ToString()).IndexOf("\r\n")) != -1) {
                            var packet = receivedDataString.Substring(0, newLinePosition);
                            receivedData = receivedData.Remove(0, packet.Length + 2);
                            var packetFields = packetFieldsRegex.Matches(packet);
                            if (packetFields.Count == 1) {
                                currentPosition = int.Parse(packetFields[0].Groups[1].Value, CultureInfo.InvariantCulture);
                                confirmedDesiredPosition = int.Parse(packetFields[0].Groups[2].Value, CultureInfo.InvariantCulture);
                            }
                        }
                    }
                });

                ApplicationState appState = ApplicationState.AligningStepper;

                // Main loop.
                while (appState != ApplicationState.Exiting) {

                    // Update the stepper position.
                    try {
                        serialPort.Write(string.Format(CultureInfo.InvariantCulture, "\r\n{0},{1}.", desiredPosition, (byte)~desiredPosition));
                    } catch (TimeoutException) {
                        serialPort.DiscardOutBuffer();
                    }
                    Thread.Sleep(10);

                    // What are we doing?
                    switch (appState) {
                        case ApplicationState.AligningStepper:
                            if (currentPosition.HasValue) {
                                desiredPosition = (currentPosition.Value + 5) % StepsInRevolution;
                                appState = ApplicationState.WaitingStepperAligned;
                            }
                            break;
                        case ApplicationState.WaitingStepperAligned:
                            if (currentPosition.Value == desiredPosition) {
                                startPosition = desiredPosition;
                                appState = ApplicationState.WaitingStartPistol;
                                //while (Console.KeyAvailable) Console.ReadKey(true);
                                //Console.WriteLine("Press any key to start rotating...");
                            }
                            break;
                        case ApplicationState.WaitingStartPistol:
                            //while (Console.KeyAvailable) {
                            //    Console.ReadKey(true);
                                appState = ApplicationState.Photographing;
                            //}
                            break;
                        case ApplicationState.Photographing:
                            if (currentPosition == desiredPosition) {
                                Console.Write("Taking photo {0} of {1}...", currentAngle + 1, angleCount);
                                SendKeys.SendWait(" ");
                                Thread.Sleep(10000);
                                Console.WriteLine("Done!");
                                // Stop once angleCount photos have been taken.
                                if (++currentAngle == angleCount) {
                                    appState = ApplicationState.Exiting;
                                } else {
                                    desiredPosition = (startPosition + (currentAngle * StepsInRevolution) / angleCount) % StepsInRevolution;
                                }
                            }
                            break;
                    }
                }

                Console.WriteLine("Done.");
                Console.ReadKey(true);
            }
        }
    }

It was meant to prompt to press a key before starting to allow you to re-align the object to the starting position (if required) but this would switch focus away from the Remote Capture utility. I'll probably fix this to switch the focus explicitly to the Remote Capture utility before sending the key to trigger a capture, and will also add code that polls the photo destination directory to spot when the file has been downloaded from the camera instead of the hard-coded 10 second delay. Working in the virtual machine and with the buggy Remote Capture utility is a frustrating endeavour so I left it as it is for the time being!

Stitching the photos together

Once the photos had been taken they needed to be stitched together into a single file. I decided to use 64 angles for a complete revolution as this seemed a good trade-off between fine control over rotation and a decent file size. It also allowed the images to be arranged into a neat 8×8 grid.
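The 243 available steps do not divide evenly into 64 angles, so each photo has to be mapped to the nearest whole step. The JavaScript sketch below mirrors the integer arithmetic used for the desired position in the C# capture program above; the function name is illustrative.

```javascript
const STEPS_IN_REVOLUTION = 243;

// Map photo number angleIndex (0-based) of angleCount onto a platform step,
// using integer division as the C# control program does.
function stepForAngle(startPosition, angleIndex, angleCount) {
  const offset = Math.floor((angleIndex * STEPS_IN_REVOLUTION) / angleCount);
  return (startPosition + offset) % STEPS_IN_REVOLUTION;
}

console.log(stepForAngle(0, 1, 64));  // 3
console.log(stepForAngle(0, 2, 64));  // 7
console.log(stepForAngle(5, 63, 64)); // 1 (wraps past step 242)
```

Because 243/64 is a little under 3.8, consecutive photos end up either 3 or 4 steps apart, so the spacing between frames is close to even but not exactly so.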

I first used VirtualDub to crop each image. VirtualDub allows you to open an image sequence and export to an image sequence so it seemed ideal for the task. Once I had the object neatly cropped I stitched all of them together into a large single PNG file using the following C# program:

    using System;
    using System.Drawing;
    using System.IO;
    using System.Text.RegularExpressions;

    class Program {

        static void Main(string[] args) {

            var middleImage = 14; // Index of the "middle" (default angle) image.

            var nameRegex = new Regex(@"Processed(\d{2})");

            var images = new Bitmap[64];
            try {

                foreach (var file in Directory.GetFiles(@"D:\Documents\Pictures\Digital Photos\Projects\Line Blanker\Insides 360\Processed", "*.png")) {
                    var matches = nameRegex.Matches(file);
                    if (matches.Count == 1) {
                        images[int.Parse(matches[0].Groups[1].Value)] = new Bitmap(file);
                    }
                }

                var maxSize = new Size(0, 0);
                for (int i = 0; i < images.Length; i++) {
                    if (images[i] == null) {
                        Console.WriteLine("Image {0} missing!", i);
                    } else {
                        maxSize = new Size(Math.Max(images[i].Width, maxSize.Width), Math.Max(images[i].Height, maxSize.Height));
                    }
                }

                using (var finalImage = new Bitmap(maxSize.Width * 8, maxSize.Height * 8)) {
                    using (var g = Graphics.FromImage(finalImage)) {
                        g.PixelOffsetMode = System.Drawing.Drawing2D.PixelOffsetMode.Half;
                        for (int x = 0; x < 8; ++x) {
                            for (int y = 0; y < 8; ++y) {
                                var image = images[(x + y * 8 + middleImage) % images.Length];
                                if (image != null) {
                                    g.DrawImage(image, new Point(x * maxSize.Width + (maxSize.Width - image.Width) / 2, y * maxSize.Height + (maxSize.Height - image.Height) / 2));
                                }
                            }
                        }
                    }
                    finalImage.Save("out.png");
                }

            } finally {
                for (int i = 0; i < images.Length; i++) {
                    if (images[i] != null) {
                        images[i].Dispose();
                        images[i] = null;
                    }
                }
            }
        }
    }

The program requires that the input images are named Processed00.png to Processed63.png, which is easily arranged when exporting an image sequence from VirtualDub. The resulting image can be tidied up in a conventional image editor.
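The layout rule used by the stitching program can be restated as a pair of small functions. This JavaScript sketch is a paraphrase for illustration, with made-up function names: the top-left cell holds the "middle" image, later cells continue through the sequence and wrap around, and each image is centred within its cell.

```javascript
// Which source image goes in grid cell (x, y)? Cell (0, 0) holds the "middle"
// image, and the sequence wraps around after the last image.
function imageIndexForCell(x, y, columns, imageCount, middleImage) {
  return (x + y * columns + middleImage) % imageCount;
}

// Top-left pixel at which an image is drawn so it sits centred in its cell.
function cellOrigin(x, y, cellWidth, cellHeight, imageWidth, imageHeight) {
  return {
    left: x * cellWidth + Math.floor((cellWidth - imageWidth) / 2),
    top: y * cellHeight + Math.floor((cellHeight - imageHeight) / 2)
  };
}

console.log(imageIndexForCell(0, 0, 8, 64, 14)); // 14
console.log(imageIndexForCell(7, 7, 8, 64, 14)); // 13
console.log(cellOrigin(1, 2, 320, 240, 300, 200)); // { left: 330, top: 500 }
```

Putting the default view in the first cell means a viewer that starts at background position 0,0 shows the preferred angle without any extra configuration.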

Embedding the result on a web page

The final bit of code required is to allow the 360° image to be embedded and manipulated on a web page. I opted to use JavaScript for this task as it seemed the lightest and simplest way to work.

    if (typeof(Rotate360) == 'undefined') {

        var Rotate360 = new Class({

            Implements : [Options, Events],

            options : {
                width : 320,
                height : 240,
                container : null,
                element : null
            },

            sign : function(v) {
                return (v > 0) ? +1 : (v < 0 ? -1 : 0);
            },

            initialize : function(source, options) {

                this.setOptions(options);
                this.source = source;

                var rotate360 = this;

                this.element = new Element('div', {
                    'class' : 'rotate360',
                    styles : {
                        width : this.options.width + 'px',
                        height : this.options.height + 'px',
                        background : 'transparent no-repeat url("' + this.source + '") scroll 0 0'
                    },
                    events : {
                        mouseenter : function(e) {
                            if (typeof(rotate360.mouseHandlerDiv) != 'undefined') {
                                var myPosition = rotate360.element.getCoordinates();
                                rotate360.mouseHandlerDiv.setStyles({
                                    left : myPosition.left + 'px',
                                    top : myPosition.top + 'px',
                                    width : myPosition.width + 'px',
                                    height : myPosition.height + 'px'
                                });
                            }
                        }
                    }
                });

                this.mouseHandlerDiv = new Element('div', {
                    styles : {
                        position : 'absolute',
                        cursor : 'e-resize'
                    },
                    events : {
                        mousemove : function(e) {
                            if (typeof(rotate360.mouseHeld) != 'undefined' && rotate360.mouseHeld && typeof(rotate360.previousPageX) != 'undefined' && typeof(rotate360.previousPageY) != 'undefined') {

                                var currentBackgroundPosition = rotate360.element.getStyle('background-position').split(' ');
                                currentBackgroundPosition[0] = parseInt(currentBackgroundPosition[0]);
                                currentBackgroundPosition[1] = parseInt(currentBackgroundPosition[1]);

                                if (typeof(rotate360.rotateX) == 'undefined') rotate360.rotateX = 0;
                                rotate360.rotateX += (e.page.x - rotate360.previousPageX) / (360 * (rotate360.options.width / 270) / ((rotate360.image.width * rotate360.image.height) / (rotate360.options.width * rotate360.options.height)));

                                var workingAngle = parseInt(rotate360.rotateX);

                                currentBackgroundPosition[0] = -rotate360.options.width * (workingAngle % (rotate360.image.width / rotate360.options.width));
                                currentBackgroundPosition[1] = -rotate360.options.height * Math.floor(workingAngle / (rotate360.image.height / rotate360.options.height));

                                while (currentBackgroundPosition[0] > 0) currentBackgroundPosition[0] -= rotate360.image.width;
                                while (currentBackgroundPosition[0] <= -rotate360.image.width) currentBackgroundPosition[0] += rotate360.image.width;

                                while (currentBackgroundPosition[1] > 0) currentBackgroundPosition[1] -= rotate360.image.height;
                                while (currentBackgroundPosition[1] <= -rotate360.image.height) currentBackgroundPosition[1] += rotate360.image.height;

                                rotate360.element.setStyle('background-position', currentBackgroundPosition[0] + 'px ' + currentBackgroundPosition[1] + 'px');

                                rotate360.previousPageX = e.page.x;
                                rotate360.previousPageY = e.page.y;

                            } else {
                                rotate360.previousPageX = e.page.x;
                                rotate360.previousPageY = e.page.y;
                            }
                        },
                        mousedown : function(e) {
                            e.stop();
                            rotate360.mouseHeld = true;
                            rotate360.mouseHandlerDiv.setStyles({
                                left : 0,
                                width : '100%'
                            });
                        },
                        mouseup : function(e) {
                            e.stop();
                            rotate360.mouseHeld = false;
                            rotate360.element.fireEvent('mouseenter');
                        }
                    }
                }).inject(document.body, 'top');

                this.image = new Asset.image(this.source, {
                    onload : function() {
                        if (rotate360.options.element) {
                            rotate360.element.replaces(rotate360.options.element);
                        } else if (rotate360.options.container) {
                            rotate360.options.container.adopt(rotate360.element);
                        }
                    }
                });
            }
        });

        window.addEvent('domready', function() {
            $$('img.rotate360').each(function(rotate360) {
                var src = rotate360.src.replace(/\.([a-zA-Z]+)$/, '_360.$1');
                var img = new Asset.image(src, {
                    onload : function() {
                        new Rotate360(img.src, {
                            width : rotate360.width,
                            height : rotate360.height,
                            element : rotate360
                        });
                    }
                });
            });
        });

    }

The above code requires MooTools (both "core" and "more" for its Asset classes). It can be invoked manually or (preferably) will replace any image with a class of rotate360 with the 360° version — if the file was example.jpg the 360° version would be example_360.jpg.
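Two ideas carry most of the weight here: the file naming convention, and the way a frame number selects one tile of the sprite sheet via the CSS background position. The following plain JavaScript sketch isolates both without MooTools. It is a simplified restatement under the assumption of a regular grid, not a copy of the class above, and the function names are illustrative.

```javascript
// example.jpg -> example_360.jpg (same substitution as the domready handler).
function to360Source(src) {
  return src.replace(/\.([a-zA-Z]+)$/, "_360.$1");
}

// Background offset, in pixels, that shows the given frame of a sprite sheet
// laid out as a columns x rows grid. Frame numbers wrap in both directions.
function backgroundOffset(frame, columns, rows, frameWidth, frameHeight) {
  const total = columns * rows;
  const wrapped = ((frame % total) + total) % total; // handles negative frames
  const column = wrapped % columns;
  const row = Math.floor(wrapped / columns);
  // Subtract from zero so frame 0 yields 0 rather than -0.
  return { x: 0 - column * frameWidth, y: 0 - row * frameHeight };
}

console.log(to360Source("example.jpg"));          // example_360.jpg
console.log(backgroundOffset(9, 8, 8, 320, 240));  // { x: -320, y: -240 }
console.log(backgroundOffset(-1, 8, 8, 320, 240)); // { x: -2240, y: -1680 }
```

The offsets are negative because the background image is shifted up and to the left so that the wanted tile lines up with the element's visible area.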

Examples

I've taken photos of a few of my previous projects using this technique — USB remote control, AVR TV game and VGA line blanker. The process could use some refinement but it certainly seems to work!

IM-me wireless terminal

Thursday, 14th January 2010

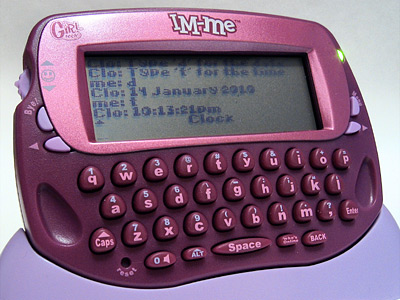

A recent post on Hack a Day alerted me to the IM-me, a device designed to be used with a web-based IM service that communicates with the PC via a USB wireless adaptor.

According to Hunter Davis, the bodies of the messages sent between the PC and the IM-me are in plain text. This sounded like a good start to me, so I picked one up from Amazon UK for £7.49 (they're now available for even less than that). You get a lot of electronics for that price; there's a CC1110F32 microcontroller inside (the chips inside the device and its wireless adaptor are clearly marked – no nameless blob of epoxy that you might have expected from the price) and Dave has poked around the insides of his and has mapped the contact pads exposed via the battery compartment to the debug port on the microcontroller. You could use this debug port to overwrite the stock firmware with your own if the fancy took you. However, I'm more interested in seeing what I can do with the device without writing my own firmware for it.

The wireless adaptor shows up in Windows as a simple USB HID, so I installed SnoopyPro and logged a chat session with myself. Fortunately, there is indeed no obfuscation or encryption to the structure of messages. I have worked on a C# library that handles most of the different message types (no group chat yet, only direct contact-to-contact) and written up what I've found here. The C# code can be found here, though it is not especially robust yet.

I think that my main problem is a poor grasp of asynchronous I/O. I read data asynchronously, but write synchronously, and don't currently do anything to protect against my code "speaking over" the incoming data. If you output data when the device is half way through sending a packet, it seems to ignore the data you're sending it. In the case of long messages, which are made up of multiple packets sent in rapid succession, they don't appear to ever reach the device. The USB device responds with a single 0 byte after a packet is written to it, which I don't currently wait for. I'm not sure how you can, when mixing asynchronous reading and synchronous writing, so if anyone has any suggestions or links to reading material I'd greatly appreciate it!
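One common way to combine asynchronous reads with ordered writes is to queue outgoing packets and release the next one only when the previous one has been acknowledged. The JavaScript sketch below shows the idea in a transport-agnostic form. It is not the library's code: the class and method names are invented, `send` stands in for whatever actually writes to the device, and it assumes the acknowledging 0 byte can be told apart from ordinary incoming packet data, which would need checking against the real protocol. It also does nothing about writes that collide with a packet the device is part-way through sending.

```javascript
// Queue outgoing packets; send the next only after the previous is acknowledged.
class AckGatedWriter {
  constructor(send) {
    this.send = send;          // hypothetical function that writes one packet
    this.queue = [];
    this.awaitingAck = false;
  }

  // Called by application code that wants to transmit a packet.
  enqueue(packet) {
    this.queue.push(packet);
    this.pump();
  }

  // Called by the asynchronous read handler for each acknowledgement
  // candidate (assumption: a single 0 byte means "packet accepted").
  onByteReceived(value) {
    if (this.awaitingAck && value === 0) {
      this.awaitingAck = false;
      this.pump();
    }
  }

  // Send the next queued packet if nothing is outstanding.
  pump() {
    if (this.awaitingAck || this.queue.length === 0) return;
    this.awaitingAck = true;
    this.send(this.queue.shift());
  }
}

// Usage: three packets are queued, but only one is in flight at a time.
const sentPackets = [];
const writer = new AckGatedWriter(packet => sentPackets.push(packet));
writer.enqueue("part 1");
writer.enqueue("part 2");
writer.enqueue("part 3");
console.log(sentPackets.length); // 1
writer.onByteReceived(0);
console.log(sentPackets.length); // 2
```

A production version would also want a timeout so that a lost acknowledgement does not stall the queue indefinitely.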

I have no intention of going near the existing IM-me web service – being able to use the IM-me as a general-purpose wireless terminal to talk to your own software opens up a wealth of possibilities. You could set it up to notify you of new emails, read RSS feeds, post updates to social networking sites, use it as a home automation console, remote control a media PC... You may wish to paint it black first, though!

Addendum: Whoops, after refactoring some code I broke the checksum generation. It appears that the IM-me ignores the checksum when receiving messages. I have stuck a brief pause between each byte written to the device and a slightly longer one between each packet sent to the device, and I can now send long messages to it.

RC-5, NEC, JVC and Panasonic infrared codes

Wednesday, 3rd June 2009

I've rewritten the remote control signal decoding software to handle multiple protocols. As well as SIRCS, it now supports RC-5, NEC, JVC and two Panasonic codes (one "old" 11-bit code and one "new" 48-bit code). There's not much in the way of screenshots at the moment, other than a debug window that gets filled when keys are pressed:

NecCommand Address=24, Command=87, Extended=False, Repeat=1

RC5Command Address=20, Command=53, Repeat=True, Repeat=1

NewPanasonicCommand OEM Device 1=2, OEM Device 2=32, Device=144, Sub-Device=0, Command=10, Repeat=1

RC5Command Address=8, Command=35, Repeat=True, Repeat=1

OldPanasonicCommand Address=0, Command=20, Repeat=1

SircsCommand Address=2362, Command=121, Length=20, Repeat=1

SircsCommand Address=7002, Command=84, Length=20, Repeat=1

NecCommand Address=64, Command=146, Extended=False, Repeat=1

NecCommand Address=81, Command=8, Extended=False, Repeat=1

JvcCommand Address=3, Command=23, Repeat=1

Gripping stuff, I'm sure you'll agree.

The C# source code for this can be downloaded here.

A keyring remote control (courtesy of Poundland) has highlighted one possible issue in handling repeating buttons. Rather than target any particular device, it will try and brute-force a response. For example, here's the result of pressing the power button once in one particular mode:

SircsCommand Address=1, Command=21, Length=12, Repeat=2

OldPanasonicCommand Address=0, Command=32, Repeat=1

NecCommand Address=32, Command=11, Extended=False, Repeat=1

NewPanasonicCommand OEM Device 1=2, OEM Device 2=32, Device=128, Sub-Device=0, Command=61, Repeat=1

That's four different protocols from one button. I suppose some sort of mapping from protocol-specific code to a string (so those five commands would be translated into five "power" strings) and comparing the time between signals to turn the input into something meaningful may help, but that would require an enormous database of known codes.
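A minimal JavaScript sketch of that mapping idea follows. The table holds only the four codes logged above for the keyring remote's power button; the key format, the field names and the `time` field (milliseconds) are assumptions made for illustration, since the debug output carries no timestamps.

```javascript
// Hypothetical lookup table: "protocol:address:command" -> action name.
// For the new Panasonic code the Device value is used as the address.
const actionTable = new Map([
  ["Sircs:1:21", "power"],
  ["OldPanasonic:0:32", "power"],
  ["Nec:32:11", "power"],
  ["NewPanasonic:128:61", "power"]
]);

function actionFor(signal) {
  const key = signal.protocol + ":" + signal.address + ":" + signal.command;
  return actionTable.has(key) ? actionTable.get(key) : null;
}

// Collapse runs of the same action arriving within windowMs of each other
// into a single event; unknown codes are ignored.
function collapse(signals, windowMs) {
  const events = [];
  let lastAction = null;
  let lastTime = -Infinity;
  for (const signal of signals) {
    const action = actionFor(signal);
    if (action === null) continue;
    const isRepeat = action === lastAction && signal.time - lastTime <= windowMs;
    lastAction = action;
    lastTime = signal.time;
    if (!isRepeat) events.push({ action: action, time: signal.time });
  }
  return events;
}
```

Fed the five signals from a single press of the keyring's power button, `collapse` would report one "power" event provided they all arrive within the chosen window of one another. The drawback noted above still applies: the table has to be populated with known codes for every remote.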

C# emu2413

Thursday, 8th January 2009

This is fairly embarrassing; somebody sent me an email that was flagged as spam which I accidentally deleted. So if you sent me an email and I haven't replied, I'm not deliberately being rude; could you send it again?

After encountering strange crashes (not .NET exceptions, full out crashes) with emu2413 I decided to port it from its existing C incarnation to straight C# instead (emu2413.h.cs and emu2413.c.cs). Even though the original was macro-heavy it was relatively simple to port, and so there's no dependency on an unmanaged DLL to generate FM sound any more. However, the C# version is significantly slower (Cogwheel now takes about 50% extra CPU time when FM sound is enabled), possibly due to many extraneous method calls that were macros in the original.

However, the emulator still crashes when FM sound is enabled. And I have no idea why, as it only happens in Release mode and outside the IDE. The Debug build works fine inside and outside the IDE, and Release mode works fine when run within the IDE.

Controller input updates to Cogwheel

Monday, 5th January 2009

I hope you all had a good Christmas and New Year period!

I received an Xbox 360 controller for Christmas, so have done a bit of work on Cogwheel to add support for it. (You can download a copy of the latest version 1.0.2.0 with SlimDX here).

The first issue to deal with was the D-pad on the Xbox 360 controller. When treated as a conventional joystick or DirectInput device the D-pad state is returned via the point-of-view (POV) hat. The joystick input source class couldn't raise events generated by the POV hat so support for that had to be added. This now allows other controllers that use the POV hat for slightly bizarre reasons (e.g. the faceplate buttons on the PlayStation controller when using PPJoy) to work too.
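For reference, DirectInput reports a POV hat as an angle in hundredths of a degree, measured clockwise from "up", with -1 (or a low word of 0xFFFF on some drivers) meaning centred. The JavaScript sketch below shows one way such a value could be turned into four D-pad flags. It is an illustration rather than Cogwheel's implementation, and the function name and the 45-degree sector boundaries are assumptions.

```javascript
// Convert a DirectInput-style POV value into D-pad button states.
function povToDPad(pov) {
  const centred = pov < 0 || (pov & 0xFFFF) === 0xFFFF;
  if (centred) {
    return { up: false, right: false, down: false, left: false };
  }
  const degrees = (pov / 100) % 360;
  // Each direction covers the cardinal angle plus both adjacent diagonals.
  return {
    up: degrees > 292.5 || degrees < 67.5,
    right: degrees > 22.5 && degrees < 157.5,
    down: degrees > 112.5 && degrees < 247.5,
    left: degrees > 202.5 && degrees < 337.5
  };
}

console.log(povToDPad(0));    // { up: true, right: false, down: false, left: false }
console.log(povToDPad(4500)); // { up: true, right: true, down: false, left: false }
console.log(povToDPad(-1));   // { up: false, right: false, down: false, left: false }
```

Diagonals set two flags at once, which matches how a console D-pad behaves when pressed towards a corner.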

The second issue was the slightly odd way that the Xbox 360's DirectInput driver returns the state of the triggers - as a single axis, with one trigger moving the axis in one direction, the other trigger moving it in the other. You cannot differentiate between both triggers being held and both being released, as both states return 0. To get around this, I've added support for XInput devices, where all buttons and triggers operate independently.
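The ambiguity is easy to demonstrate with a toy model. In this JavaScript sketch each trigger is a value from 0 (released) to 1 (fully held) and the combined axis is simply their difference. The sign convention and the function name are assumptions for illustration; only the "both states return 0" behaviour comes from the description above.

```javascript
// Toy model of a shared trigger axis: one trigger pushes the axis one way,
// the other trigger pushes it the opposite way.
function combinedTriggerAxis(leftTrigger, rightTrigger) {
  return leftTrigger - rightTrigger;
}

console.log(combinedTriggerAxis(0, 0)); // 0 (both released)
console.log(combinedTriggerAxis(1, 1)); // 0 (both held, indistinguishable)
console.log(combinedTriggerAxis(1, 0)); // 1
console.log(combinedTriggerAxis(0, 1)); // -1
```

Reading each trigger as its own value, as XInput allows, removes the collision between the first two cases.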

The Xbox 360 controller now shows up twice in the UI - once as an XInput device and again as a conventional joystick. Fortunately, you can check if a device is an XInput device by the presence of IG_ in its device ID. Here's some C# code that can be used to check whether a joystick is an XInput device or not.

    using System.Globalization;
    using System.Management;
    using System.Text.RegularExpressions;

    namespace CogwheelSlimDX.JoystickInput {

        /// <summary>
        /// Provides methods for retrieving the state from a joystick.
        /// </summary>
        public class Joystick {

            /* ... */

            /// <summary>
            /// Gets the vendor identifier of the <see cref="Joystick"/>.
            /// </summary>
            public ushort VendorId { get; private set; }

            /// <summary>
            /// Gets the product identifier of the <see cref="Joystick"/>.
            /// </summary>
            public ushort ProductId { get; private set; }

            /* ... */

            /// <summary>
            /// Determines whether the device is an XInput device or not. Returns true if it is, false if it isn't.
            /// </summary>
            public bool IsXInputDevice {
                get {
                    // Used to grab the VID/PID components from the device ID string.
                    var ParseIds = new Regex(@"([VP])ID_([\da-fA-F]{4})");

                    // Iterate over all PNP devices.
                    using (var QueryPnp = new ManagementObjectSearcher(@"\\.\root\cimv2", string.Format("Select * FROM Win32_PNPEntity"), new EnumerationOptions() { BlockSize = 20 })) {
                        foreach (var PnpDevice in QueryPnp.Get()) {

                            // Check if the DeviceId contains the tell-tale "IG_".
                            var DeviceId = (string)PnpDevice.Properties["DeviceID"].Value;
                            if (DeviceId.Contains("IG_")) {

                                // Check the VID/PID components against the joystick's.
                                var Ids = ParseIds.Matches(DeviceId);
                                if (Ids.Count == 2) {
                                    ushort? VId = null, PId = null;
                                    foreach (Match M in Ids) {
                                        ushort Value = ushort.Parse(M.Groups[2].Value, NumberStyles.HexNumber);
                                        switch (M.Groups[1].Value) {
                                            case "V": VId = Value; break;
                                            case "P": PId = Value; break;
                                        }
                                    }
                                    if (VId.HasValue && this.VendorId == VId && PId.HasValue && this.ProductId == PId) return true;
                                }
                            }
                        }
                    }
                    return false;
                }
            }

            /* ... */
        }
    }

When the joysticks are enumerated they are only added to the input manager if they are not XInput devices.

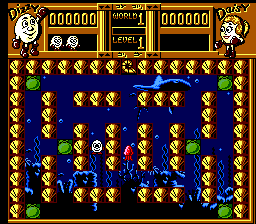

To round up the entry, here's a screenshot of a minesweeper clone I've been working on in BBC BASIC.

You can view/download the code here and it will run in the shareware version of BBC BASIC for Windows. The code has been deliberately uglified (cramming multiple statements onto a single line, few comments, trimmed whitespace) to try and keep it within the shareware version's 8KB limit as this is a good limit to keep in mind for the TI-83+ version too.

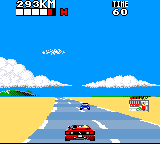

Sega Master System emulation in Silverlight

Monday, 15th December 2008

I've had to quickly learn Silverlight for work recently, which has been an interesting experience. I've had to write new code, which is fine but doesn't really excite me as far as Silverlight is concerned - it doesn't really matter which language new code is developed in, as long as it gets the job done.

What does interest me more is that Silverlight is ".NET in your browser", and I'm a big fan of .NET technology with a handful of .NET-based projects under my belt. Silverlight therefore gives me the opportunity to run these projects within the browser, which is a fun idea.

To this end, I've turned Cogwheel, a Sega 8-bit system emulator, into a Silverlight application. It took about an hour and a half, which was not as bad as I'd expected! (Skip to the bottom for instructions for the demo).

Raster graphics

Silverlight's raster graphics support is somewhat lacking. You can display raster graphics in Image elements, but - as far as I can see - that's about it. If you wish to generate and display images dynamically via primitive pixel-pushing, you're out of luck as far as Silverlight's class library is concerned.

Thankfully, Ian Griffiths has developed a class named PngGenerator that can speedily encode a PNG from an array of Colors that can then be displayed in an Image. Cogwheel's rasteriser returns pixel data as an array of integers so there's a small amount of overhead to convert these but other than that it's easy to push pixels, albeit in a fairly roundabout manner.

Render loop

The render loop is based around an empty Storyboard that invokes an Action every time it completes then restarts itself.

    using System;
    using System.Windows;
    using System.Windows.Media.Animation;

    namespace Cogwheel.Silverlight {
        public static class RenderLoop {

            public static void AttachRenderLoop(this FrameworkElement c, Action update) {
                var Board = new Storyboard();
                c.Resources.Add("RenderLoop", Board);
                Board.Completed += (sender, e) => {
                    if (update != null) update();
                    Board.Begin();
                };
                Board.Begin();
            }

            public static void DetachRenderLoop(this FrameworkElement c) {
                var Board = (Storyboard)c.Resources["RenderLoop"];
                Board.Stop();
                c.Resources.Remove("RenderLoop");
            }
        }
    }

I'm not sure if this is the best way to do it, but it works well enough and is easy to use - just grab any FrameworkElement (in my case the Page UserControl) and call AttachRenderLoop:

    private void UserControl_Loaded(object sender, RoutedEventArgs e) {
        this.UserControlRoot.AttachRenderLoop(() => {
            /* Update/render loop in here. */
        });
    }

Missing .NET framework class library features

This is the big one; Silverlight does not cover the entire .NET framework class library, and so bits of it are missing. Fortunately this can be resolved, the difficulty depending on how you want the functionality of the original app to be affected.

Missing types you're not interested in.

These are the easiest to deal with, and this includes attributes and interfaces that the existing code uses that you're not especially interested in. For example, Cogwheel uses some of .NET's serialisation features for save states - a feature I wasn't intending on implementing in the Silverlight version. The [Serializable] and [NonSerialized] attributes are not available in Silverlight, nor is the IDeserializationCallback interface. To get the project to compile some dummy types were created.

    namespace System {
        class SerializableAttribute : Attribute { }
        class NonSerializedAttribute : Attribute { }
        interface IDeserializationCallback {
            void OnDeserialization(object sender);
        }
    }

Missing types or methods that you don't mind partially losing.

Cogwheel features some zip file handling code that uses System.IO.Compression.DeflateStream, a class not available in Silverlight. Rather than remove the zip classes entirely (which would require modifications to other files that relied on them) it was easier to use conditional compilation to skip over the DeflateStream where required.

    switch (this.Method) {
        case CompressionMethod.Store:
            CompressingStream = CompressedStream;
            break;
    #if !SILVERLIGHT
        case CompressionMethod.Deflate:
            CompressingStream = new DeflateStream(CompressedStream, CompressionMode.Compress, true);
            break;
    #endif
        default:
            throw new NotSupportedException();
    }

Missing instance methods.

C# 3.0 adds support for extension methods - user-defined methods that can be used to extend the functionality of existing classes that you cannot modify directly. Silverlight is missing a number of instance methods on certain classes, such as string.ToLowerInvariant();. By using extension methods the missing methods can be restored.

    using System.Globalization; // Needed for CultureInfo.

    namespace System {
        public static class Extensions {
            public static string ToLowerInvariant(this string s) {
                return s.ToLower(CultureInfo.InvariantCulture);
            }
            public static string ToUpperInvariant(this string s) {
                return s.ToUpper(CultureInfo.InvariantCulture);
            }
        }
    }

Missing static methods.

These are the most work to fix, as extension methods can only add instance methods to a type, not static ones. This requires a change at the place the method is called as well as the code for the method itself.

I've got around this by creating new static classes with Ex appended to the name then using using to alias the types. For example, Silverlight lacks the Array.ConvertAll method.

    namespace System {
        static class ArrayEx {
            public static TOut[] ConvertAll<TIn, TOut>(TIn[] input, Func<TIn, TOut> fn) {
                TOut[] result = new TOut[input.Length];
                for (int i = 0; i < input.Length; i++) {
                    result[i] = fn(input[i]);
                }
                return result;
            }
        }
    }

First, a replacement method is written with Ex appended to the class name. Secondly, any file that contains a reference to the method has this added to the top:

    #if SILVERLIGHT
    using ArrayEx = System.ArrayEx;
    #else
    using System.IO.Compression;
    using ArrayEx = System.Array;
    #endif

Finally, anywhere in the code that calls Array.ConvertAll is modified to call ArrayEx.ConvertAll instead. When compiling for Silverlight it calls the new routine, otherwise it calls the regular Array.ConvertAll.

Demo

The links below launch the emulator with the selected ROM image.

To run your own ROM image, click on the folder image in the bottom-right corner of the browser window to bring up a standard open file dialog.

Zip files are not handled correctly, but if you type *.* into the filename box, right-click a zip file, pick Open, then select the ROM from inside that it should work (it does on Vista at any rate).

The cursor keys act as you'd expect; Ctrl or Z is button 1/Start; Alt, Shift or X is 2 (Alt brings up the menu in IE). Space is Pause if you use an SMS ROM and Start if you use a Game Gear ROM. Keys don't work at all in Opera for some reason, but they should work fine in IE 8 and Firefox 3. You may need to click on the application first!

Issues

There are a number of issues I have yet to address. Performance is an obvious one; it's a little choppy even with 100% usage of one of the cores on a Core 2 Duo. Sound is missing, and I'm not sure what Opera's doing with keys. Other than that, I thought it was a fun experiment.

Once I've tidied it up a bit I'll merge the source code with the existing source repository.

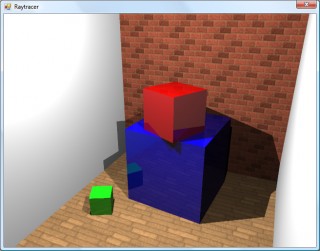

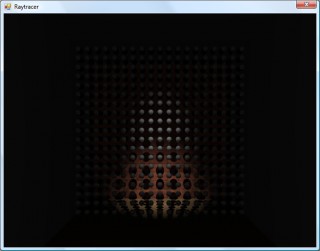

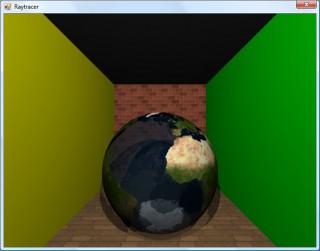

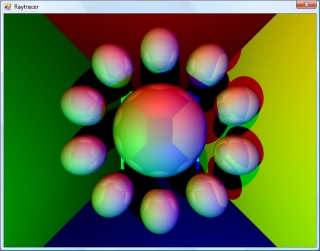

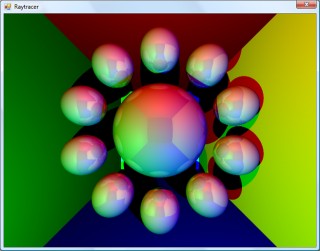

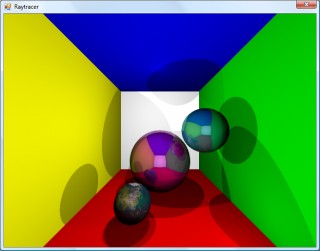

CSG, fisheye and spotlights.

Monday, 5th May 2008

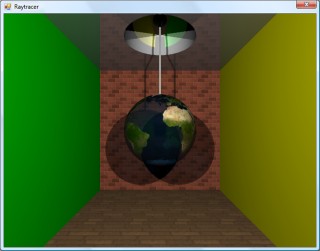

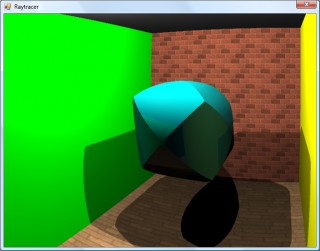

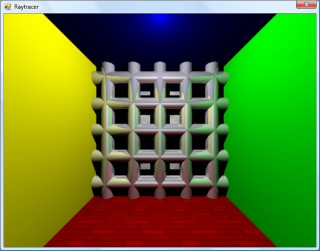

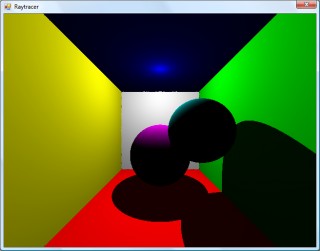

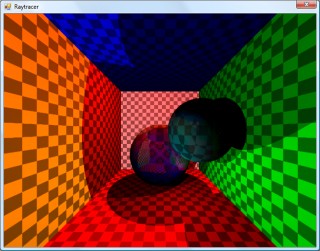

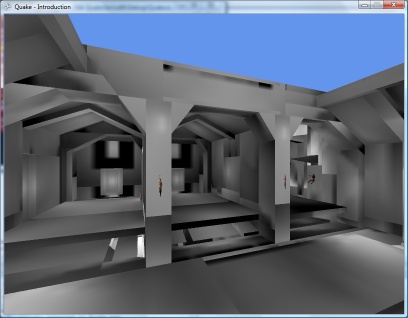

One way of constructing solids is to use a method named constructive solid geometry (or CSG for short). I added two simple CSG operators - intersection and subtraction - that both take two surfaces and return the result.

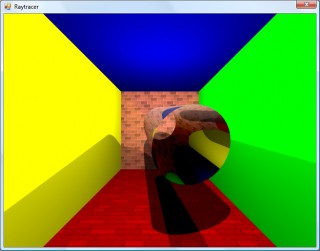

In the above image, the surface that makes up the ceiling is created by subtracting a sphere from a plane. Of course, much more interesting examples can be created. For example, here is the surface created by taking a sphere and subtracting three cylinders from it (each cylinder points directly along an axis).
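One common way to think about these operators is in terms of the span of distances along a ray for which the ray is inside each solid. The sketch below works on that basis for convex solids; it is only an illustration of the idea, and the `RaySpan` and `Csg` names are hypothetical rather than taken from the raytracer's source. A half-space such as a plane can be modelled with an infinite `Exit`.

```csharp
using System;
using System.Collections.Generic;

// The range of distances along a ray for which the ray is inside a convex solid.
public struct RaySpan
{
    public double Enter, Exit;
    public RaySpan(double enter, double exit) { Enter = enter; Exit = exit; }
    public bool IsEmpty { get { return Enter >= Exit; } }
}

public static class Csg
{
    // Intersection: the ray is inside the result only where it is inside both solids.
    public static RaySpan? Intersect(RaySpan a, RaySpan b)
    {
        var s = new RaySpan(Math.Max(a.Enter, b.Enter), Math.Min(a.Exit, b.Exit));
        return s.IsEmpty ? (RaySpan?)null : s;
    }

    // Subtraction: the parts of a that lie outside b (zero, one or two spans).
    public static List<RaySpan> Subtract(RaySpan a, RaySpan b)
    {
        var result = new List<RaySpan>();
        var before = new RaySpan(a.Enter, Math.Min(a.Exit, b.Enter));
        var after = new RaySpan(Math.Max(a.Enter, b.Exit), a.Exit);
        if (!before.IsEmpty) result.Add(before);
        if (!after.IsEmpty) result.Add(after);
        return result;
    }
}
```

The nearest visible surface is then the smallest positive `Enter` or `Exit` of the resulting spans; where a subtracted solid carves out a hole, the surface normal at its boundary needs flipping.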

One problem with the camera implementation was that it couldn't be rotated. To try and aid this, I used a spherical to cartesian conversion to generate the rays - which has the side-effect of images with "fisheye" distortion.

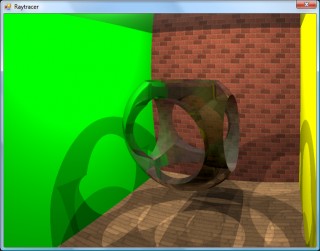

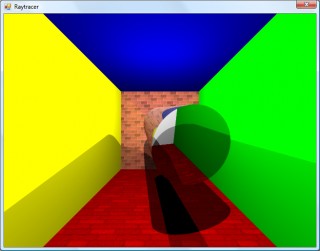

The above left image also demonstrates a small amount of refraction - something that I've not got working properly - through the surface. The above right image is the result of the intersection of three cylinders aligned along the x, y and z axes.

To try and combat the fisheye distortion, I hacked together a simple matrix structure that could be used to rotate the vectors generated by the earlier linear projection. The result looks a little less distorted!

The final addition to the raytracer before calling it a day was a spotlight. The spotlight has an origin, like the existing point light - but it adds a direction (in which it points) and an angle to specify how wide the beam is. In fact, there are two angles - if you're inside the inner one, you're fully lit; if you're outside the outer one, you're completely in the dark; if you're between the two then the light's intensity is blended accordingly.
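The two-angle blend can be sketched as follows. This assumes the angles are in radians, measured from the beam's centre line, and that the blend between them is linear; it uses `System.Numerics.Vector3` for brevity rather than the raytracer's own vector type, and the `Spotlight` class is a hypothetical name.

```csharp
using System;
using System.Numerics;

public static class Spotlight
{
    // Returns 1 inside the inner cone, 0 outside the outer cone and a
    // linear blend in between. The point must not coincide with the light.
    public static float Intensity(Vector3 lightPosition, Vector3 lightDirection,
                                  Vector3 point, float innerAngle, float outerAngle)
    {
        Vector3 toPoint = Vector3.Normalize(point - lightPosition);
        float cos = Vector3.Dot(Vector3.Normalize(lightDirection), toPoint);

        // Clamp before Acos to guard against rounding just outside [-1, 1].
        float angle = (float)Math.Acos(Math.Max(-1f, Math.Min(1f, cos)));

        if (angle <= innerAngle) return 1f;
        if (angle >= outerAngle) return 0f;
        return (outerAngle - angle) / (outerAngle - innerAngle);
    }
}
```

The returned value would simply scale the light's contribution at the struck point, alongside the usual shadow test.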

In the above screenshot, a spotlight is shining down and backwards away from the camera towards a grid of spheres.

If you're interested in an extremely slow, buggy, and generally badly written raytracer, I've uploaded the C# 3 source and project file. The majority of the maths code has been pinched and hacked about from various sites on the internet, and there are no performance optimisations in place. I do not plan on taking this project any further.

Building and running the project will result in the above image, though you may well wish to put the kettle on and make yourself a cup of tea whilst it's working.

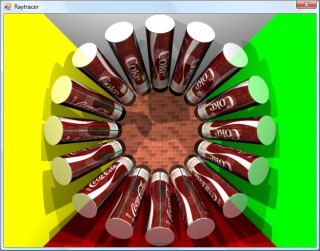

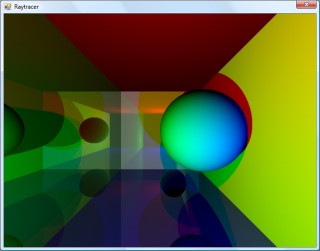

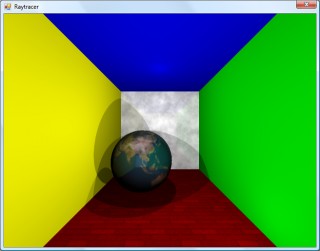

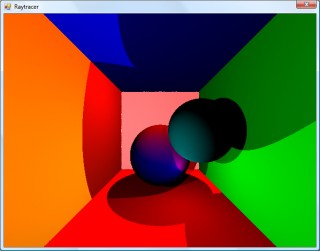

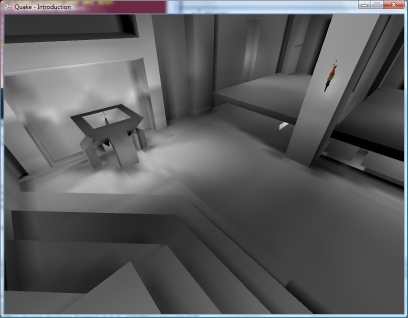

Cylinders and translucent surfaces

Tuesday, 29th April 2008

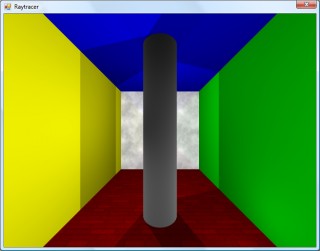

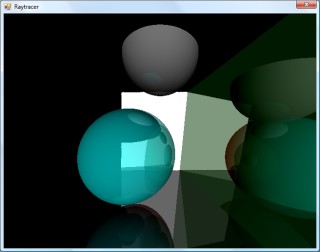

The first addition to the raytracer was a cylindrical surface, represented by two end points and a radius. In the two screenshots above, the cylinder is infinitely long - not very useful. However, by calculating the point on the cylinder's axis that is closest to the struck point on its surface you can work out how far along its axis you are, and from that whether you are between the cylinder's two ends.

The cylinder can have its ends optionally capped. To add the caps, you can create a plane that has a normal that points in the direction of the cylinder's axis. If you collide with the plane, you can then calculate the distance between the point you struck on it and the end coordinate of the cylinder. If this distance is smaller than the radius of the cylinder, you've hit one of the end caps.
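Both tests boil down to a few lines of vector arithmetic. The following is a minimal sketch using `System.Numerics.Vector3` (an assumption; the raytracer has its own vector type), with hypothetical method names.

```csharp
using System;
using System.Numerics;

public static class CylinderMaths
{
    // How far along the axis the point closest to 'hit' lies:
    // 0 at 'start', 1 at 'end', outside that range beyond either end.
    public static float AxisFraction(Vector3 start, Vector3 end, Vector3 hit)
    {
        Vector3 axis = end - start;
        return Vector3.Dot(hit - start, axis) / axis.LengthSquared();
    }

    // True if the struck point lies between the cylinder's two ends.
    public static bool IsBetweenEnds(Vector3 start, Vector3 end, Vector3 hit)
    {
        float t = AxisFraction(start, end, hit);
        return t >= 0f && t <= 1f;
    }

    // True if a point struck on the cap's plane lies within the cap itself.
    public static bool HitsCap(Vector3 capCentre, float radius, Vector3 planeHit)
    {
        return Vector3.DistanceSquared(capCentre, planeHit) < radius * radius;
    }
}
```

Comparing squared distances avoids a square root per test, which adds up when every ray is checked against every cap.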

Texturing the cylinders proved rather difficult. The v component of the texture coordinate can be calculated by working out how far along the cylinder's axis you are - easy. However, to wrap a texture around the rotational axis of the cylinder is a bit more complicated. In the first screenshot, I simply used Math.Atan2(z,x) to get the angle, and hence texture coordinate - but this only works if the cylinder points along the y axis. If I had another vector that lay perpendicular to the cylinder's axis I could use the dot product to work out its angle, but I don't. The cross product could generate one, but I'd need another vector to cross the axis with... In the end, this post came to my rescue, and I managed to get it working for cylinders pointing along any axis - producing the second screenshot.

An addition I've wanted to make for a while was support for translucent surfaces.

This required a few changes to the structure of the raytracer. Previously, all methods for calculating ray intersections had to return a single Collision object, which contained a boolean flag specifying whether the collision was successful. A translucent sphere would need to return two collision points - one as the ray enters the front and one as it leaves the back of its surface. To this end, all collision detection methods now return an array (empty or null if no collisions were made), and each collision has a flag indicating whether the collision was made by the ray entering the solid or leaving the solid (this is required, for example, to invert surface normals for correct shading).

Once the nearest struck point to the camera has been handled (including recursive reflection-handling code) it is checked to see if it's on a translucent surface. If so, the raytracer continues raytracing away from the camera, and blends the new result with the existing result based on its opacity. By stopping and starting again, one can adjust the direction of the ray - for example, to add a refraction effect (which I have not yet got working).

One trick the above image misses is that it's still simply scaling down the intensity of the light by the opacity of the surface it passes through. It would look nicer if the light was coloured by the surface it passes through; so, in the above example, the white light shining through the blue water on the sphere should cast blue-tinted shadows.

Whilst it's far from being real-time, I can still make it dump out a sequence of frames to create a basic animation.

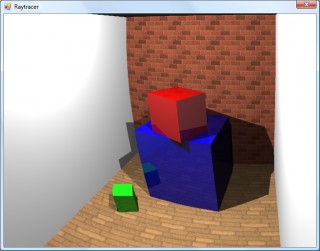

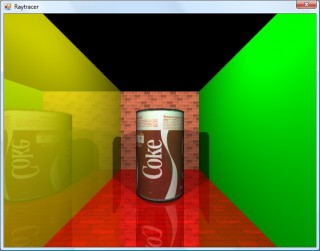

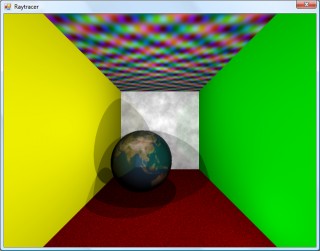

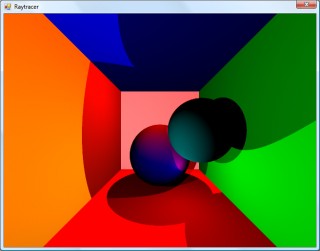

Fixed reflections and proper textures

Sunday, 27th April 2008

How long does that scene take to render?

Just over a minute, so not very good performance at all. I've made some changes since then (including multithreading) that drop it down to about 30 seconds.

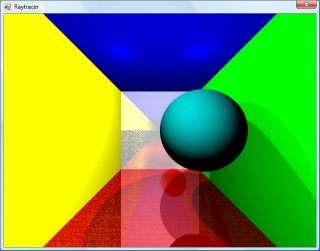

I decided to rewrite the main raycasting code from scratch, after seeing results such as the above. I'm not sure where the speckles were coming from, nor why the reflections were being calculated incorrectly. The new code writes to regions of an array of integers (for 32-bit ARGB output), and is designed much more simply. By splitting the output buffer into two halves I can perform the raytracing in two threads, which makes much better use of modern dual-core CPUs.

A scene with lots of reflective spheres would seem like a good test. If you look at the reflections in the outer ring of spheres, they're quite different (and now appear to be correct), so whatever was wrong seems to have been fixed.

A scene with multiple reflective planes no longer appears to have the noise and reflection bugs that were clearly visible in the first screenshot in this entry.

Textures would certainly make the objects look a bit more interesting, but I couldn't think of a simple way of aligning a texture to a surface. I decided that textures should be treated as simple 2D rectangles, and each material can now have a diffuse texture applied to it (which provides a method Colour GetColour(Vector2 coordinate) to read it). To attach the texture to the surface of an object the surface needs to implement ITexturable, which exposes the method Vector2 GetTextureCoordinate(Vector3 surfacePoint).

In short, it's the job of the surface class (such as the Sphere or Plane classes) to map the struck point to a texture coordinate. This is most easily handled with the sphere, which simply converts the cartesian coordinates of the struck point to polar coordinates.
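For the sphere, the conversion might look something like this. The sketch assumes the y axis runs through the sphere's poles and that both texture coordinates should end up in the 0 to 1 range; it uses `System.Numerics` types instead of the raytracer's own, and the `SphereMapping` class is a hypothetical name.

```csharp
using System;
using System.Numerics;

public static class SphereMapping
{
    // Maps a point on a sphere's surface to a (u, v) texture coordinate:
    // u wraps around the y axis, v runs from the top pole (0) to the bottom (1).
    public static Vector2 GetTextureCoordinate(Vector3 centre, Vector3 surfacePoint)
    {
        Vector3 n = Vector3.Normalize(surfacePoint - centre);
        double longitude = Math.Atan2(n.Z, n.X);                           // -pi to +pi
        double latitude = Math.Acos(Math.Max(-1.0, Math.Min(1.0, n.Y)));   // 0 to pi
        return new Vector2(
            (float)(longitude / (2 * Math.PI) + 0.5),
            (float)(latitude / Math.PI));
    }
}
```

A texture mapped this way has a visible seam where the longitude wraps round and is pinched at the poles, as on a globe.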

For planes, I thought that the easiest way of aligning the texture would be to declare two vectors - one that represents the texture's X axis and one that represents the texture's Y axis.

For example, take the white wall at the back of the room in the above screenshot. To align a texture parallel to its surface, one could set the texture's X axis vector to point right and its Y axis vector to point down. By changing the magnitude of these vectors the texture can be scaled.

For the floor in the above image, the texture's X axis points right, and its Y axis points into the screen.
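One plausible way to turn the two axis vectors into a texture coordinate is to take the dot product of the struck point with each of them. This is a sketch under that assumption (the original source may do it differently), again using `System.Numerics` types and a hypothetical class name.

```csharp
using System;
using System.Numerics;

public static class PlaneMapping
{
    // Projects the struck point onto the texture's X and Y axis vectors.
    public static Vector2 GetTextureCoordinate(Vector3 surfacePoint,
                                               Vector3 textureXAxis, Vector3 textureYAxis)
    {
        return new Vector2(
            Vector3.Dot(surfacePoint, textureXAxis),
            Vector3.Dot(surfacePoint, textureYAxis));
    }
}
```

Under this scheme, doubling the length of an axis vector doubles the coordinate it produces, so the texture repeats twice as often along that direction.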

As the texture merely has to provide a method that takes in a texture coordinate and outputs a colour, this lets us declare simple procedural textures.

The rather garish ceiling is declared like this in code:

    this.Tracer.Objects.Add(new WorldObject() {
        Surface = new Plane(Vector3.Down, 10.0d) {
            TextureXAxis = Vector3.Right,
            TextureYAxis = Vector3.Forward,
        },
        Material = new Material() {
            Colour = Colour.White,
            Texture = new ProceduralTexture(
                p => new Colour(
                    1.0d,
                    (Math.Sin(p.X) * Math.Cos(p.Y * 2)) / 2.0d + 0.5d,
                    (Math.Cos(p.X) * Math.Sin(p.Y * 3)) / 2.0d + 0.5d,
                    (Math.Sin(p.X * 5) * Math.Sin(p.Y / 0.3d)) / 2.0d + 0.5d
                )
            ),
        },
    });

I think before I go any further I'm going to need to support a wider variety of surfaces than spheres and planes. Another limitation with the existing implementation is that only a single collision between a ray and a surface is reported, which limits what can be done with the renderer - for example, a glass sphere that refracts a ray that passes through it would need to report two collisions, one for the front of the sphere as the ray passes through and again one for the back as the ray leaves.

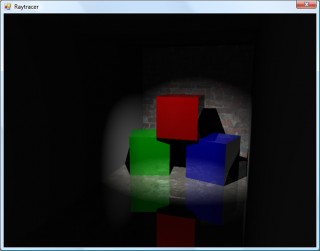

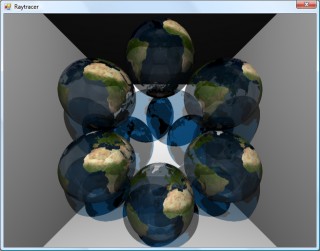

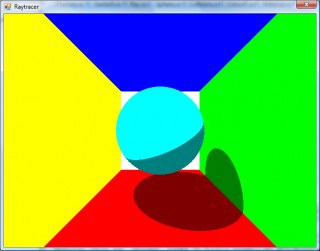

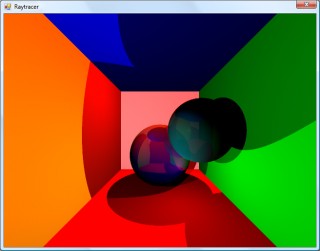

Raytraced shadows, reflections and chessboards

Saturday, 26th April 2008

I thought that better lighting might help the scene look a bit nicer, so decided to remove all the existing lighting code (and reflection code, to make life easier) and try to add some basic shadowing.

When a ray's intersection with the world is found, a ray is cast back from that point towards the light source. If this ray collides with another object on its return trip to the light, it's assumed that it's in the shade. In the above test, points in shade simply had their diffuse colour divided by two. This still looks rather flat, though.

As we know the surface normal of the surface that has been struck and the direction of the ray that's going between the struck point and the light source, we can work out how much the surface point is facing the light by taking the dot product of the two vectors. When multiplied by the surface's diffuse colour, this results in much smoother lighting.
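The dot-product step can be sketched like so. This assumes a point light, that surfaces facing away from the light receive nothing, and that shadowed points are fully dark (the earlier test merely halved them); the `Lighting` class and its parameters are hypothetical, and `System.Numerics.Vector3` stands in for the raytracer's own vector type.

```csharp
using System;
using System.Numerics;

public static class Lighting
{
    // Returns the factor (0 to 1) by which to scale the surface's diffuse colour.
    public static float Diffuse(Vector3 surfaceNormal, Vector3 struckPoint,
                                Vector3 lightPosition, bool inShadow)
    {
        if (inShadow) return 0f;
        Vector3 toLight = Vector3.Normalize(lightPosition - struckPoint);

        // 1 when the surface faces the light head-on, falling to 0 at a
        // grazing angle; clamped so back-facing surfaces aren't negative.
        return Math.Max(0f, Vector3.Dot(Vector3.Normalize(surfaceNormal), toLight));
    }
}
```

A surface struck at 45 degrees to the light ends up at roughly 71% of its full diffuse colour, which is where the smoother shading comes from.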

That's better, but would look better with multiple lights. I start with a running total colour (initially black), then iterate over a list of lights. If the struck point is in line with the light, I add the surface diffuse colour multiplied by the light colour to this running total. This results in a much more interesting-looking scene.

The edges of the surfaces are rather ugly and noisy, probably due to rounding errors. They are helped if I offset the pixel coordinates by 0.5 (so the rays are shot through the centre of pixels, rather than the top-left corner), but proper supersampling would probably look better.

It does, thankfully, at the expense of making the rendering time four times longer!

I reintroduced the reflective surfaces - those reflections don't look quite right to me, but I can't really tell. More detail in the world might make it easier, so I'd like to add some sort of texturing.

I'm undecided how to handle mapping struck points to texture coordinates. For the moment I'm just XORing together the x, y and z components - if the least significant bit of the result is zero, return half of the diffuse colour, otherwise return the full diffuse colour.
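A minimal version of that XOR trick might look like this. It assumes the components are rounded down to integers first (the entry doesn't say how they are converted), so that squares stay one unit wide either side of zero; the `Chessboard` class is a hypothetical name.

```csharp
using System;

public static class Chessboard
{
    // Scales a diffuse colour component by 0.5 or 1.0 depending on which
    // "square" of a 3D chessboard the struck point falls in.
    public static double Shade(double diffuse, double x, double y, double z)
    {
        int bits = (int)Math.Floor(x) ^ (int)Math.Floor(y) ^ (int)Math.Floor(z);
        return (bits & 1) == 0 ? diffuse / 2.0 : diffuse;
    }
}
```

Because all three components take part, the pattern works on surfaces facing any direction without needing proper texture coordinates.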

Raytracing - Beware of the coder colours

Friday, 25th April 2008

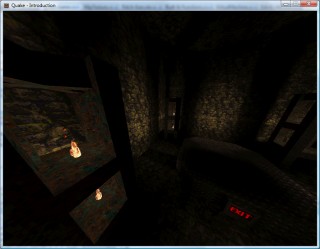

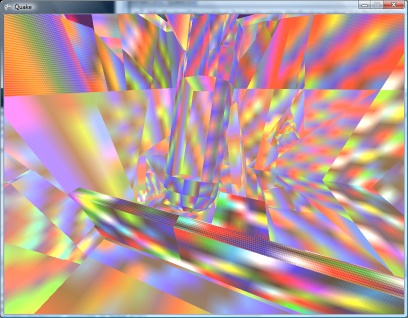

As much as I claim to be interested in software rendering (be it as part of a game engine or as an effect in a demo), I've never actually written a raytracer. Having written some basic vector and plane arithmetic code for physics in the XNA Quake project, I thought I'd give it a stab.

Currently, the world is just a simple List<WorldObject>, where each WorldObject has a Surface and Material property. The Surface has to implement IRayCollidable, which lets me call GetCollision(Ray) on it to find out where a ray strikes it (if at all), returning the point of collision and the normal of the surface that was hit. Currently, there are only two types that implement this interface - Plane and Sphere - but they'll do for testing.

For each ray, I iterate over the list of items in the world and grab the collision point. If a collision is made, I add the details to another list (including the total length of the ray at this point) and, if the surface's material is marked as reflective (ie, has a Reflectivity property greater than zero) I reflect the ray against the surface normal and cast again (recursively, so it's very easy to cause a StackOverflowException when two shiny surfaces are parallel to each other).
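The reflection itself is the standard formula r = d - 2(d·n)n, and the usual guard against runaway recursion is a depth limit. The sketch below shows both; the `MaxDepth` value and the `Bounce` helper are illustrative assumptions, not part of the raytracer described here, and `System.Numerics.Vector3` is used in place of its own vector type.

```csharp
using System;
using System.Numerics;

public static class Reflection
{
    public const int MaxDepth = 8; // Hypothetical limit on recursive bounces.

    // Reflects a ray direction against a unit-length surface normal.
    public static Vector3 Reflect(Vector3 direction, Vector3 normal)
    {
        return direction - 2f * Vector3.Dot(direction, normal) * normal;
    }

    // Follows a ray trapped between two parallel mirrors and returns the
    // depth reached. Without the depth check this would recurse forever.
    public static int Bounce(Vector3 direction, Vector3 normal, int depth)
    {
        if (depth >= MaxDepth) return depth;
        return Bounce(Reflect(direction, normal), -normal, depth + 1);
    }
}
```

`System.Numerics` also provides `Vector3.Reflect`, which computes the same thing; it is written out here to show the arithmetic.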

Once I have a record of all the collisions, I sort them in back-to-front order based on the length of the ray, then iterate over them, blending the colours as I go (so a reflection in a green surface ends up being green tinted).

To try and get a better sense of the 3D scene, I added a simple directional light. This simply takes the dot product of the hit surface normal and the light's direction, then multiplies it by the material's diffuse colour. The above screenshot has a light pointing directly away from the camera, hence the upper and left walls are completely black (however, the bottom and right walls, being reflective, are partially visible).

I've been trying to do this without looking up the correct way of doing it, experimenting as I go - mainly in an attempt to try and patch up my rather poor handle on 3D maths and collision detection.

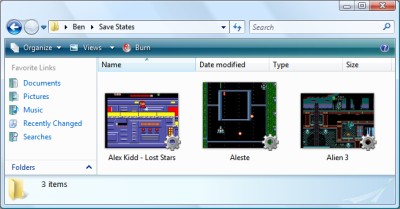

SaveStates and Key Names

Monday, 31st March 2008

It's a good feeling when issue 1 is finally marked as Fixed - in this case it was another interrupt-related bug. The IFF1 flag was being used to mask non-maskable interrupts; I don't think it should be, and making non-maskable interrupts truly non-maskable doesn't seem to have broken anything just yet.
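In outline, the distinction looks like the sketch below. This is a simplified model rather than Cogwheel's code: it assumes interrupt mode 1, ignores pushing the return address to the stack, and the `Z80InterruptState` class is a hypothetical name.

```csharp
using System;

public class Z80InterruptState
{
    public bool IFF1, IFF2;
    public ushort PC;

    // Maskable interrupts are only accepted when IFF1 is set.
    public bool RequestMaskable()
    {
        if (!IFF1) return false;
        IFF1 = IFF2 = false;
        PC = 0x0038; // Mode 1 handler address.
        return true;
    }

    // Non-maskable interrupts are always accepted, whatever IFF1 says.
    public bool RequestNonMaskable()
    {
        IFF1 = false; // IFF2 is left alone so RETN can restore IFF1 from it.
        PC = 0x0066;  // Fixed NMI handler address.
        return true;
    }
}
```

On the Master System the pause button is wired to the NMI line, so a game that has disabled maskable interrupts should still see it.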

I have also changed the savestate format to something that will be a little more backwards compatible rather than a plain BinaryFormatter dump of the entire emulator state. The data is now saved in what is basically an INI file with C#-style attributes and CSS-style url() syntax for binary data.

    [Type(BeeDevelopment.Sega8Bit.Hardware.VideoDisplayProcessor)]
    VideoRam=Dump(Video\VideoRam.bin)
    Address=49165
    WaitingForSecond=False
    AccessMode=ColourRamWrite
    ReadBuffer=32
    Registers=Dump(Video\Registers.bin)
    System=Ntsc
    SupportsMode4=True
    ; ... snip ...

Other changes include a faked fullscreen mode (ie, a maximised borderless window) and an option to retain the aspect ratio of the game, so games no longer appear stretched on widescreen monitors. The sound emulation is a bit better, but still a little noisy in certain games with spurious beeps or buzzes.

One minor problem was on the key configuration control panel. Converting members of the Keys enumeration into displayable strings can be done via .ToString(), but this results in names that do not match the user's keyboard layout (such as OemQuestion for /, OemTilde for # and even Oemcomma for , - note the lowercase c, all on a UK keyboard).

With a prod in the right direction from jpetrie, here's a snippet that can be used to convert Keys into friendly key name strings:

    #region Converting Keys into human-readable strings.

    /// <summary>
    /// Converts a <see cref="Keys"/> value into a human-readable string describing the key.
    /// </summary>
    /// <param name="key">The <see cref="Keys"/> to convert.</param>
    /// <returns>A human-readable string describing the key.</returns>
    public static string GetKeyName(Keys key) {

        // Convert the virtual key code into a scancode (as required by GetKeyNameText).
        int Scancode = MapVirtualKey((int)key, MapVirtualKeyMode.MAPVK_VK_TO_VSC);

        // If that returned 0 (failure) just use the value returned by Keys.ToString().
        if (Scancode == 0) return key.ToString();

        // Certain keys end up being mapped to the number pad by the above function,
        // as their virtual key can be generated by the number pad too.
        // If it's one of the known number-pad duplicates, set the extended bit:
        switch (key) {
            case Keys.Insert:
            case Keys.Delete:
            case Keys.Home:
            case Keys.End:
            case Keys.PageUp:
            case Keys.PageDown:
            case Keys.Left:
            case Keys.Right:
            case Keys.Up:
            case Keys.Down:
            case Keys.NumLock:
                Scancode |= 0x100;
                break;
        }

        // Perform the conversion:
        StringBuilder KeyName = new StringBuilder("".PadRight(32));
        if (GetKeyNameText((Scancode << 16), KeyName, KeyName.Length) != 0) {
            return KeyName.ToString();
        } else {
            return key.ToString();
        }
    }

    /// <summary>
    /// Retrieves a string that represents the name of a key.
    /// </summary>
    /// <param name="lParam">Specifies the second parameter of the keyboard message (such as <c>WM_KEYDOWN</c>) to be processed.</param>
    /// <param name="lpString">Pointer to a buffer that will receive the key name.</param>
    /// <param name="size">Specifies the maximum length, in TCHAR, of the key name, including the terminating null character. (This parameter should be equal to the size of the buffer pointed to by the lpString parameter).</param>
    /// <returns>The length of the returned string.</returns>
    [DllImport("user32.dll")]
    static extern int GetKeyNameText(int lParam, StringBuilder lpString, int size);

    /// <summary>
    /// Translates (maps) a virtual-key code into a scan code or character value, or translates a scan code into a virtual-key code.
    /// </summary>
    /// <param name="uCode">Specifies the virtual-key code or scan code for a key. How this value is interpreted depends on the value of the <paramref name="uMapType"/> parameter.</param>
    /// <param name="uMapType">Specifies the translation to perform. The value of this parameter depends on the value of the <paramref name="uCode"/> parameter.</param>
    /// <returns>Either a scan code, a virtual-key code, or a character value, depending on the value of <paramref name="uCode"/> and <paramref name="uMapType"/>. If there is no translation, the return value is zero.</returns>
    [DllImport("user32.dll")]
    static extern int MapVirtualKey(int uCode, MapVirtualKeyMode uMapType);

    enum MapVirtualKeyMode {
        /// <summary>uCode is a virtual-key code and is translated into a scan code. If it is a virtual-key code that does not distinguish between left- and right-hand keys, the left-hand scan code is returned. If there is no translation, the function returns 0.</summary>
        MAPVK_VK_TO_VSC = 0,
        /// <summary>uCode is a scan code and is translated into a virtual-key code that does not distinguish between left- and right-hand keys. If there is no translation, the function returns 0.</summary>
        MAPVK_VSC_TO_VK = 1,
        /// <summary>uCode is a virtual-key code and is translated into an unshifted character value in the low-order word of the return value. Dead keys (diacritics) are indicated by setting the top bit of the return value. If there is no translation, the function returns 0.</summary>
        MAPVK_VK_TO_CHAR = 2,
        /// <summary>uCode is a scan code and is translated into a virtual-key code that distinguishes between left- and right-hand keys. If there is no translation, the function returns 0.</summary>
        MAPVK_VSC_TO_VK_EX = 3,
        MAPVK_VK_TO_VSC_EX = 4,
    }

    #endregion

It uses P/Invoke and the Win32 API so is only suitable for use on Windows.

Fun with IThumbnailProvider

Friday, 28th March 2008

Note: I have been informed that the code below no longer works in Windows 7 due to changes in the way IThumbnailProvider operates. It is recommended that you use unmanaged code instead of the managed solution presented below.

I have started releasing Cogwheel binaries on its project page, so if you'd like a look at the project but can't be bothered to check out and build the source yourself you can now give it a whirl.

One of the newer additions is a savestate mechanism; this is a very lazy bit of code on my behalf as all it does currently is serialise the entire emulator to a file using the BinaryFormatter. This resulted in savestates weighing in at about 6MB; by marking certain private fields (such as look-up tables in the Z80 emulator) as [NonSerialized] it was down to 2MB. To squash it down to the current ~250KB size the savestate is compressed using the zip file classes I've written to handle loading ROMs from zips.

Whilst this is going to change soon (I'm currently working on a simple INI file serialiser, so the savestate files will be compatible with later releases of the software) I decided to experiment with the idea of dumping extra data into the savestate - namely, a screenshot.

The screenshot is simply saved as Screenshot.png in the root of the savestate's zip archive. Creating a thumbnailer is extremely easy under Vista, and as the thumbnailer runs out-of-process you can use .NET code! Here's a quick and dirty run-down of how to make them if you decide to write one yourself.

Setting up the project

Create a new class library project in Visual Studio, then switch to its project properties editor. On the Application tab, set Target Framework to something sensible (I currently try and keep everything at .NET 2.0 level), then click on the Assembly Information button and tick the Make assembly COM-Visible box.

Finally, move to the Signing tab, and tick the box marked Sign the assembly. From the drop-down box, pick New, which will create a new key file and add it to the project (this is required later for COM registration).

Add the COM interface wrappers

This is a simple copy and paste job! Just bung this in a source file somewhere:

    using System;
    using System.Runtime.InteropServices;
    using System.Runtime.InteropServices.ComTypes;

    namespace Thumbnailer {

        /// <summary>
        /// Defines the format of a bitmap returned by an <see cref="IThumbnailProvider"/>.
        /// </summary>
        public enum WTS_ALPHATYPE {
            /// <summary>
            /// The bitmap is an unknown format. The Shell tries nonetheless to detect whether the image has an alpha channel.
            /// </summary>
            WTSAT_UNKNOWN = 0,
            /// <summary>
            /// The bitmap is an RGB image without alpha. The alpha channel is invalid and the Shell ignores it.
            /// </summary>
            WTSAT_RGB = 1,
            /// <summary>
            /// The bitmap is an ARGB image with a valid alpha channel.
            /// </summary>
            WTSAT_ARGB = 2,
        }

        /// <summary>
        /// Exposes a method for getting a thumbnail image.
        /// </summary>
        [ComVisible(true), Guid("e357fccd-a995-4576-b01f-234630154e96"), InterfaceType(ComInterfaceType.InterfaceIsIUnknown)]
        public interface IThumbnailProvider {
            /// <summary>
            /// Retrieves a thumbnail image and alpha type.
            /// </summary>
            /// <param name="cx">The maximum thumbnail size, in pixels. The Shell draws the returned bitmap at this size or smaller. The returned bitmap should fit into a square of width and height <paramref name="cx"/>, though it does not need to be a square image. The Shell scales the bitmap to render at lower sizes. For example, if the image has a 6:4 aspect ratio, then the returned bitmap should also have a 6:4 aspect ratio.</param>
            /// <param name="hBitmap">When this method returns, contains a pointer to the thumbnail image handle. The image must be a device-independent bitmap (DIB) section and 32 bits per pixel. The Shell scales down the bitmap if its width or height is larger than the size specified by cx. The Shell always respects the aspect ratio and never scales a bitmap larger than its original size.</param>
            /// <param name="bitmapType">Specifies the format of the output bitmap.</param>
            void GetThumbnail(int cx, out IntPtr hBitmap, out WTS_ALPHATYPE bitmapType);
        }

        /// <summary>
        /// Provides a method used to initialize a handler, such as a property handler, thumbnail provider, or preview handler, with a file stream.
        /// </summary>
        [ComVisible(true), Guid("b824b49d-22ac-4161-ac8a-9916e8fa3f7f"), InterfaceType(ComInterfaceType.InterfaceIsIUnknown)]
        public interface IInitializeWithStream {
            /// <summary>
            /// Initializes a handler with a file stream.
            /// </summary>
            /// <param name="stream">Pointer to an <see cref="IStream"/> interface that represents the file stream source.</param>
            /// <param name="grfMode">Indicates the access mode for <paramref name="stream"/>.</param>
            void Initialize(IStream stream, int grfMode);
        }
    }

(You may wish to set the namespace to something more appropriate). As you can see, most of that source file is documentation.

Create your thumbnailer class

The first thing you'll need to do here is to generate a GUID for your thumbnailer. Once the thumbnailer is registered, this GUID tells Windows which COM object to create an instance of and call to generate a thumbnail (the GUID of your thumbnailer is attached to the extension of the file via standard file associations - more on that later).

Your thumbnailer class should implement two interfaces; IThumbnailProvider (obviously!) and IInitializeWithStream. Here's a skeleton class for the thumbnailer:

    using System;
    using System.Drawing;
    using System.Drawing.Drawing2D;
    using System.IO;
    using System.Runtime.InteropServices;
    using System.Runtime.InteropServices.ComTypes;

    namespace Thumbnailer {

        [ComVisible(true), ClassInterface(ClassInterfaceType.None)]
        [ProgId("YourApp.ThumbnailProvider"), Guid("YOUR-GUID-IN-HERE")]
        public class ThumbnailProvider : IThumbnailProvider, IInitializeWithStream {

            #region IInitializeWithStream

            private IStream BaseStream { get; set; }

            public void Initialize(IStream stream, int grfMode) {
                this.BaseStream = stream;
            }

            #endregion

            #region IThumbnailProvider

            public void GetThumbnail(int cx, out IntPtr hBitmap, out WTS_ALPHATYPE bitmapType) {

                hBitmap = IntPtr.Zero;
                bitmapType = WTS_ALPHATYPE.WTSAT_UNKNOWN;

                try {
                    // Thumbnailer code in here...
                } catch { } // A dirty cop-out.
            }

            #endregion
        }
    }

You will probably want to set the ProgId to something meaningful, and make sure you set the GUID to the one you just generated.

What will happen is that Windows will first initialise your object by calling IInitializeWithStream.Initialize(), passing in an IStream. The above implementation stores the IStream in a member property for future reference.

Windows will then call IThumbnailProvider.GetThumbnail(). cx is the maximum size of the thumbnail (width and height) you should return; Windows will scale your thumbnail down if you return one that is too large. Do not scale your thumbnail up to match this value; it is perfectly valid to return one that is smaller than the requested value. Also, do not scale your thumbnail up to a square: you should return it at the same aspect ratio as the source image.
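The sizing rules above boil down to a little arithmetic. Here's a minimal sketch of one way to work out the dimensions to return; the ThumbnailSizing class and its Fit() method are hypothetical names of my own for illustration, not part of the Shell API:

```csharp
using System;

public static class ThumbnailSizing
{
    // Works out the largest size that keeps the source's aspect ratio and
    // fits inside a cx-by-cx square, without ever enlarging the source.
    public static void Fit(int sourceWidth, int sourceHeight, int cx,
                           out int width, out int height)
    {
        if (sourceWidth <= 0 || sourceHeight <= 0 || cx <= 0)
            throw new ArgumentOutOfRangeException();

        int longest = Math.Max(sourceWidth, sourceHeight);

        // Already small enough: return the image at its original size.
        if (longest <= cx)
        {
            width = sourceWidth;
            height = sourceHeight;
            return;
        }

        // Scale both sides by cx / longest (integer maths, rounding down),
        // making sure neither side collapses to zero.
        width = Math.Max(1, (int)((long)sourceWidth * cx / longest));
        height = Math.Max(1, (int)((long)sourceHeight * cx / longest));
    }
}
```

For example, a 256x192 screenshot requested at cx = 96 comes out at 96x72, whereas the same screenshot requested at cx = 1024 stays at 256x192.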

For the moment, and for the sake of testing, here's a snippet that will create a bright red thumbnail using GDI+:

    using (var Thumbnail = new Bitmap(cx, cx)) {
        using (var G = Graphics.FromImage(Thumbnail)) {
            G.Clear(Color.Red);
        }
        hBitmap = Thumbnail.GetHbitmap();
    }

Registration

If you compile your class library at this point you should end up with a single DLL. You need to register this DLL using the command-line tool RegAsm.exe that comes with the .NET framework.

Open an elevated command prompt (you need admin rights for this bit) and set the working directory to the output directory of your DLL. Now, invoke the following command:

    %windir%\Microsoft.NET\Framework\v2.0.50727\RegAsm /codebase YourThumbnailer.dll

That's half of the battle; the last bit boils down to conventional file associations.

Run the registry editor, and open the HKEY_CLASSES_ROOT key. You will see a list of keys representing file extensions; find one (or create a new one) to match the extension that you wish to attach your thumbnailer to. Under that create a new key named shellex, and under that create another key named {e357fccd-a995-4576-b01f-234630154e96}. Set its (Default) value to {YOUR-GUID-IN-HERE} - yes, the GUID you created earlier. That should look something like this:

- HKEY_CLASSES_ROOT
  - .yourextension
    - shellex
      - {e357fccd-a995-4576-b01f-234630154e96} = {YOUR-GUID-IN-HERE}

That's it! You may need to log out then in again (and/or reboot and/or just kill all Explorer instances and restart them) for Explorer to catch on if nothing seems to be working.

A final note: IStream to Stream

The final hitch is that IStream is not the same as our beloved .NET Stream. I use the following snippet to dump all of the contents of an IStream into an array of bytes (which can then be converted to a stream using new MemoryStream(byte[]) if need be).

    private byte[] GetStreamContents() {

        if (this.BaseStream == null) return null;

        // Ask the stream how large it is; 1 is STATFLAG_NONAME (don't fetch the name).
        System.Runtime.InteropServices.ComTypes.STATSTG statData;
        this.BaseStream.Stat(out statData, 1);

        byte[] Result = new byte[statData.cbSize];

        // IStream.Read() writes the number of bytes actually read to this pointer.
        IntPtr P = Marshal.AllocCoTaskMem(Marshal.SizeOf(typeof(UInt64)));
        try {
            this.BaseStream.Read(Result, Result.Length, P);
        } finally {
            Marshal.FreeCoTaskMem(P);
        }
        return Result;
    }

This, naturally, is not a good idea if you're thumbnailing very large files, as it dumps the entire thing into memory!

For more information, take a look at MSDN: Thumbnail Providers, which includes useful information (including how to change the overlay icon in the bottom-right of your thumbnails or the adornments).

The COM wrappers and GetStreamContents() snippet are based on this uberdemo article.

Finally, another screenshot; you can now load IPS patch files directly into Cogwheel using the advanced ROM load dialog - which can be useful for translations.

Sound, at long last.

Friday, 7th March 2008

I have finally got around to adding sound to Cogwheel using Ianier Munoz's waveOut API wrapper.

The technique used is fairly simple. I start with a sound buffer that is a whole number of video frames in length (1/60th of a second is one frame) - four seems a good number. This buffer needs to be periodically topped up with sound samples (every four frames in the above example).
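To put some numbers on that, here's a small sketch of the buffer arithmetic. It assumes 44100Hz, 16-bit stereo output at 60 frames per second; the SoundBufferMath class and its method names are made up for illustration and are not part of Cogwheel or the waveOut wrapper:

```csharp
using System;

public static class SoundBufferMath
{
    // Number of samples (per channel) that cover one video frame.
    public static int SamplesPerFrame(int sampleRate, int framesPerSecond)
    {
        return sampleRate / framesPerSecond;
    }

    // Size in bytes of a buffer holding a whole number of frames of
    // 16-bit audio across the given number of channels.
    public static int BufferSizeInBytes(int sampleRate, int framesPerSecond,
                                        int channels, int frames)
    {
        return SamplesPerFrame(sampleRate, framesPerSecond)
             * channels * frames * sizeof(short);
    }
}
```

With those assumptions, one frame is 735 samples per channel, and a four-frame stereo buffer works out at 735 x 2 x 4 x 2 = 11760 bytes.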

I run the emulator for one frame, then generate a frame's worth of audio. I add these samples to a queue. The sound callback then periodically dequeues these samples and appends them to its buffer.

    // This is called once every video frame.
    // 735 samples at 44100Hz = 1/60th second.
    // (Multiplied by two for stereo).
    this.Emulator.RunFrame();
    short[] Buffer = new short[735 * 2];
    this.Emulator.Sound.CreateSamples(Buffer);
    this.GeneratedSoundSamples.Enqueue(Buffer);

The important thing is that the sound is always generated after the video frame (and thus after any hardware writes). I log writes to the sound hardware over the period of a frame (along with the number of CPU cycles that have elapsed), then space them out when generating the sound samples so that they play in synch. My previous problems were caused by the sound emulation trying to "look ahead" past what had already been generated.
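As a rough illustration of how a logged write can be spaced out across a frame's worth of samples, the sketch below maps the CPU cycle count recorded with a write to the index of the sample at which that write should take effect. This is not Cogwheel's actual code; SoundWriteTiming, CycleToSampleIndex() and the parameter names are hypothetical, and the number of CPU cycles per frame depends on the machine being emulated:

```csharp
using System;

public static class SoundWriteTiming
{
    // Maps a cycle count within a frame to a sample index within that
    // frame's buffer, so a write made halfway through the frame lands
    // halfway through the generated samples.
    public static int CycleToSampleIndex(int cycle, int cyclesPerFrame,
                                         int samplesPerFrame)
    {
        if (cyclesPerFrame <= 0 || samplesPerFrame <= 0)
            throw new ArgumentOutOfRangeException();

        long index = (long)cycle * samplesPerFrame / cyclesPerFrame;

        // Clamp so that a write logged on (or just past) the frame
        // boundary still falls inside this frame's buffer.
        if (index < 0) index = 0;
        if (index >= samplesPerFrame) index = samplesPerFrame - 1;
        return (int)index;
    }
}
```

When generating the frame's samples you would then walk through the log in order, applying each write to the sound hardware state just before producing the sample at its index.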

However, there is a potential problem with this - as the video and sound emulation are not locked in synch with each other, there are two cases that could crop up:

- The emulator runs faster than 60Hz, generating too many sound samples.

- The emulator runs slower than 60Hz, not generating enough sound samples.

The first is the easiest to deal with. In most instances you'd want a couple of extra frames of sound data left in the queue after topping up the sound buffer, in case not enough are generated in the next period. However, if I notice that the queue is longer than the entire sound buffer after topping it up, I trim it back down to that length. This would make the sound a little choppy, but so far this hasn't happened in my tests.

The latter is a little more complex. If I just left it, the sound buffer would have gaps in it, causing noticeable pops (this I have noticed in some of the more processor-intensive games). To cover up the gaps, I generate enough extra frames of sound data to fill the gap. As no sound hardware writes are made, this has the effect of extending any tones that were currently playing, so the sound will play back slightly out of time. However, slightly out of time by a few 60ths of a second is a better solution than a pop.

    // This is called when the sound buffer needs topping up.
    // That's about once every four frames.
    private void SoundBufferFiller(IntPtr data, int size) {

        // Temporary buffer to store the generated samples.
        short[] Generated = new short[size / 2];

        for (int i = 0; i < Generated.Length; i += 735 * 2) {
            if (this.GeneratedSoundSamples.Count > 0) {
                // We've already queued up some sound samples.
                Array.Copy(this.GeneratedSoundSamples.Dequeue(), 0, Generated, i, 735 * 2);
            } else {
                // Erk, we're out of samples... force generate some more and use those instead.
                // (This avoids popping).
                short[] Temp = new short[735 * 2];
                this.Emulator.Sound.CreateSamples(Temp);
                Array.Copy(Temp, 0, Generated, i, 735 * 2);
            }
        }

        // Copy to the sound buffer.
        Marshal.Copy(Generated, 0, data, size / 2);

        // If too many samples are being generated (FPS > 60Hz) then make sure it doesn't go out of control.
        while (this.GeneratedSoundSamples.Count > this.SoundBufferSizeInFrames) this.GeneratedSoundSamples.Dequeue();
    }

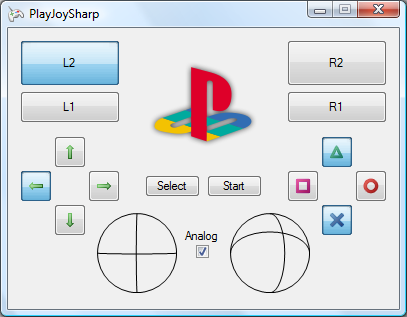

PlayStation Controllers

Monday, 4th February 2008

PlayStation controllers are relatively comfortable, and I have a number of them knocking about.

From top to bottom - PS2 IR remote control and receiver; Guitar Hero wireless Kramer and receiver; black PS2 DualShock 2 analogue joypad; a pair of grey standard PS1 digital joypads.

As I've learned in the past, a decent gamepad can help with certain games. Of course, what's much more fun than playing the games is trying to work out how these controllers work.

The byte-level protocol is very simple; the PlayStation pulls a select line low (used to grab the attention of the controller) then pulses the clock eight times, writing a bit at a time onto one line and reading another bit at a time from another. This means that the controller and PlayStation end up sending and receiving a byte simultaneously. Finally, the PlayStation checks to see if the controller pulls the acknowledge line low to indicate that it received the data; if no acknowledgement is received it assumes that there is no controller on the port it is currently accessing.
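The simultaneous send and receive can be sketched in a few lines. This is only an illustration of the idea, not the code used later: PsxByteExchange and the clockBit callback are hypothetical names, the callback stands in for whatever actually drives the port (put one bit on the command line, pulse the clock, return the bit sampled from the data line), and the sketch assumes bits are transferred least significant bit first. The select and acknowledge lines are left out.

```csharp
using System;

public static class PsxByteExchange
{
    // Swaps one byte with the controller. clockBit is called eight times,
    // once per clock pulse: it is given the outgoing bit and must return
    // the incoming bit read during that pulse.
    public static byte Exchange(byte toSend, Func<bool, bool> clockBit)
    {
        byte received = 0;
        for (int bit = 0; bit < 8; bit++)
        {
            // Assumption: least significant bit goes out first.
            bool outgoing = (toSend & (1 << bit)) != 0;
            bool incoming = clockBit(outgoing);
            if (incoming) received |= (byte)(1 << bit);
        }
        return received;
    }
}
```

Keeping the port access behind a callback means the loop can be exercised against a simulated controller before any hardware is connected.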

All electrical connections are unidirectional, and so a controller can be easily connected to a standard PC's parallel port. There are a number of diagrams floating around the internet using similar pin connections, so I followed one of those.

I cut up a pound-shop parallel cable for the PC end and a controller extension cable for the PlayStation end. PlayStation controllers require power; a lot of diagrams I've seen refer to a 9V and 5V supply, some 7.6V and 3.3V. A voltmeter informs me that it's the latter option. Rather than try and draw power from the parallel port, I'm using a generic power supply set to 7.5V. To derive the 3.3V I'm using a 5V regulator followed by two 1A rectifier diodes in series - the diodes provide a voltage drop of 0.7V across each, resulting in 3.6V.

I wrote an application in C# that attempted to swap bytes back and forth between the PC and the controller, and was getting good results. I was not, however, having any luck polling the acknowledgement line. It didn't appear to ever go low - my guess was that the program simply couldn't poll the parallel port rapidly enough. Not that this is a slur on C#, of course, but to access the parallel port in the first place I need to use an unmanaged library.

The solution was therefore to write an unmanaged library myself that would handle the PlayStation protocol side of things, which I could then wrap up and add nice functionality to via a C# managed library.